AI has been steadily gaining momentum over the past few years and is now advancing rapidly. Far from the niche concept it once was, it is a hot topic and a focus for organizations large and small. What is AI and how is it different from machine learning? How is AI developing? Are we in control of this accelerated innovation? Should we be concerned for our jobs, for our safety, for human civilization? When it comes to AI benefits and threats, does one outweigh the other?

Machine learning, AI, and all that jazz

Artificial intelligence encompasses many things: deep learning, robotics, computer vision, cooperative systems, and machine learning, to name only a few. Although it has been around since the early 1950s, it only began gaining real traction around 2010, partly because of increased interest and investment in the area and partly because supporting data and technologies came together to make further advances possible.

Although AI encompasses many subfields, some are more intelligent than others by design. Machine learning, for example, has been the most widely used until recently, but more intelligent forms of AI are now at the forefront. The terms AI and machine learning are often used interchangeably, which is not always appropriate, because there is a difference between the two.

AI, in this broader sense, is a design that can learn to do more than one specific task, whereas machine learning often refers to a system built for one specific task or problem, which it mostly accomplishes very well. Despite the name “machine learning,” such a system cannot learn beyond what it was programmed to do. AI, on the other hand, adapts to changes in its environment through learning. It can study and process its environment and adapt without human intervention to improve how it functions, emulating humanlike thinking and decision making. This is what makes AI so interesting, but also quite concerning.

Where is AI being used?

Artificial intelligence has the potential to profoundly alter the world as we know it. It is changing how we go about our daily lives, how we work, and how we communicate with one another. AI is already being used in health care (for diagnostics and targeted treatment), entertainment, transportation, education, and robotics. This interaction between humans and machines will become more seamless and tailored as AI progresses and adjusts to individuals’ requirements.

For many consumers, each new piece of automation in the office or the household improves convenience, is cool (far too cool not to have!), and mostly aims to make mundane tasks easier and more efficient. Physical robots, software, and self-service solutions are all helping to achieve this. Mowing the lawn and vacuuming the house have never been easier than with home-friendly robots. After all, who can honestly say that they look forward to that kind of drudgery? With each new version, these intelligent creations get better at their jobs and become more affordable, so that many more people have the opportunity to own one.

Autonomous cars are also something many are looking forward to. Imagine: while you are driven to your destination, you can use those hours more productively.

There is so much happening in this field, from the small to the significant: from Siri, Alexa, and Watson right through to AI in the medical field (robotic surgery, for example).

And what about AI in cybersecurity? It can address cybercrime in ways that traditional methods cannot. On the flip side, however, adding AI to a hacker’s already high-tech toolbox makes defending against cyberattackers harder and accelerates AI-based attacks.

Do the benefits outweigh potential threats?

AI offers many positive innovations, right? We would like to believe that we are in control of these advancements as well as the ones to come. After all, AI could not have arrived at this point without human input and innovation. So, we are in control. Or are we?

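In 2017, Facebook researchers ended an experiment after their negotiation chatbots drifted, without being instructed to, into a shorthand of their own that humans could not readily follow. Reports that the system was shut down out of alarm were overstated, but the episode is one indication that AI can behave in ways its designers did not anticipate, that our control over it may be limited, and that the potential for threat may exist.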

Although many innovations are very cool and, some may think, already incredibly advanced, this is really only the beginning of much greater things. AI’s massive processing power makes it far faster than the human brain at computation. Furthermore, it is quickly progressing into areas that humans have always thought were theirs alone, such as the ability to strategize, infer, make judgments, and even create music. Some research even suggests that robots may be capable of feeling and recognizing emotion.

AI advancements are making possible things that were once deemed pure fiction. It is encouraging that these advancements improve quality of life and advance the human race. But what if we are not as in control of it all as we think we are? Are we our own worst enemy without realizing it? What if AI is used for bad instead of good? What happens if AI gains the upper hand? Many scientifically minded people, including famed physicist Stephen Hawking, have warned that this could become reality. We are faced with the task of managing a mixed environment of human and machine intelligence, both in the workplace and at home.

Many sectors are finding themselves in a situation where they either adapt and get on board or fall by the wayside. Sectors such as finance, for example, are set to experience some of the biggest changes due to AI, but these may be accompanied by painful job losses.

Is the progression of AI dangerous, or do the benefits outweigh the potential for grave harm to humanity? Some say harm is not a matter of if but rather when. It is human nature to want to progress and to challenge boundaries, and this is the reason the human race is where it is today. Could it also be our demise?

Masses of hardware and software engineers are driving the change toward a future built on AI systems. The concern is that this advancement in technology, intended to improve and benefit humanity and our way of life, could become unstoppable and detrimental to the human race. When a machine gets to determine what is right or wrong, or begins to act with a level of awareness on par with that of humans, we may find that we have a problem on our hands. The urgent push for truly autonomous machines could also spell disaster. What happens if, or when, a machine learns to act in the interest of its own survival? Survival of the fittest: humans know this all too well.

Another concern is that large, powerful companies tend to have the greatest influence on this industry and its innovation. No doubt most strive to use it for good, but given the power they hold, AI innovation is likely to be biased toward their own views and values. Should these companies be allowed to define and instill the values in AI? This has the potential to put AI’s transformational power in the hands of a few elite and already powerful organizations.

While AI technologies and robotics can potentially enhance the quality of human life and offer new prospects, the social ramifications of privacy issues and job losses are worrying for many. Productivity could be boosted across multiple industries and labor costs significantly reduced, so adopting such AI systems is a no-brainer for many industries. This may come at the expense of workers, though. Or perhaps industries will adapt and shift, and workers will learn new skill sets and be valued once again. The risks should be carefully weighed against the benefits.

Successful AI hinges on the right kind of data

It’s not a coincidence that all the sizable AI players have access to masses of good data: Google, Facebook, Microsoft, IBM, and Amazon, for instance. The success of AI hinges on access to the right kind of data, which is to say good data. What is good data? It needs to be current, continuously updated, large in volume, and complete. Furthermore, ample data-processing power is essential to interpret the results. Only then can the full worth of AI algorithms be realized. This is what keeps the giants at the forefront and maintains their lead, power, and influence, whether used for good or bad.

Where are we heading?

Who knows? Ask the robots 😉! One thing is certain: there is no stopping AI or the industries controlling the bulk of this innovation. AI will progress! We will be surprised by the benefits it offers and will accept them eagerly, and we are also likely to face a harsh reality when AI is manipulated for bad. We should approach with caution. Discussions should be had on how to ensure that AI is unbiased and supportive of a global society.

The concerns about AI go beyond whether Alexa or Siri is prying or knows too much about our whereabouts; those worries don’t really belong in the same category. What we should be concerned about is that we are creating AI to think like us, make decisions for us, predict and do things for us, and even, we hope, connect with us emotionally. If we are creating these machines, what values do we want them to have and to portray? We are a very flawed society. The chances are that the machines we create will be just as flawed. If present society and the state of our world are anything to go by, the outlook for AI is not a good one.

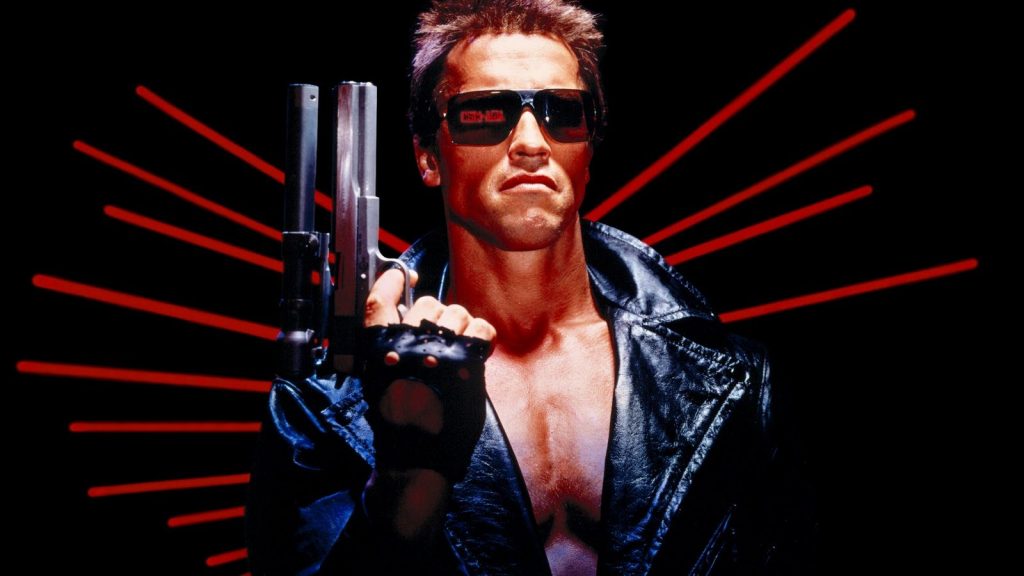

Many unanswered questions exist and many pressing issues need to be addressed. If we get it right, we will have created something awesome, out of this world. If it goes wrong, we will only have ourselves to blame for it. The potential demise of human civilization (as some see the outcome) may be at the hands of “assassin robots,” or more likely machines that have become too smart to need or want humans anymore. If this happens, the blood of humankind will be on its own hands.