Sponsored by Stellar Data Recovery

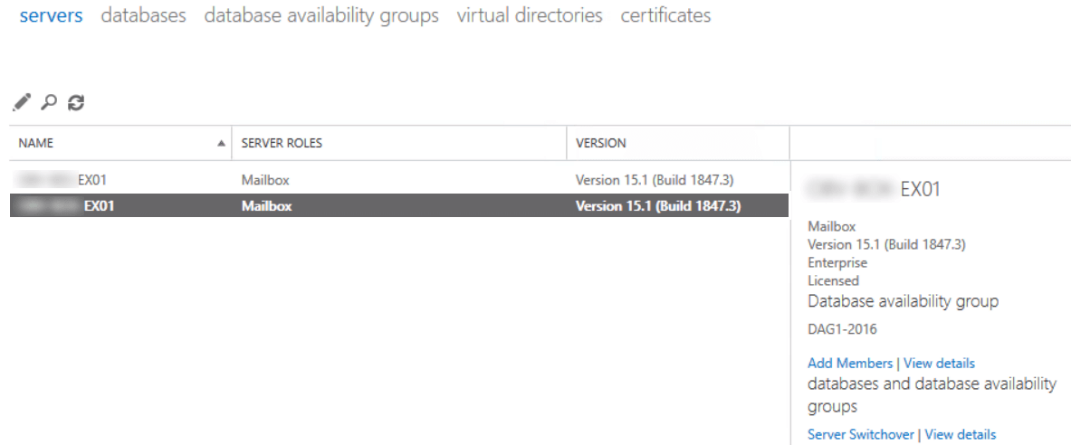

High availability (HA) in its current form was introduced in Exchange 2010 and has since become the mainstay of messaging continuity in organizations. An HA setup relies on the Database Availability Group (DAG) as its critical component for deploying “highly available” messaging services and mailbox databases within a datacenter and across multiple AD sites for site resilience.

A DAG, introduced in Exchange 2010, is a group of up to 16 mailbox servers that host redundant copies of the mailbox databases. These copies allow a database to be moved to another server during a scheduled switchover, or to be recovered automatically through a failover after an unexpected outage of a database, server, or datacenter.

The DAG is created as an empty object in Active Directory, after which mailbox servers are added to it. Adding the first mailbox server automatically creates a dedicated failover cluster for the DAG, and each mailbox server added subsequently is joined to that cluster. The cluster database holds the DAG’s crucial information, including database mount status, replication status, and the active/passive state of each copy. How a DAG behaves when a member server fails is determined by the quorum model of its failover cluster: quorum ensures that a majority of the cluster’s voting members remain functional, so that the DAG can continue to provide “high availability” and “responsiveness.” In a DAG with an even number of members, a file share witness supplies the tie-breaking vote. You can also use a disk majority or, more recently, cloud storage as a witness.

Outage handling in HA: Switchover and failover

There are two mechanisms for handling an outage or downtime in a high availability Exchange organization: switchover and failover. A switchover is used for planned or scheduled downtime of a database, server, or datacenter, typically for maintenance, hardware upgrades, or Windows updates. The administrator initiates it “manually” by using the Exchange Admin Center (EAC) or the Exchange Management Shell, and it moves one or more active database copies to other mailbox servers in the DAG.

In contrast, a failover is an “automatic” response to an unexpected outage caused by the failure of a database, server, or datacenter. Recovery is automated: the active database copies are moved to another mailbox server in the DAG that previously hosted passive copies.

Switchover and failover mechanisms rely on Active Manager, a role that runs inside the Exchange Replication service on all mailbox servers in the DAG, to manage the switching of active database copies to other servers.

The following are the process steps for switchover and failover, particularly in the case of a “database” outage.

Database switchover

The admin uses the EAC or the Exchange Management Shell to initiate a switchover of the active mailbox database copy to another mailbox server in the DAG. The following steps then take place:

- The client sends a Remote Procedure Call (RPC) to the Replication service on the DAG member that holds the Primary Active Manager (PAM*) role.

- The PAM updates the details of the currently active copy of the database in the cluster database.

- The PAM communicates with the Exchange Replication service on the target member, which hosts the passive database copy that is to become the active copy.

- Exchange Replication service on the target server queries the Replication services on all other member servers of the DAG to find out the best log source for the database copy.

- The current active database copy is dismounted, and the Exchange Replication service on the target server copies the remaining logs.

- The Replication service on the target server initiates a database mount request, following which the Information Store service on the server replays the log files and mounts the database.

- The PAM updates the details of the database switchover in the cluster database.

- Any error codes are returned from the Replication service on the target server, through the Replication service on the PAM, to the admin interface.

*The PAM is the Active Manager role that decides which database copies in a DAG are active and which are passive. The PAM role resides on the DAG member that owns the cluster quorum resource.
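Before starting the switchover described above, an administrator would typically confirm that the passive copy on the target server is healthy and caught up on logs. The following is a minimal PowerShell sketch of that check. It assumes input objects carrying the `Status`, `CopyQueueLength`, and `ReplayQueueLength` properties that `Get-MailboxDatabaseCopyStatus` reports; the function name `Test-SwitchoverReady` and its queue thresholds are hypothetical illustrations, not Exchange defaults.

```powershell
# Hypothetical helper: decides whether a passive database copy looks ready to
# become active. The thresholds are illustrative assumptions, not Exchange defaults.
function Test-SwitchoverReady {
    param(
        [Parameter(Mandatory)] [psobject] $CopyStatus,
        [int] $MaxCopyQueue = 10,
        [int] $MaxReplayQueue = 50
    )
    # Only a copy reported as Healthy is considered for activation.
    if ("$($CopyStatus.Status)" -ne 'Healthy') { return $false }

    # Small queues mean few logs are still waiting to be copied or replayed.
    return ($CopyStatus.CopyQueueLength -le $MaxCopyQueue) -and
           ($CopyStatus.ReplayQueueLength -le $MaxReplayQueue)
}

# Example input shaped like one row of Get-MailboxDatabaseCopyStatus output.
$target = [pscustomobject]@{
    Name              = 'DB 2\MB01'
    Status            = 'Healthy'
    CopyQueueLength   = 0
    ReplayQueueLength = 2
}

if (Test-SwitchoverReady -CopyStatus $target) {
    Write-Output "Ready: $($target.Name)"
}
```

On an Exchange server, the input could come from `Get-MailboxDatabaseCopyStatus -Identity "DB 2\MB01"`, and, if the check passes, the switchover itself could be started with `Move-ActiveMailboxDatabase -Identity "DB 2" -ActivateOnServer MB01`.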

Database failover

- The Exchange Information Store service detects a database failure and records the event in the Windows Server crimson channel**.

- The Active Manager on the mailbox server hosting the failed database detects the failure events and requests the database copy status from the other servers in the DAG.

- The servers hosting copies of the mailbox database return their status, after which the PAM determines the best copy of the database and initiates the move of the active copy.

- The PAM updates the new database mount location in the cluster database and sends a request to the Active Manager on the target server to assume the database master role.

- The Active Manager on the target server requests the Exchange Replication service to copy the latest logs from the preceding server and set the mountable flag for the database.

- The Exchange Replication service copies the logs from the server that was previously hosting the active copy.

- The Exchange Information Store service mounts the new active database copy.

**Crimson channel is a category of event logs in Windows Server that records the events associated with a single application or component, which in this case are high availability and replication of mailbox databases.
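The PAM’s choice of the “best copy” in the failover steps above can be approximated as a ranking problem. The sketch below is a deliberately simplified, hypothetical version (the function name `Select-BestDatabaseCopy` is illustrative): it keeps only copies whose status is Healthy and ranks them by copy queue length, then replay queue length, then activation preference. Exchange’s actual best copy and server selection logic weighs additional criteria, so treat this as an illustration rather than a reimplementation.

```powershell
# Hypothetical, simplified stand-in for the PAM's best-copy choice.
# Real Exchange logic checks more attributes than the three used here.
function Select-BestDatabaseCopy {
    param([Parameter(Mandatory)] [psobject[]] $Copies)

    # Keep healthy copies, prefer the most up-to-date one, then the
    # lowest activation preference number as a tie-breaker.
    $Copies |
        Where-Object { "$($_.Status)" -eq 'Healthy' } |
        Sort-Object -Property CopyQueueLength, ReplayQueueLength, ActivationPreference |
        Select-Object -First 1
}

# Illustrative copies of DB 1 after the server hosting its active copy has failed.
$copies = @(
    [pscustomobject]@{ MailboxServer = 'MB01'; Status = 'Failed';  CopyQueueLength = 0; ReplayQueueLength = 0; ActivationPreference = 1 }
    [pscustomobject]@{ MailboxServer = 'MB02'; Status = 'Healthy'; CopyQueueLength = 3; ReplayQueueLength = 0; ActivationPreference = 2 }
    [pscustomobject]@{ MailboxServer = 'MB03'; Status = 'Healthy'; CopyQueueLength = 0; ReplayQueueLength = 1; ActivationPreference = 3 }
)

$best = Select-BestDatabaseCopy -Copies $copies
Write-Output "Activate on: $($best.MailboxServer)"   # Activate on: MB03
```

Ranking on queue lengths first reflects the goal of minimizing data loss: the copy with the fewest outstanding logs needs the least catching up before it can be mounted.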

Given these elaborate and systematic mechanisms, it is easy to assume that a high availability setup can handle any kind of outage and maintain seamless mailbox connectivity.

However, some situations can still disrupt mailbox connectivity in a DAG HA setup, as the following example illustrates.

Consider a DAG with three member servers, MB01, MB02, and MB03, each hosting a copy of three mailbox databases: DB 1, DB 2, and DB 3. Every database is thus replicated to every server. [See Fig. 1]

Note that this DAG has an odd number of members, so it is governed by the Node Majority quorum model.

The DAG is running in a healthy state and providing high availability. The administrator then needs to perform maintenance on MB02 and therefore initiates a switchover, following the steps described earlier. As a result, the active copy of DB 2 moves from MB02 to MB01, and MB02 goes offline. The DAG still maintains high availability with MB01 and MB03, with MB01 now hosting two active database copies, those of DB 1 and DB 2. [See Fig. 2]

Now imagine that while MB02 is down for maintenance, MB01 suffers a sudden hardware failure and crashes. The result is a loss of cluster quorum: the DAG cannot initiate a failover, the cluster fails completely, and the databases are dismounted. This is by design. With only one of the three voting members left, no majority is possible, so the cluster shuts down as a fail-safe. [See Fig. 3]
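Why a single surviving member cannot keep the cluster alive comes down to majority arithmetic: the cluster runs only while more than half of its configured votes are online. The following is a minimal PowerShell sketch under stated assumptions: one vote per member, one extra file share witness vote when the member count is even, and no dynamic quorum adjustment (which newer Windows Server versions can apply). `Test-ClusterQuorum` is a hypothetical name.

```powershell
# Hypothetical helper: static majority-vote check for a DAG's failover cluster.
# Assumes one vote per member, plus a file share witness vote when the member
# count is even. Dynamic quorum (vote adjustment by Windows Server) is ignored.
function Test-ClusterQuorum {
    param(
        [Parameter(Mandatory)] [int] $Members,
        [Parameter(Mandatory)] [int] $MembersOnline,
        [bool] $WitnessOnline = $true
    )
    $useWitness  = ($Members % 2 -eq 0)
    $totalVotes  = $Members + [int]$useWitness
    $onlineVotes = $MembersOnline + [int]($useWitness -and $WitnessOnline)

    # Majority means strictly more than half of all configured votes.
    return $onlineVotes -gt [math]::Floor($totalVotes / 2)
}

# The three-member DAG from the example (MB01, MB02, MB03):
Test-ClusterQuorum -Members 3 -MembersOnline 3   # True  (healthy DAG, Fig. 1)
Test-ClusterQuorum -Members 3 -MembersOnline 2   # True  (MB02 in maintenance, Fig. 2)
Test-ClusterQuorum -Members 3 -MembersOnline 1   # False (MB01 also crashes, Fig. 3)
```

On a live DAG, `Get-DatabaseAvailabilityGroup -Status` reports which members are currently operational (for example, in its OperationalServers property), which is the kind of input such a check would need.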

This leads to an extended outage that lasts until the administrator manually recovers the failed member server, reinstating quorum and restoring the DAG.

A comprehensive guide with detailed instructions on how to recover a failed DAG member is available separately.

In the meantime, and until the HA setup is restored, mailbox connectivity in the organization remains hampered, despite the “availability” of the mailbox database copies. Moreover, mere availability does not guarantee restoration of the “latest mailboxes,” because MB01, the server that crashed, was hosting the active copies of DB 1 and DB 2. There is therefore a significant chance that the copies of these databases on MB01 are in a dirty state.

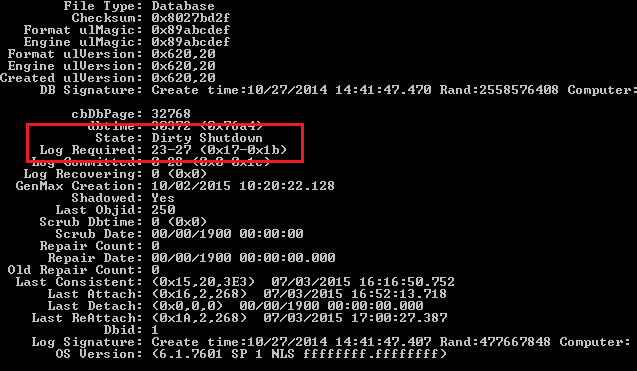

Follow these steps to check the state of the database and restore it to a consistent state:

Step 1: Ascertain the Database State by Using ESEUTIL

You can check the state of a database file by using the ESEUTIL /MH command, which reads the database header while the database is offline (dismounted):

| PowerShell |

| eseutil /mh "C:\DB 1.edb" |

The above command checks the state of the database file named DB 1.edb. In this case, it reports the state as “Dirty Shutdown” and, in the Log Required field, lists the range of log files needed to bring the database to a consistent state. [See Fig. 4]
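When several databases need checking, it can help to extract the two fields that matter here, State and Log Required, from the ESEUTIL /MH output. The following is a minimal sketch; the function name `ConvertFrom-EseutilHeader` is hypothetical, and the regular expressions assume the header’s `Field: value` layout (for example `State: Dirty Shutdown` and `Log Required: 1-3 (0x1-0x3)`), which may vary slightly between ESE versions.

```powershell
# Hypothetical helper: pulls the database state and the required log range
# out of ESEUTIL /MH text output. Assumes "Field: value" header lines.
function ConvertFrom-EseutilHeader {
    param([Parameter(Mandatory)] [string[]] $Lines)

    $text = $Lines -join "`n"

    # e.g. "            State: Dirty Shutdown"
    $state = if ($text -match '(?m)^\s*State:\s*(.+?)\s*$') { $Matches[1] } else { $null }

    # e.g. "     Log Required: 1-3 (0x1-0x3)"; only the decimal range is kept.
    $low = $null; $high = $null
    if ($text -match '(?m)^\s*Log Required:\s*(\d+)-(\d+)') {
        $low  = [int]$Matches[1]
        $high = [int]$Matches[2]
    }

    [pscustomobject]@{
        State           = $state
        LogRequiredLow  = $low
        LogRequiredHigh = $high
        IsClean         = ($state -eq 'Clean Shutdown')
    }
}

# Illustrative excerpt of ESEUTIL /MH output (not a real capture).
$sample = @(
    '            State: Dirty Shutdown',
    '     Log Required: 1-3 (0x1-0x3)'
)
ConvertFrom-EseutilHeader -Lines $sample
```

On the server itself, the output could be passed in directly, for example `ConvertFrom-EseutilHeader -Lines (& eseutil /mh "C:\DB 1.edb")`.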

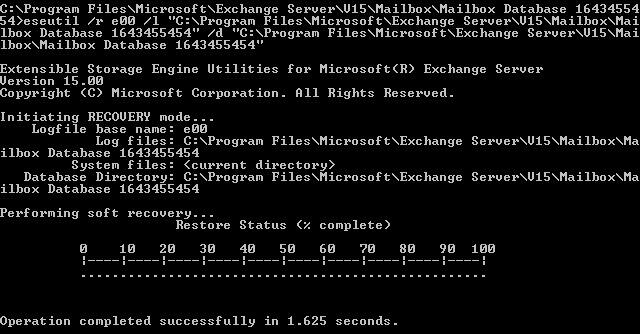

Step 2: Attempt Soft Recovery Using ESEUTIL

Use the ESEUTIL /R command to replay the transaction logs and bring the database to a consistent state. This process is known as soft repair or soft recovery. The following is the syntax of ESEUTIL /R, followed by an example:

| PowerShell |

| ESEUTIL /r <log_prefix> /l <path_to_the_folder_with_log_files> /d <path_to_the_folder_with_the_database> |

Example:

| PowerShell |

| ESEUTIL /r E00 /l "C:\Program Files\Microsoft\Exchange Server\V15\MB01\DB 1" /d "C:\Program Files\Microsoft\Exchange Server\V15\MB01\DB 1" |

This example uses ESEUTIL /R to perform soft recovery of the database named DB 1, whose log files (prefix E00) and database file reside in the same folder. [See Fig. 5]
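To script the soft recovery step, the ESEUTIL /R arguments can be assembled from the log prefix and folder paths shown in the syntax above. The sketch below is hypothetical (`Get-EseutilRecoveryArgs` is an illustrative name): it only builds and validates the argument list, and the commented-out line shows how it might be passed to eseutil.exe on a server where the database is dismounted.

```powershell
# Hypothetical helper: builds the ESEUTIL /R (soft recovery) argument list.
function Get-EseutilRecoveryArgs {
    param(
        [Parameter(Mandatory)] [string] $LogPrefix,
        [Parameter(Mandatory)] [string] $LogPath,
        [Parameter(Mandatory)] [string] $DatabasePath
    )
    # ESEUTIL expects a three-character log file base name such as E00.
    if ($LogPrefix.Length -ne 3) {
        throw "Log prefix must be three characters, got '$LogPrefix'."
    }
    # Each element becomes a separate argument, so PowerShell quotes paths
    # containing spaces (such as "...\DB 1") when calling the executable.
    return @('/r', $LogPrefix, '/l', $LogPath, '/d', $DatabasePath)
}

$folder  = 'C:\Program Files\Microsoft\Exchange Server\V15\MB01\DB 1'
$eseArgs = Get-EseutilRecoveryArgs -LogPrefix 'E00' -LogPath $folder -DatabasePath $folder

# On the Exchange server, with the database dismounted, one could then run:
# & eseutil.exe @eseArgs
# and review its output before rechecking the header with eseutil /mh.
```

Passing the arguments as an array, rather than building one command string, avoids the quoting problems that paths with spaces otherwise cause.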

Next, recheck the database state by using the ESEUTIL /MH command. [See Fig. 6]

If soft recovery succeeds, the database is brought to a Clean Shutdown state and can be mounted, restoring access to the latest copies of the mailboxes.

However, what happens if soft recovery doesn’t work and ESEUTIL /MH still reports the database in the Dirty Shutdown state?

You can attempt hard recovery by using the ESEUTIL /P command, but be advised that hard recovery “removes” data to attain database consistency and therefore results in data loss. It should be used only when there is no other option; ESEUTIL itself prompts you to accept the data loss before proceeding. Hard recovery is not guaranteed to succeed, and the fact that it was performed is recorded in the database header. If you have a support agreement with Microsoft and ask for assistance after running a hard recovery, Microsoft will not support you, as running it is a breach of the support agreement.

As an alternative, and a more successful way to recover databases without complications, you can use Stellar Repair for Exchange, a third-party tool that repairs corrupted databases and restores Exchange services with minimal business impact and without data loss.

Stellar Repair for Exchange can open any Exchange Server mailbox database, irrespective of the extent of damage or the Exchange version, and recover it with great precision. The application is relatively small, can be installed on any Windows machine, whether Windows 10 or a Windows Server edition, and doesn’t require technical skills to run. The tool can export mailboxes to PST files, to a live Exchange server, or to an Office 365 tenant.

To summarize

High availability is undoubtedly the preferred architecture in Exchange organizations worldwide, as it ensures business continuity. With DAG, high availability can be extended beyond a single datacenter to multiple AD sites for site resilience, which is a dream setup for any Exchange administrator. But like any other system, it is exposed to unanticipated failure scenarios that can lead to extended disruption, which, in the case of Exchange, can mean inordinate email downtime and even data loss.

For instance, an incident such as a server crash can cause outright failure of an HA setup (DAG) and leave the database copies corrupted, putting the administrator in a tight spot. In this case, apart from ESEUTIL, there is not much that can reliably fix the database corruption and mount the database, and hard recovery carries the added risk of data loss. One must also factor in the downtime, as well as the administrative effort and resources needed to restore services. Given that database corruption can happen at any time and for reasons beyond your control, having a third-party Exchange database recovery tool such as Stellar Repair for Exchange is a smart and informed decision. You need the right tools to get back in operation!

Featured image: Shutterstock