Amazon is holding AWS (Amazon Web Services) summits around the world. This week, I attended the San Francisco Summit held at the Moscone Center. In this article, I am going to focus on the big announcements coming from the keynote address. (Read the Day 1 article, where you can learn more about AWS, my key takeaways, and some additional announcements.)

Keynote begins

The AWS keynote address was led by Amazon chief technology officer Werner Vogels, a charismatic man with a lot of stage presence, and plenty of energy to spare. This being my first AWS conference, I was eager to learn how Amazon sells and innovated on its products.

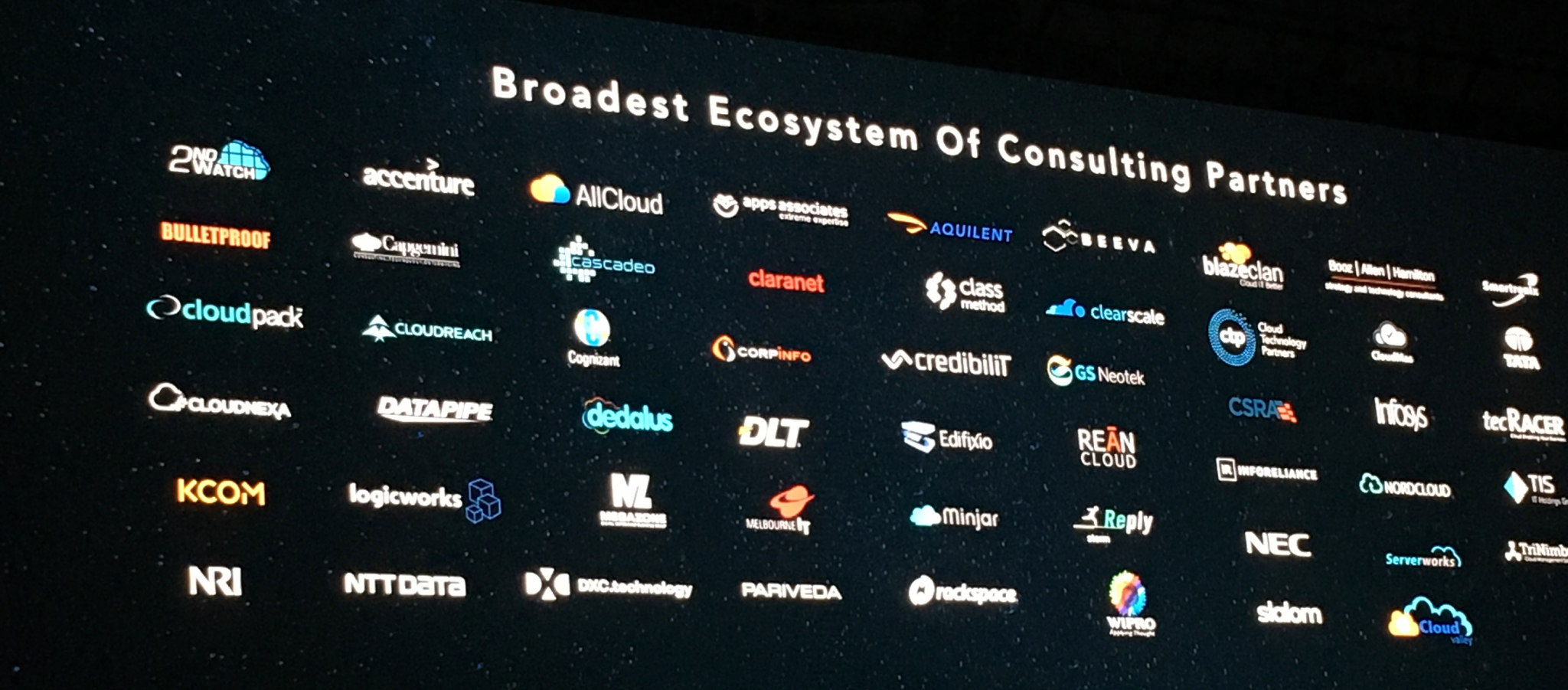

Logo time!

Clearly, Amazon is very proud of its massive ecosystem of customers, partners, and independent software vendors (ISVs). The companies on board with Amazon are a who’s who of household names.

First announcement: SaaS contracts

With so many Software as a Service (SaaS) companies writing their software on the AWS platform and such a large number of customers using the platform, it makes sense that Amazon would want to play a part in building lasting relationships between these parties.

With AWS, you can now visit a marketplace to find solutions to meet your business needs. Rather than contracting with each of these companies individually, you can now create a contract online and it will all be charged via your AWS bill.

Since the bullets in the above image are cut off a bit, here is a summary:

Since the bullets in the above image are cut off a bit, here is a summary:

- Great reliability for SaaS subscriptions (1, 2, 3 years).

- Upgrade or expand contracts at any time.

- Simple APIs make on-boarding easy for ISVs.

- New charges on your AWS bill.

The CEO of Splunk came on stage to announce their inclusion in the marketplace. To view the marketplace, start here.

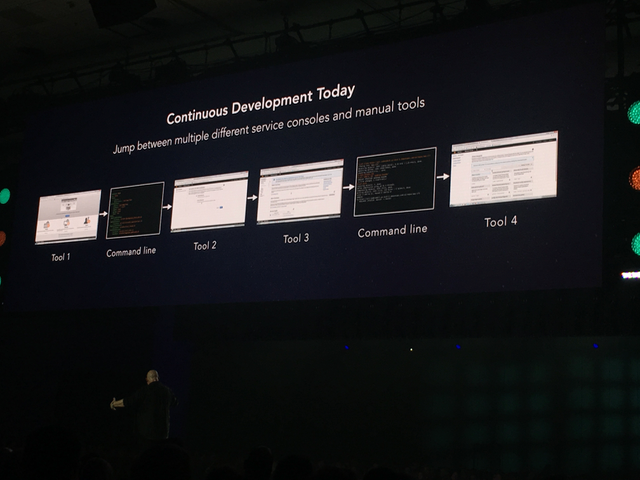

CI/CD Announcement

Amazon refers to the process of designing, building, testing, and releasing code to be the CI/CD process. That stands for continuous improvement/continuous development & deployment. As you can see in the image below, there are many steps to releasing products and updates on AWS.

The image is really pointing out some pain points most developers have when writing code for AWS. I experienced these pain points myself when taking a course at the summit. We developed a fictitious unicorn-hailing app that uses S3, DynamoDB, Cognito, API gateways, and Lambda functions. By the time the app was complete, I counted 12 open tabs and two open terminal windows. That didn’t count all the tabs I opened and closed during the process. Without an IDE (integrated development environment) of their own, it takes a lot of work to design, debug, and manage Amazon AWS code.

Introducing AWS CodeStar. With CodeStar, you can select a set of templates with common design patterns. Once you choose the design pattern, it will create a solution for you to use in Visual Studio, Eclipse, or command line. While excited, some developers pointed out the lack of support for C# (.Net) and common patterns they use, but it was still met with applause.

The CodeStar solution goes further by integrating with AWS services like CodeCommit, CodeBuild, CodePipeline, and CodeDeploy. It also provides integration with Jira for issues tracking. All put together, you have a solution where you can clone your code to a local computer for development work in an IDE, follow a typical Git workflow, and then have your code automatically build onto AWS. In theory, this means you are spending a lot less time working in all sorts of tabs and typing command lines to push your code and get it into QA, test, or production environments.

Serverless computing

It is evident a lot of companies still use virtual machines or Docker containers to run their apps. Amazon is on a mission to move people from these big operating system-dependent application into a serverless architecture. You can read my Day 1 article and this article to learn more on the topic.

To build a serverless architecture, you still need a database, storage, APIs, and more, but it also needs business logic. In what Amazon calls the monolithic app architecture, you run a server with a compiled app that has all the business logic locked up in the operating system.

In the new serverless world, you build functions that represent the business logic and only pay for the use of that function. Amazon calls these Lambda functions. There are challenges with creating Lambda functions because one function may have to call another, and that might have to call another. Rather than hard-coding Lambda functions to call each other, you can create step functions. These step functions are workflows that you build. These workflows determine what Lambda functions get called and when.

As you can see in the image below, there are three types of step functions:

- Sequential steps: Perform one task, wait for it to complete, and then perform the next task. In the image, one function uploads a RAW TIFF image, and then the next function deletes it.

- Parallel steps: Run multiple functions at the same time. In the image, you can see a select image converter function runs, then it spawns three functions that run at the same time. One function converts the image to TIFF, another to JPG, and another to PNG. Steps can also run if/then style statements, which is why that sample shows the step function ending because the image format is not recognized.

- Branching steps: Run certain functions based on a set of criteria. In the image, the process photo step requests one function to get the photo’s metadata, another function to resize the image, and another to process facial recognition.

As you build more and more of these little Lambda functions, it can become unwieldy to find out where a function breaks down. For example, a function might have a bug or times out. Amazon provides X-ray as a solution to help you track down which functions are the cause of the issue and give you an easy-to-read map of the process and where the breakdowns occur.

Big data announcements

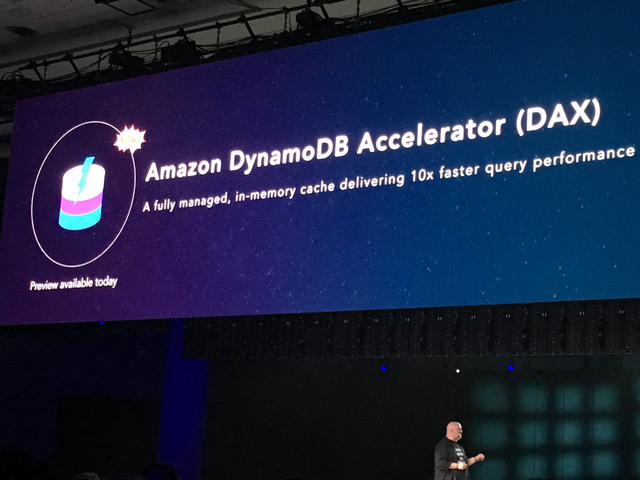

DAX

Amazon continues its push into supporting massive data sets. People call this data at scale or big data, but the idea is you are working with millions or billions of rows of data. Apparently, AWS customers love Amazon’s DynamoDB, which is a NoSQL solution.

While this is very fast, some customers want microsecond response time, and DynamoDB cannot always handle these requests in such a timely manner. That is why Amazon announced Dynamo DB Accelerator (DAX). My understanding is you still use DynamoDB, but DAX allows you to access an in-memory, cached version that is much faster. As you can see in the following image, Amazon claims a 10-times improvement in query performance when you use DAX.

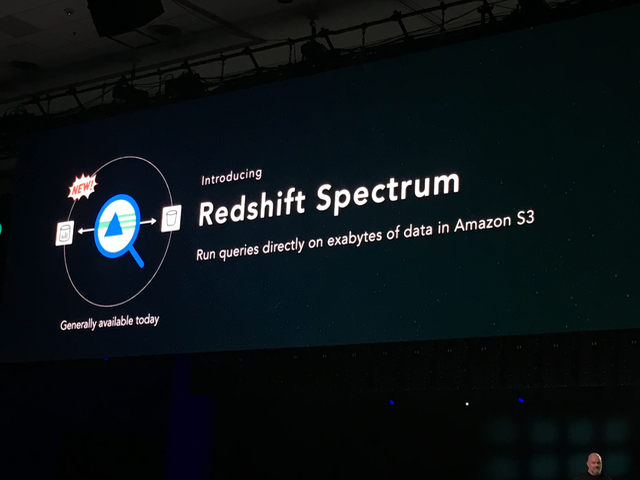

Redshift spectrum

Amazon Redshift is an AWS offering for data warehousing. Some AWS customers store massive data sets in their warehouse, but they will also set up data lakes in Amazon S3. Apparently, running queries on S3 is a serious problem (more on that in a moment), so Amazon announced the general availability of a new product, Redshift Spectrum.

Amazon is looking to address some serious performance issues. If you were to use Amazon Redshift to run a function to join, filter, group, cast, and run a query against exabytes of data on S3 using Hive with 1,000 node clusters, that query would take five years. With Redshift Spectrum, that same query takes only 155 seconds.

Amazon is looking to address some serious performance issues. If you were to use Amazon Redshift to run a function to join, filter, group, cast, and run a query against exabytes of data on S3 using Hive with 1,000 node clusters, that query would take five years. With Redshift Spectrum, that same query takes only 155 seconds.

Other announcements

Wrapping up the keynote was the general availability of Amazon Lex, which allows you to add machine learning, conversational chats, and other capabilities to your apps. Amazon Lex is based on Amazon’s consumer product, Alexa.

Overall, the two-day summit was a worthwhile event. Amazon had some great sessions to help you build apps, improve performance, migrate datacenters, and much more. If you think cloud computing is just a passing fad or is only for a certain type of tech company, I will leave you with this one quote from Werner Vogels:

General Electric is closing 30 of its 34 datacenters as they move their infrastructure to the cloud.

Lead photo credit: Amazon