If you’re in IT, not a day goes by when you don’t hear something about Docker. The technology has been quickly adopted by small startups and by established giants such as Amazon, Microsoft, and ADP. The containerization platform is the darling of Silicon Valley, and everyone is jumping on the Docker bandwagon. But how is Docker handling all the attention? Can it sustain its dream start in the years to come? Can it make inroads into the vast enterprise IT sector?

Docker’s rise

Docker emerged in 2013 and quickly rose to popularity with its flavor of Linux containerization. Containerization existed before Docker was founded, but Docker stood out by offering an integrated interface, packaging an app together with its dependencies, and bringing a whole new level of portability to apps. All of this made containerization a lot easier to use than before. Previously, the biggest limitation was that a containerized application was in practice tied to the system it was built on and could be moved only to hosts running a closely matching OS. By shipping the app and its dependencies as a single image, Docker eased this limitation (containers still share the host’s kernel), and that attracted many companies.

Along with its quick rise came many criticisms, including concerns over security and a lack of tooling, along with an overall sense that Docker was a fad. Docker quickly addressed these issues by listening to its customers and adding features and toolsets that expanded its capabilities. Soon, Docker began to take on workloads from virtual machines, challenging VMware’s dominance.

Distinct from VMs

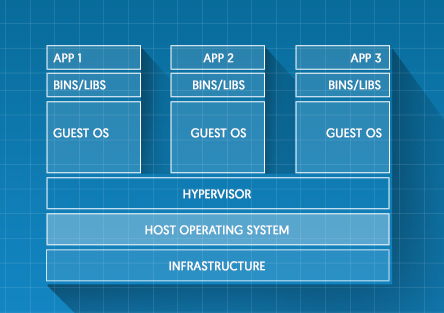

Docker containers are very different from virtual machines (VMs). Containers share the kernel of the underlying host OS, while each VM needs to boot its own instance of a guest OS. Because containers skip that extra OS layer, they and the apps they contain are more scalable and more portable across the delivery pipeline, and even across vastly different cloud environments.

Containerizing legacy apps

Since its release, Docker has gained a foothold in many companies. In a survey conducted by Docker, 80 percent of organizations said Docker is central to their cloud strategy.

Many vendors and analysts have been supporters of bi-modal IT. The concept pits microservices against legacy, monolithic apps by saying that the former belong only in public clouds while the latter belong on on-premises servers. But Docker CEO Ben Golub calls bi-modal IT a “fallacy.” Docker has proved so convenient that companies have increasingly started using it to containerize legacy apps.

In fact, rather than using Docker exclusively for greenfield applications, most organizations fall somewhere between microservices and monolithic apps. It’s these organizations that are implementing Docker and reporting huge improvements in application performance. This is the state of most enterprises, and it is a field where Docker wants to play as well.

Docker wants to blend in

Docker doesn’t want to revolutionize existing infrastructure and apps altogether; it wants to blend into existing workloads and make them more efficient. Most organizations have a mix of apps and infrastructure. Many people assume the picture is black and white, with microservices in the cloud and legacy apps on on-premises servers. In practice, many companies run a hybrid of infrastructures.

“At Docker, we like to talk about ‘incremental revolution.’ It means that you’re starting small, imposing the least amount of change as possible, and allowing yourself to grow flexibly over time.” — Ben Golub.

Orchestrating containers for the enterprise

Orchestration is everything you need to go from a simple containerized development environment to a live system. The physical equivalent is the actual shipping industry: it’s the expansion from one container to a whole system of shipping around the world. This was a key theme of DockerCon 2016, where much of the focus was on how to better orchestrate large numbers of containers. The problem with orchestration has been that it requires either hiring a team of experts, which is costly, or relying on another company’s team of experts, which can lead to lock-in.

To tackle these challenges, Docker has introduced built-in orchestration that can be used even by non-experts. Many companies have been using Google’s Kubernetes to handle orchestration, but Docker’s own tools now look promising. With better orchestration tools, Docker is taking aim at the enterprise market.
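To make the idea concrete, here is a minimal Python sketch of two jobs an orchestrator typically does: spreading a service’s containers across machines, and working out what to start or stop so the running system matches what was asked for. This is an illustration only, not Docker’s or Kubernetes’ actual algorithm; the function names, node names, and service names are all hypothetical.

```python
def place_replicas(service, replicas, nodes):
    """Assign each replica of a service to the currently least-loaded node.

    Hypothetical helper for illustration; real schedulers also weigh
    resources, constraints, and node health.
    """
    load = {node: 0 for node in nodes}
    placement = []
    for i in range(replicas):
        # Pick the node running the fewest containers so far;
        # ties go to the node listed first.
        target = min(nodes, key=lambda n: load[n])
        load[target] += 1
        placement.append((f"{service}.{i + 1}", target))
    return placement


def reconcile(desired, running):
    """Compare desired and running container counts per service.

    Returns only the services that need action: a positive number means
    containers to start, a negative number means containers to stop.
    """
    changes = {}
    for service, want in desired.items():
        diff = want - running.get(service, 0)
        if diff != 0:
            changes[service] = diff
    return changes


if __name__ == "__main__":
    for task, node in place_replicas("web", 5, ["node-a", "node-b", "node-c"]):
        print(task, "->", node)
    print(reconcile({"web": 5, "db": 1}, {"web": 3, "db": 1}))
```

The point of the sketch is that the operator only declares the end state (five copies of `web`, one of `db`); deciding where containers run and what to change is left to the tool, which is what makes orchestration usable without a team of specialists.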

Tighter security

Early on, there were security concerns about using Docker, mostly fueled by the fact that there was very little isolation between containers. Essentially, if one container was compromised, the exploit could spread to other containers and affect the entire system. Virtual machines had been around longer and offered more robust isolation than Docker containers. But Docker has been implementing better security measures, such as image signing and Docker Bench, to give companies a more secure experience.

“The most security-conscious organizations on the planet are now adopting Docker not in spite of security concerns, but to address their security concerns.” — Ben Golub.

Stories from the trenches

Companies like ADP have also said that Docker has shown them a new path to DevOps. It has simplified the process so that developers package apps in containers and Ops ships the containers, with Docker as the common denominator between them. Many developers have also been championing Docker containers because they make handing an app over to Ops a lot more fluid. Gone is the old problem of apps working on the Dev side and then breaking on the QA or Ops side due to system incompatibilities.

At DockerCon 2016, ADP’s CTO Keith Fulton gave an amusing example comparing microservices to bite-sized chicken nuggets. “Everybody likes nuggets,” Fulton said. “No one wants to gnaw on a bone any more. Chicken nuggets are convenient.” ADP, however, runs a lot of monolithic, legacy apps, which Fulton compared to whole chickens. To bridge the gap, the company takes the pieces of code that change most often and containerizes them separately from the larger container that holds the legacy app. This gives ADP the best of both worlds: the functionality of its legacy apps combined with the scale of Docker.

Vendors have become so eager to support Docker that Hewlett Packard Enterprise has even started shipping its servers with Docker preinstalled. Docker has also announced two new integrations, Docker for AWS and Docker for Azure. These integrations enable Docker to leverage the underlying architecture of both AWS and Azure without compromising on portability. Together, these updates give Docker a well-defined focus on the enterprise.

As Docker keeps introducing and refining features, its prospects look bright. Having established a foothold in the enterprise, it is now aiming new features at the varied needs of large organizations. As you think about the future of the data center, pay attention to Docker, and consider how you can leverage it as you build tomorrow’s leading applications.