All the big names in tech are working to find their place in AI (artificial intelligence) and machine learning. Google is investing heavily in everything from image recognition to complex problems in healthcare, manufacturing, and more. In this article, I walk you through Google’s most significant AI and machine learning announcements from the Google I/O 2017 conference.

We are moving from mobile first to AI first. –Sundar Pichai, CEO, Google

TensorFlow

The largest open source machine learning project on GitHub is Google’s TensorFlow, which you can learn more about here. Developers use TensorFlow to do things like predict whether someone has a particular disease, create unique works of art, and much more. The following video will give you a sense of how they are using this year-old technology.

[tg_youtube video_id=”mWl45NkFBOc”]

Announcing New TPUs

I don’t know about you, but starting up my computer sometimes feels like it takes forever. So how, then, can computers process images, understand what they see in a video, and solve major healthcare problems? The answer lies in large-scale, compute-intensive chips and infrastructure.

In the following image, you can see Google CEO Sundar Pichai announcing the new Tensor Processing Units (TPUs). By themselves, these new TPUs are incredibly powerful, but you can string them together into what Google calls a pod, and the combined system provides up to 11.5 petaflops of computing power.

These new TPUs will be available to Google Compute Engine customers. Google’s Fei-Fei Li shared an example in which training a large-scale translation model used to take a full day on 32 of the world’s best GPUs (graphics processing units). That same task now takes only a few hours on one-eighth of a TPU pod.
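
To put those figures in perspective, here is a back-of-the-envelope sketch in Python. The 11.5 petaflops and one-eighth figures are the ones quoted above; the 64 devices per pod comes from Google's announcement rather than from this article, so treat it as an assumption. These are peak numbers, not measured training throughput.

```python
# Back-of-the-envelope arithmetic on the TPU pod figures quoted above.
POD_PETAFLOPS = 11.5   # peak compute of one pod, as quoted in the keynote
DEVICES_PER_POD = 64   # assumption: pod size from Google's announcement, not stated in this article
POD_FRACTION = 1 / 8   # share of a pod used in the translation example


def slice_petaflops(pod_petaflops: float, fraction: float) -> float:
    """Peak compute available in a given fraction of a pod."""
    return pod_petaflops * fraction


eighth_pod = slice_petaflops(POD_PETAFLOPS, POD_FRACTION)
devices_in_slice = int(DEVICES_PER_POD * POD_FRACTION)
per_device_teraflops = POD_PETAFLOPS * 1000 / DEVICES_PER_POD

print(f"One-eighth of a pod: {eighth_pod:.2f} petaflops on {devices_in_slice} devices")
print(f"Per device: about {per_device_teraflops:.0f} teraflops")
```

Under these assumptions, the translation example ran on roughly 1.44 petaflops of peak compute spread over 8 devices, or about 180 teraflops per device.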

We are democratizing AI. –Fei-Fei Li, Chief Scientist, Google Cloud

Announcing the TensorFlow Research Cloud

With all that processing power, opportunities abound for researchers to solve big problems that were previously either out of reach or open only to small, elite groups of people. Google is asking professional researchers and students alike to join its TensorFlow Research Cloud.

The TensorFlow Research Cloud is a network of 1,000 connected TPUs set aside for researchers to work on their projects. Best of all, the Research Cloud is free. Click here to sign up.

AutoML

An emerging area of research in machine learning is to have (get ready for this) machine learning systems teach machine learning systems how to learn. To the best of my (limited) knowledge, creating a machine learning algorithm requires data science skills that mainstream organizations and startups may not have.

As you can see in the following image, you first train the AutoML system so that it knows different animals. The machine learning system then teaches itself, automatically learning how to tell one picture from another.
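
The core idea, an outer system choosing how an inner learner should be set up, can be illustrated with a toy sketch. This is not Google's AutoML method: it is a plain search over one setting of a tiny nearest-neighbor classifier, written in pure Python, and every name in it (`make_data`, `knn_predict`, `search`) is hypothetical. It only shows the shape of the loop: propose a candidate, train and score it, keep the best.

```python
import random


def make_data(n, rng):
    """Toy 1-D dataset: label is 1 when x > 0.5, with 10% label noise."""
    data = []
    for _ in range(n):
        x = rng.random()
        label = int(x > 0.5)
        if rng.random() < 0.1:
            label = 1 - label  # flip the label to simulate noise
        data.append((x, label))
    return data


def knn_predict(train, x, k):
    """Inner learner: majority vote among the k nearest training points."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    votes = sum(label for _, label in nearest)
    return int(votes * 2 > k)


def accuracy(train, val, k):
    """Fraction of validation points the inner learner gets right."""
    correct = sum(knn_predict(train, x, k) == y for x, y in val)
    return correct / len(val)


def search(train, val, candidates):
    """Outer loop: score every candidate setting and keep the best one."""
    scores = {k: accuracy(train, val, k) for k in candidates}
    best_k = max(scores, key=scores.get)
    return best_k, scores


rng = random.Random(0)  # fixed seed so runs are repeatable
train, val = make_data(200, rng), make_data(100, rng)
best_k, scores = search(train, val, [1, 3, 5, 9, 15])
print(f"best k = {best_k}, validation accuracy = {scores[best_k]:.2f}")
```

A real system of this kind would search over whole model designs rather than a single number, and would use a learned strategy instead of trying every candidate, but the feedback loop is the same.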

Machine learning on your phone

Google’s powerful machine learning and AI systems are better because people store their photos, emails, searches, documents, and much more of their lives on the Google platform. Google can make use of this information to teach machines how to understand data better. As I mentioned earlier in this article, that powerful AI can do things like help in the healthcare market, but there are little things in life it can help with as well.

Google Lens

Back in the day, when you took a picture on your phone, it knew things like the size, color, and location of the image. Later, phones got smarter and could recognize faces, and then even recognize who those people are and the setting of the photo; for example, there’s John in a room with a TV in the background.

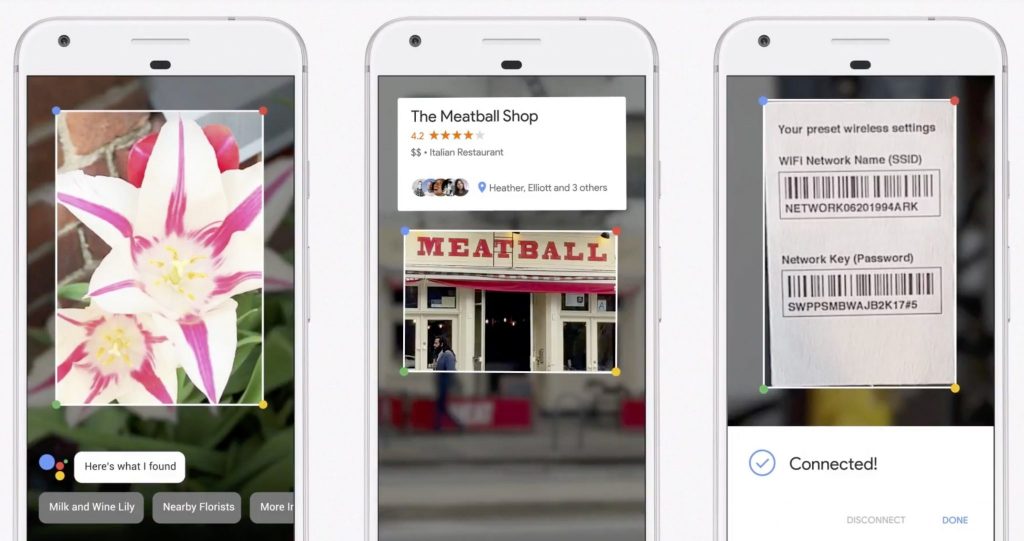

In the following image, you can see that Google Lens is taking photo and video recognition to the next level. If you take a picture of a flower, it will recognize what type of flower it is. If you point your camera at a store, Google Lens will recognize the store and pop up a card with reviews, ratings, contact information, and more. In another very cool example, Google showed how you can snap a picture of the back of a wireless router and automatically connect to it using its network ID and password.

Google Assistant

If Google Lens can give you insight into the pictures you are taking, Google Assistant brings context and actions. There are a lot of Google Assistant features that I will not cover here, so I will focus on just one productivity demonstration.

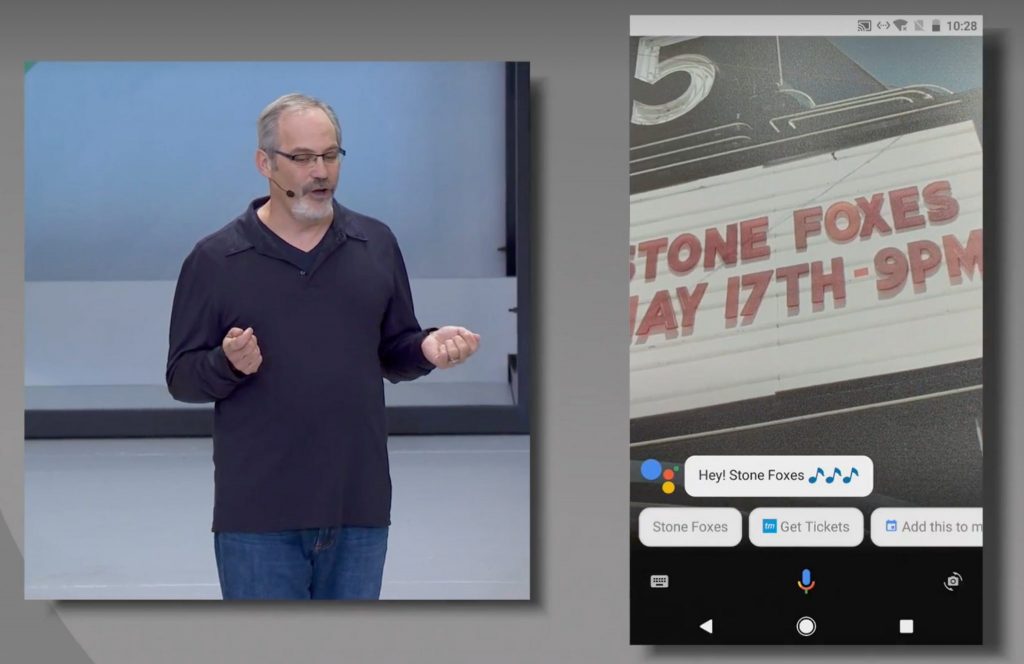

In the following example, a person points their camera at a marquee. Google Assistant recognizes the text, spelled out in giant block letters, in real time. Knowing the text and the person's location, Google Assistant understands that it is a concert venue. Finally, it offers to purchase tickets or add the event to the calendar. That whole demonstration lasted all of two seconds.

Democratizing AI

Although the message is not unique to Google, it is evident that the next wave of innovation and investment will be in artificial intelligence and machine learning. Of course, mobile, web, and desktop computing are not dead, but their cycle of innovation is now more evolutionary than revolutionary.

To that end, there was one last big announcement: the inclusion of machine learning chips in next-generation phones. It will be interesting to learn more over the course of this year and next.

Google didn’t say much about its new Android O, and did not even reveal what the ‘O’ stands for…

There were a lot of announcements for Android Go, VR/AR headsets, and much more, launching in “Fall” of this year, so I suppose we will learn the name as we get closer to the August time frame. Just a guess though.