A graphics processing unit (GPU) has long been a crucial companion to the CPU, or central processing unit as we know it. It is sort of like Optimus Prime to the Transformers, the F-150 to the Ford vehicle lineup, or caramel to a Snickers bar.

It’s the component that enables sophisticated graphics and video in applications such as video games. It started out as a peripheral that supported the CPU, but today the GPU is a critical part of computing machinery in its own right, and that does not look like it is going to change any time soon. Kind of like the plug that lets you plug in the machine for power purposes! Yes, that is pretty important!

For highly parallel workloads, a GPU can theoretically deliver more than 100 times the throughput of a CPU. The best analogy: tasked with reading a thousand-page book, a CPU will start from page 1 and read until the end. A GPU will, instead, divide the book into a thousand pages and devour them all at once (probably even faster if that book was written by Taylor Caldwell or James A. Michener, but that is for another day).
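The book analogy can be sketched in a few lines of Python. This is only an illustration of the idea of splitting work into independent pieces: it runs on CPU threads, not on a GPU, so it shows the decomposition rather than any real speedup. The `book` contents and the function names are made up for the example.

```python
from concurrent.futures import ThreadPoolExecutor


def count_words(page: str) -> int:
    """'Read' one page by counting its words."""
    return len(page.split())


def read_sequentially(pages: list[str]) -> int:
    """CPU style: start at page 1 and work through to the end."""
    total = 0
    for page in pages:
        total += count_words(page)
    return total


def read_in_parallel(pages: list[str], workers: int = 8) -> int:
    """GPU style (in spirit): hand every page out as an independent task."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_words, pages))


# A hypothetical thousand-page book, eight words per page.
book = [f"page {n} has a few words on it" for n in range(1, 1001)]

sequential_total = read_sequentially(book)
parallel_total = read_in_parallel(book)
```

Both approaches arrive at the same answer. The trick only works because no page depends on any other page, which is the property that makes a workload a good fit for a GPU.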

GPUs enabling the future of computing

GPUs are at the core of high-performance computing in artificial intelligence, self-driving cars, and many other futuristic computing applications. When used in conjunction with a CPU, GPUs can accelerate analytics, deep learning, and machine learning, enabling applications for search engines, robotics, autonomous vehicles, video communications, and a lot more.

For the moment though, let’s focus on the tremendous potential GPU-powered computing has for the enterprise, especially now that it integrates so well with existing cloud infrastructure.

GPU and cloud computing – the merger of two super-technologies

GPU computing is a platform that can turn big data into never-before-seen (almost superhuman) intelligence. GPUs are at the center of the computational engines that are driving the era of AI.

Most importantly, the power of GPU-accelerated computing is now being brought together with data in the cloud, transforming the present and future of data analytics, machine learning, deep learning, and big data. GPU-accelerated computing is available on all the major cloud platforms, including Amazon Web Services, Microsoft Azure, IBM Cloud, and the Google Cloud Platform.

The power of GPU computing for your cloud hosted data

In any organization, several gigabytes’ worth of data are generated every week. Sensor logs, images, text messages, videos, transactional records, interface-related files, generated reports, and more: there are so many sources producing ridiculous amounts of data every day.

When that data lives in the cloud but the GPUs sit in a separate data center, the time taken to transfer the data from one to the other becomes a significant roadblock for deep learning. Taking GPU computing to the cloud bridges this gap: the data sets and the computing power sit much closer together in the ecosystem, helping your organization get insightful results from futuristic data crunching.
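To get a feel for why data location matters, here is a rough back-of-the-envelope sketch. The data set size and both link speeds are assumed numbers picked for illustration, not measurements from any provider, and the estimate ignores protocol overhead and congestion.

```python
def transfer_hours(dataset_gb: float, link_gbps: float) -> float:
    """Hours needed to move dataset_gb gigabytes over a link_gbps gigabit/s link."""
    gigabits = dataset_gb * 8          # 1 byte = 8 bits
    seconds = gigabits / link_gbps
    return seconds / 3600


# Hypothetical 10 TB data set.
# Assumption: 1 Gbit/s between the cloud and a remote data center.
hours_over_wan = transfer_hours(dataset_gb=10_000, link_gbps=1)
# Assumption: 25 Gbit/s between storage and GPU instances in the same cloud.
hours_in_cloud = transfer_hours(dataset_gb=10_000, link_gbps=25)
```

Under these assumptions the remote transfer takes roughly 22 hours, while the in-cloud transfer takes under an hour, before a single GPU has done any work.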

Strategic investment to achieve long term cost savings

Traditional deep learning workloads necessitate a significant uptime investment in hundreds of commodity CPU instances. However, these instances can be replaced by far fewer GPU-accelerated computing nodes, with up to 8 GPUs per instance.

This can reduce the long-term cost of GPU-powered cloud computing by as much as 70%. Also, with flexible pay-per-use pricing options, close to 100% uptime guarantees, and scalability, GPU computing in the cloud becomes a powerful choice for enterprises that store a lot of data in the cloud. Advanced GPUs deliver predictable performance, along with benefits such as data integrity, high bandwidth driven by GPUDirect RDMA, and P2P communication with low inter-GPU latency.

Automated provisioning for eliminating job queues

GPU-accelerated HPC clusters can be activated within minutes, rather than the days or weeks generally seen with traditional provisioning. This is achieved by launching virtual machine images that come with preconfigured drivers. Whether you’re looking to meet steadily growing computing needs or need to ramp up computing for short peak periods, GPU-accelerated computing is scalable enough to meet both requirements. Kind of like a delicious cheeseburger with onions and tomatoes can fill up anyone! Well, unless you are Shaq, he may need two!

Transparent integration of GPUs in existing IT infrastructure

In an ideal scenario, users should not even be aware that the applications they’re using and the data they’re accessing are all served from the cloud. This means that the first challenge facing organizations is to seamlessly incorporate cloud-based GPUs into the existing IT ecosystem. This integration needs to be unobtrusive; that is, on-premises clusters need to consume cloud GPU computing as a logical extension of themselves.

Understanding the limitations for latency-intolerant high-performance computing applications

Latency can be a limiting factor, although it’s not directly linked to GPUs in the cloud. This can be countered to some extent by intelligently staging data for high-performance computing. To minimize the effects of latency, options such as persistent storage (Amazon S3) and archival/backup storage (such as Amazon S3 Glacier) can be leveraged.

There will always be HPC applications that are latency intolerant; for these, GPUs in the cloud are not yet the ideal acceleration solution. Applications where communication and computation don’t need to be interspersed are perfect for being accelerated via cloud-based GPUs.
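A toy model makes the difference between the two kinds of application concrete. Every number here (the one-hour CPU job, the 50x GPU speedup, the 50 ms round trip) is an assumption chosen for illustration, and the model deliberately assumes communication and computation never overlap.

```python
def run_time_s(compute_s: float, gpu_speedup: float,
               round_trips: int, latency_s: float) -> float:
    """Accelerated compute time plus time spent waiting on the network."""
    return compute_s / gpu_speedup + round_trips * latency_s


# Hypothetical job that needs one hour of CPU time on-premises.
# Batch style: ship the data, crunch it, collect the results.
batch = run_time_s(compute_s=3600, gpu_speedup=50,
                   round_trips=10, latency_s=0.05)

# Chatty style: the same work, but with a network round trip
# interspersed between a million small computation steps.
chatty = run_time_s(compute_s=3600, gpu_speedup=50,
                    round_trips=1_000_000, latency_s=0.05)
```

In this sketch the batch job finishes in a little over a minute, while the chatty job spends so long waiting on round trips that it ends up slower than the original hour on the CPU.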

The way forward for developers to help organizations benefit from GPU computing on the cloud

Writing code for GPU processing is not easy (writing the alphabet is much easier!). Porting existing code to leverage the power of GPUs is also a tough task for businesses. Developers need robust support in the form of toolsets for GPU-focused programming models such as CUDA and OpenCL (native programming) and OpenACC (directive-based).

Compilers, profilers, assemblers, debuggers, and the like, along with the supporting APIs and libraries, will need to mature before in-house developers can optimize existing cloud apps to leverage the power of GPUs in the cloud. Presently, developers often need to make core system modifications, including changes at the Linux kernel level, to enable the use of GPUs for computation acceleration.

Key takeaways

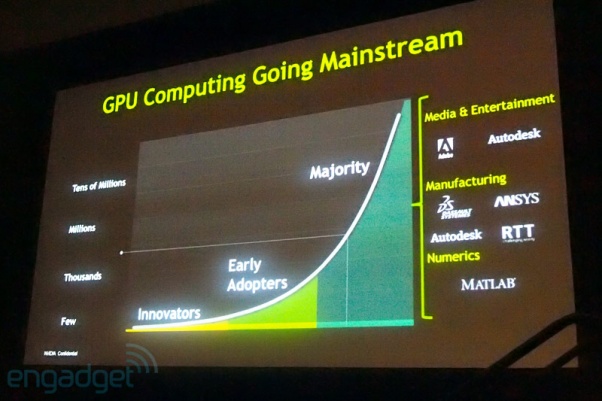

GPU-accelerated computing has already made significant inroads in verticals such as computational chemistry, data science, imaging and computer vision, bioinformatics, defense, computational finance (can we lower taxes!?), and machine learning.

As the number and breadth of applications that can benefit from GPU acceleration grow, and the cost of acquiring GPU computing comes down, enterprises will need to make the strategic decision to ride the wave of GPU computing. For now, data-heavy and application-heavy enterprises with massive digital transformation budgets are embracing GPU-accelerated computing and beginning to reap the benefits.

Just like the Raiders are reaping the benefits by leaving that failed city of Oakland! Vegas, here we come!

Photo Credits: AWS