Machine learning lets cognitive applications learn from, and interact naturally with, the environment around them. It already helps spot patterns in the purchase habits of millions of Internet users, with applications in Amazon's product suggestions and several advertising engines. Implemented correctly, it lets organizations build insights and use them to grow revenue and profitability. Machine learning is well suited to scanning gigabytes or terabytes of data for patterns, and the resulting output can be deployed on cloud computing applications, embedded systems, analytics platforms, and the like.

IBM machine learning for z/OS


IBM machine learning is a solution that can be used to extract value from enterprise data. The solution assists organizations in quickly absorbing and transforming data to create, manage, and deploy self-learning behavioral models.

A typical application would work like this:

- First, a model would be created. The model holds the code that describes how patterns are searched for and what actions are taken when a pattern is found in the input data. For instance, suppose IBM machine learning is being used to analyze wireless digital signals from unknown sources in the environment. These could be WiFi signals, cellular signals, digital radio signals, and the like. The model created with IBM machine learning would contain the code that runs to carry out the learning.

For instance, the model's logic could follow steps like these:

- First, read one complete transmission of the digital signal.

- Categorize the signal against the already known patterns. If it matches none of them, categorize it as a fresh pattern; otherwise, tag the signal data according to the known pattern it matches.

- Store the signal data as needed.

- Go back to the first step and read the next signal, repeating the process for as long as the model runs.

- Next, IBM machine learning would automatically refine the model as processing continues. As digital signal data is read, its patterns are categorized and processing moves on to the next signal. This is a typical adaptive, self-learning system: the more data it reads and processes, the more knowledgeable it becomes.

- Occasionally, the model would be stopped so that manual adjustments can be made. Once those adjustments are complete, IBM machine learning would resume running the model.
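
The read, categorize, and store loop from the steps above can be sketched in Python. This is only a minimal illustration and not code that IBM machine learning generates: the two-byte preamble used as a signature, the `fresh-` label format, and the names `categorize` and `run_model` are all assumptions made for the example.

```python
PREAMBLE_LEN = 2  # assumption: the first two bytes identify the signal type


def categorize(transmission: bytes, known: dict) -> tuple:
    """Return (label, is_fresh) for one transmission, learning fresh patterns."""
    key = transmission[:PREAMBLE_LEN]
    if key in known:
        # Known pattern: tag the signal with its existing label.
        return known[key], False
    # Fresh pattern: give it a label and remember it for later signals.
    label = f"fresh-{key.hex()}"
    known[key] = label
    return label, True


def run_model(transmissions, known: dict) -> list:
    """Read each transmission, categorize it, and store the tagged result."""
    store = []
    for transmission in transmissions:                      # read one transmission
        label, is_fresh = categorize(transmission, known)   # categorize it
        store.append({"label": label, "fresh": is_fresh, "data": transmission})
    return store                                            # loop covers "go back"


# Hypothetical starting knowledge and input stream.
known_patterns = {b"\xaa\x01": "wifi", b"\xaa\x02": "cellular"}
stream = [b"\xaa\x01beacon", b"\xbb\x09burst", b"\xaa\x02page", b"\xbb\x09again"]

for record in run_model(stream, known_patterns):
    print(record["label"], record["fresh"])
```

The second `\xbb\x09` transmission is no longer reported as fresh, because the first one added its signature to the known patterns. That is the "learning" in this toy version; a real model would use far richer features than a fixed preamble.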

Features

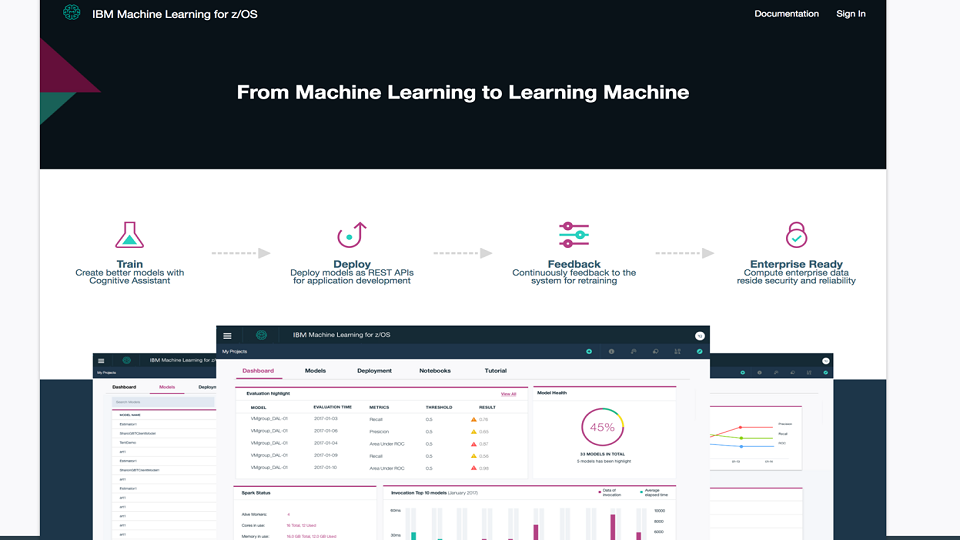

Simplify model creation

Models can be built and deployed through the visual model builder, using the wizards provided, or through the integrated notebooks. Because IBM machine learning greatly simplifies the process of creating a model, data scientists and developers can focus on model quality rather than on process complexity. Creating a model is as simple as one, two, three: define the data sources, define the steps, and choose the target language, and your initial model is all set.

Easily manage models

The dashboard provides a health check across all the enterprise models, offering insight into overall model performance and a quick view of the models that need to be retrained. It gives you one convenient place to check on your enterprise models. Model performance is reported through standard operational parameters, including patterns learnt, input data processed, and the like.

Easily deploy models

After creation, models can be deployed immediately inside the provided framework. The RESTful APIs allow application developers to incorporate behavioral models into their code. Because the framework handles deployment, deploying a model is easy and there is no need to deal with low-level details.
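
To give a feel for what calling a deployed model over REST might look like from application code, here is a hedged Python sketch. The endpoint URL, the `input` payload shape, and the feature names are hypothetical placeholders, not the documented IBM machine learning API; consult the product documentation for the real paths and authentication.

```python
import json
import urllib.request

# Hypothetical endpoint: a placeholder, not a documented IBM URL.
SCORING_URL = "https://ml.example.invalid/v1/models/signal-classifier/score"


def build_scoring_request(features: dict, url: str = SCORING_URL) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request asking a model to score input."""
    body = json.dumps({"input": features}).encode("utf-8")  # assumed payload shape
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_scoring_request({"frequency_mhz": 2412.0, "bandwidth_mhz": 20.0})
print(request.get_method(), request.full_url)

# Sending is left out on purpose. With a real endpoint it would look like:
#   with urllib.request.urlopen(request) as response:
#       result = json.load(response)
```

Keeping request construction separate from sending makes the call easy to inspect and test without network access.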

Ensure model accuracy

Data engineers and scientists can schedule continuous reevaluations on new sets of data to monitor the accuracy of the model over time and receive alerts whenever performance drops. Accuracy is measured by how closely the calculated values match the actual values.
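
The idea of repeated reevaluation with alerts can be sketched as follows. This is an illustration under assumptions, not the product's scheduler: accuracy is taken to be the fraction of calculated labels that equal the actual labels, and the 0.8 alert threshold and function names are invented for the example.

```python
def accuracy(predicted, actual) -> float:
    """Fraction of predictions that exactly match the actual values."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        raise ValueError("cannot compute accuracy on an empty data set")
    matches = sum(p == a for p, a in zip(predicted, actual))
    return matches / len(actual)


def evaluate_rounds(rounds, threshold: float = 0.8) -> list:
    """Score each evaluation round and flag those that fall below the threshold.

    `rounds` is an iterable of (predicted, actual) pairs, one per round.
    Returns a list of (round_number, accuracy, alert) tuples.
    """
    report = []
    for number, (predicted, actual) in enumerate(rounds, start=1):
        score = accuracy(predicted, actual)
        report.append((number, score, score < threshold))
    return report


# Two hypothetical evaluation rounds on new data.
rounds = [
    (["wifi", "radio", "wifi", "radio"], ["wifi", "radio", "wifi", "radio"]),
    (["wifi", "wifi", "wifi", "radio"], ["wifi", "radio", "radio", "radio"]),
]
for number, score, alert in evaluate_rounds(rounds):
    print(f"round {number}: accuracy={score:.2f} alert={alert}")
```

A round that triggers an alert is a natural candidate for retraining the model on the newer data.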

Software requirements

The z/OS component requires IBM z/OS Version 2.1 or later. The Linux component requires Red Hat version 7.2 or later, Docker, and Kubernetes 1.4.5 or later acting as the Docker cluster manager.

Hardware requirements

The hardware requirements include IBM z13 or zEnterprise EC12 servers running z/OS version 2.1 (5650) or later. The LPAR where the software will operate must be allocated four or more zIIP processors, one general-purpose CPU, and 100 GB or more of memory. The Linux component needs an x86 virtual environment and hardware.

How to use IBM machine learning to complete model validation

In the example discussed earlier, once the model has run for a certain number of cycles, it will have accumulated some system knowledge. By then, it will have processed a number of input data sets and produced a categorization for the input signal data, and it will have saved the processed data in memory for future reference. At this point, model validation can be initiated.

The first step in model validation is to collate all the data related to the digital signals in the environment. Once collated, that data is loaded into memory by IBM machine learning. Next, IBM machine learning compares the actual data with the categorization data calculated by the model. This comparison completes the validation exercise, in which the model's efficiency, accuracy, and ability to learn are assessed.
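
The comparison between the actual data and the model's categorization can be pictured with a small Python sketch. It is a generic confusion-count approach chosen for illustration, not a description of how IBM machine learning performs validation internally; the labels and the `validate` function are hypothetical.

```python
from collections import Counter


def validate(actual, predicted):
    """Compare actual labels with the model's labels.

    Returns (confusion, per_category): `confusion` counts each
    (actual, predicted) pair, and `per_category` gives, for every actual
    label, the fraction of its signals the model categorized correctly.
    """
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    confusion = Counter(zip(actual, predicted))
    per_category = {}
    for label in sorted(set(actual)):
        total = sum(n for (a, _), n in confusion.items() if a == label)
        per_category[label] = confusion[(label, label)] / total
    return confusion, per_category


# Hypothetical collated actuals and the model's stored categorization.
actual = ["wifi", "wifi", "cellular", "radio", "radio", "radio"]
predicted = ["wifi", "cellular", "cellular", "radio", "radio", "wifi"]

confusion, per_category = validate(actual, predicted)
for label, rate in per_category.items():
    print(f"{label}: {rate:.2f}")
```

Breaking the result down per category shows where the model is weak (here, half of the WiFi signals are mistaken for cellular), which a single overall accuracy figure would hide.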

IBM machine learning offers a capable platform on which to implement AI- and machine-learning-based systems. It comes with a number of supporting frameworks, along with support for creating the models.

Photo credit: IBM