Performance monitoring is a complex subject, and in some ways more of an art than a science. It can be daunting to be confronted with over a thousand performance counters to choose from. Which ones are important to monitor on a regular basis, and which can be largely ignored? A lot depends on the role of the system you want to monitor and whether you’re talking about capacity planning, ensuring availability, scaling upwards, monitoring for possible problems, or troubleshooting issues that have arisen. While the basic procedure for using the Performance console has been covered previously on WindowsNetworking in Andrew Tabona’s article Windows 2003 Performance Monitor, I thought it might be useful to list a few key counters that administrators may want to monitor as far as general server health is concerned. So here are some of my top recommended perfmon counters, in no particular order, organized around five questions you might ask yourself concerning the health of your machines.

Is Your Server Available?

Availability means your system or application is up and running, and one way of determining the availability of your system is to view the System\System Up Time counter, which tells you how many seconds it’s been since your server last rebooted. The easiest way to view this counter is in report view, which shows the actual numerical value of the counter in elapsed seconds. A better way, however, is to create a performance counter log and track this counter in the background so you can review it when you need to generate your month-end report.

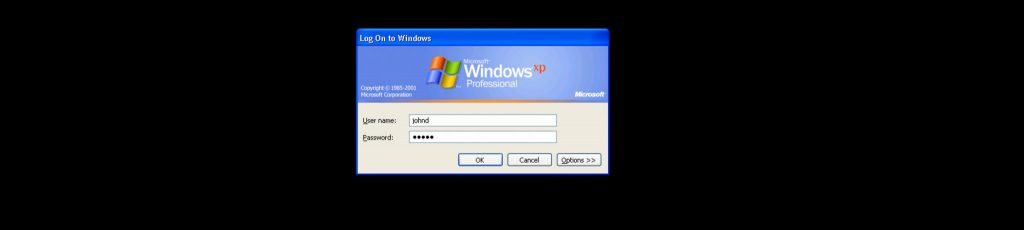
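Because System\System Up Time is reported in raw seconds, a small conversion can make a logged value easier to read in a report. The following is only a minimal sketch in Python; the function name `format_uptime` and the sample value are hypothetical and not part of perfmon.

```python
def format_uptime(seconds: float) -> str:
    # Convert a System\System Up Time sample (elapsed seconds since boot)
    # into days, hours, minutes, and seconds.
    total = int(seconds)
    days, rem = divmod(total, 86400)   # 86400 seconds per day
    hours, rem = divmod(rem, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{days}d {hours}h {minutes}m {secs}s"


# Hypothetical sample value, as it might be read from report view.
print(format_uptime(1_234_567))  # 14d 6h 56m 7s
```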

If you want to drill down even further, you can monitor uptime for any process running on your machine using the Process(instance)\Elapsed Time counter, which tells you how long that particular process has been running. For example, Process(winlogon)\Elapsed Time will tell me how long it’s been since the Winlogon process started running on my machine, and this should normally be a few seconds less than System\System Up Time since Winlogon starts running during the boot process. You can of course also use the Process(instance)\Elapsed Time counter to track the processes associated with specific applications and services, and so monitor their availability. Be careful, however: some services are designed to start and stop under certain conditions, while other services are embedded in service host processes (svchost.exe), and you need to first identify which host process contains the service you want to monitor.

How Busy Is It?

A server that’s too busy may be unable to satisfactorily respond to client requests. That translates into unhappy users and let’s face it, an important aspect of your job as an administrator is to ensure a satisfactory “experience” for the end-users you support. The simplest measure of a system’s busyness is Processor(_Total)\% Processor Time, which measures the total utilization of your processor by all running processes. Note that if you have a multiprocessor machine, Processor(_Total)\% Processor Time actually measures the average processor utilization of your machine (i.e. utilization averaged over all processors).

If you’re monitoring this counter and it’s running at or near 100% for extended periods, you should drill down to the process level by examining the Process(instance)\% Processor Time counter for various process instances on your machine. For example, on an IIS web server you might track Process(inetinfo)\% Processor Time, while on an Exchange server a good counter to watch is Process(store)\% Processor Time, and so on. High processor utilization isn’t always a sign of a problem, however. For example, when a backup job is running it’s typical for processor utilization to hit high levels for the duration of the backup, especially if the backup program is encrypting or compressing information before writing it to tape. In fact, if your server typically runs at around 70% or 80% processor utilization, this is normally a good sign and means your machine is handling its load effectively and is not underutilized. Average processor utilization of around 20% or 30%, on the other hand, suggests your machine is underutilized and may be a good candidate for server consolidation using Virtual Server or VMware.
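When reviewing a counter log, one way to separate a brief spike from utilization that stays high "for extended periods" is to look for a long run of consecutive high samples. The sketch below illustrates the idea in Python. The cut-offs (`threshold=95.0`, `min_consecutive=10`) and the sample data are assumptions chosen for illustration only, and should be tuned to your own logging interval and workload.

```python
def longest_run_at_or_above(samples, threshold):
    # Length of the longest run of consecutive samples >= threshold.
    longest = current = 0
    for value in samples:
        if value >= threshold:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


def is_sustained_high(samples, threshold=95.0, min_consecutive=10):
    # threshold and min_consecutive are illustrative assumptions,
    # not values defined by perfmon.
    return longest_run_at_or_above(samples, threshold) >= min_consecutive


# Hypothetical Processor(_Total)\% Processor Time samples, one per log interval.
brief_spike = [35, 40, 98, 99, 97, 42, 38]
saturated = [96, 99, 100, 98, 97, 99, 100, 98, 99, 97, 96]

print(is_sustained_high(brief_spike))  # False
print(is_sustained_high(saturated))    # True
```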

Another thing you can do to investigate high processor utilization is to break it down into Processor(_Total)\% Privileged Time and Processor(_Total)\% User Time, which respectively show processor utilization in kernel mode and in user mode on your machine. If kernel-mode utilization is high, your machine is likely underpowered, as it’s too busy handling basic OS housekeeping functions to be able to effectively run other applications. And if user-mode utilization is high, it may be that your server is running too many roles, and you should either beef up the hardware by adding another processor or migrate an application or role to another box.

If your machine is running several applications or handles several server roles on your network, another way to measure busyness is to measure processor contention, which is an indication of how different threads are fighting for the attention of the processors on your machine. If too many threads are contending for use of the same processor, the requests by these threads get queued up, and the System\Processor Queue Length counter gives an indication of how many threads are waiting for execution. If this counter is consistently higher than around 5 when processor utilization approaches 100%, this is a good indication that there is more work (active threads) ready for execution than the machine’s processors are able to handle. Note that this is not a hard and fast indicator, however, because some services like IIS 6 pool and manage their own worker threads, so on a busy web server, for example, you would want to look at other counters like ASP\Requests Queued or ASP.NET\Requests Queued as well. Furthermore, the larger the number of active services and applications running on your server, the busier the processor queue will normally be, so on a multi-role server running near 100% utilization, contention may only be a significant factor once System\Processor Queue Length exceeds something like 10 instead of the 5 mentioned previously.
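The queue-length rule of thumb can be written as a small check over paired samples of processor utilization and queue length. This is a rough sketch, not a definitive test: the function name, the 90% cut-off used for "approaching 100%", and the 80% fraction used for "consistently" are all assumptions made for illustration.

```python
def contention_suspected(samples, queue_limit=5, busy_percent=90.0, min_fraction=0.8):
    # samples: (cpu_percent, queue_length) pairs taken at the same instants from
    # Processor(_Total)\% Processor Time and System\Processor Queue Length.
    # busy_percent and min_fraction are illustrative assumptions.
    busy_queues = [queue for cpu, queue in samples if cpu >= busy_percent]
    if not busy_queues:
        return False  # the processor was never busy enough for the rule to apply
    over_limit = sum(1 for queue in busy_queues if queue > queue_limit)
    return over_limit / len(busy_queues) >= min_fraction


# Hypothetical paired samples.
samples = [(97, 8), (99, 12), (98, 7), (45, 1), (96, 9)]

print(contention_suspected(samples))                  # single-role server, limit of 5
print(contention_suspected(samples, queue_limit=10))  # multi-role server, limit of 10
```

With these sample values the first call reports contention and the second does not, which mirrors the point that a busier multi-role server tolerates a longer queue.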

Is Hardware Functioning Properly?

There are a couple of perfmon counters you can track to check that your machine’s hardware devices are functioning properly. One of these is System\Context Switches/sec, which measures how frequently the processors switch from one thread to another, including switches between user and kernel mode to handle a request from a thread running in user mode. The heavier the workload running on your machine, the higher this counter will generally be, but over the long term its value should remain fairly constant. If this counter suddenly starts increasing, however, it may be an indication of a malfunctioning device, especially if you are seeing a similar jump in the Processor(_Total)\Interrupts/sec counter on your machine. You may also want to check the Processor(_Total)\% Privileged Time counter and see if it shows a similar unexplained increase, as this may indicate problems with a device driver that is causing an additional hit on kernel-mode processor utilization. In this case you can drill down and maybe find the culprit by examining the Process(instance)\% Processor Time counter for each process instance running on your machine. This won’t directly tell you which driver is utilizing processor time, but it may indicate which calling application is indirectly causing the problem and may help you troubleshoot the issue further.

If Processor(_Total)\Interrupts/sec does not correlate well with System\Context Switches/sec, however, your sudden jump in context switches may instead mean that your application is hitting its scalability limit on your particular machine, and you may need to scale out your application (for example by clustering) or possibly redesign how it handles user-mode requests. In any case, it’s a good idea to monitor System\Context Switches/sec over a period of time to establish a baseline for this counter. Once you’ve done this, create a perfmon alert that will trigger when the counter deviates significantly from its observed mean value.
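To choose a threshold for such an alert, you can compute the mean and spread of the logged baseline and decide how far from the mean counts as "significant". The sketch below uses Python's standard `statistics` module. Treating a deviation of more than three standard deviations as significant (`k=3.0`) is an assumption made here for illustration, and the sample values are hypothetical.

```python
from statistics import mean, stdev


def build_baseline(samples):
    # Mean and sample standard deviation of logged System\Context Switches/sec values.
    return mean(samples), stdev(samples)


def deviates_significantly(value, baseline_mean, baseline_stdev, k=3.0):
    # k = 3.0 is an illustrative assumption for what "significantly" means.
    return abs(value - baseline_mean) > k * baseline_stdev


# Hypothetical baseline samples gathered during normal operation.
baseline = [4000, 4200, 3900, 4100, 4050, 3950]
avg, spread = build_baseline(baseline)

print(deviates_significantly(4100, avg, spread))  # False: within normal variation
print(deviates_significantly(9000, avg, spread))  # True: worth an alert
```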

Got Enough RAM?

The Memory\Pages/sec counter indicates the number of paging operations to disk during the measuring interval, and this is the primary counter to watch for signs that your server may not have enough RAM to meet its needs. A good idea here is to configure a perfmon alert that triggers when the number of pages per second exceeds 50 per paging disk on your system. Another key counter to watch here is Memory\Available Bytes; if this counter is greater than 10% of the actual RAM in your machine, then you probably have more than enough RAM and don’t need to worry.
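As a worked example of the paging threshold, the sketch below reads "50 per paging disk" as 50 pages per second multiplied by the number of disks that hold a paging file. That interpretation, along with the function names and sample numbers, is an assumption made for illustration.

```python
def pages_per_sec_limit(paging_disks, per_disk_limit=50):
    # Alert threshold for Memory\Pages/sec, assuming the limit scales
    # linearly with the number of disks holding a paging file.
    return paging_disks * per_disk_limit


def paging_alert(pages_per_sec, paging_disks):
    return pages_per_sec > pages_per_sec_limit(paging_disks)


# Hypothetical server with paging files on two disks: the limit is 100 pages/sec.
print(pages_per_sec_limit(2))  # 100
print(paging_alert(120, 2))    # True
print(paging_alert(80, 2))     # False
```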

You should do two things with the Memory\Available Bytes counter: create a performance log for this counter and monitor it regularly to see if any downward trend develops, and set an alert to trigger if it drops below 2% of the installed RAM. If a downward trend does develop, you can monitor Process(instance)\Working Set for each process instance to determine which process is consuming larger and larger amounts of RAM. Process(instance)\Working Set measures the size of the working set for each process, which indicates the number of allocated pages the process can address without generating a page fault. A related counter is Memory\Cache Bytes, which measures the working set for the system, that is, the number of allocated pages kernel threads can address without generating a page fault.
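The two Memory\Available Bytes thresholds (more than 10% of RAM is comfortable, less than 2% should raise an alert) can be combined into one small classification. This is a minimal sketch: the function name and the "watch" label for the range in between are assumptions, and the sample sizes are hypothetical.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2


def classify_available_memory(available_bytes, installed_bytes):
    # Compare Memory\Available Bytes with the installed RAM.
    percent = 100.0 * available_bytes / installed_bytes
    if percent < 2.0:
        return "alert"        # below 2% of installed RAM
    if percent > 10.0:
        return "comfortable"  # above 10% of installed RAM
    return "watch"            # in between: keep an eye on the trend (assumed label)


# Hypothetical server with 4 GiB of installed RAM.
print(classify_available_memory(1 * GIB, 4 * GIB))    # comfortable (25%)
print(classify_available_memory(256 * MIB, 4 * GIB))  # watch (6.25%)
print(classify_available_memory(64 * MIB, 4 * GIB))   # alert (about 1.6%)
```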

Another corroborating indicator of insufficient RAM is Memory\Transition Faults/sec, which measures how often recently trimmed pages on the standby list are re-referenced. If this counter slowly starts to rise over time, it could also indicate that you’re reaching a point where you no longer have enough RAM for your server to function well.

Disks Fast Enough?

Finally, let’s look at a couple of indicators of well-functioning hard disks in your system. Watch the PhysicalDisk(instance)\Disk Transfers/sec counter for each physical disk; if it goes above 25 disk I/Os per second, then you’ve got poor response time for your disk. A disk bottleneck can significantly impact response time for applications running on your system, so you should investigate this further by tracking PhysicalDisk(instance)\% Idle Time, which measures the percentage of time that your hard disk is idle during the measurement interval. If you see this counter fall below 20%, then you’ve likely got read/write requests queuing up for a disk that is unable to service them in a timely fashion. In this case it’s time to upgrade your hardware to use faster disks or scale out your application to better handle the load.
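The two disk rules of thumb can be applied to each physical disk in turn. The sketch below assumes you already have one Disk Transfers/sec value and one % Idle Time value per disk; the function name, the finding messages, and the sample numbers are hypothetical.

```python
def disk_findings(transfers_per_sec, idle_percent, max_transfers=25, min_idle=20.0):
    # Apply the two thresholds from the text to one physical disk instance.
    findings = []
    if transfers_per_sec > max_transfers:
        findings.append("Disk Transfers/sec above 25: response time is likely poor")
    if idle_percent < min_idle:
        findings.append("% Idle Time below 20%: requests are likely queuing")
    return findings


# Hypothetical samples for two disks: (transfers/sec, % idle).
disks = {"0 C:": (10, 80.0), "1 D:": (40, 12.0)}

for name, (transfers, idle) in disks.items():
    print(name, disk_findings(transfers, idle) or "no findings")
```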