A few months ago I wrote an article on multi-core CPUs, in which I talked a little about how software can take advantage of many cores. In this article I will delve deeper into the world of multithreading and highlight some approaches that can alleviate some of the difficulties of developing a well-threaded application.

J2EE

One application technology which many of you will be familiar with, at least in passing, is J2EE. J2EE is an environment used in many businesses to build enterprise-level applications. Applications built on J2EE technology can easily take advantage of multi-core CPUs. In fact, this technology can take advantage of multiple machines, each with many processors which, in turn, could have many cores.

Using J2EE for enterprise applications allows a developer to code an application without giving much thought to threads; this is the real power behind J2EE. J2EE applications become multithreaded by changing settings on the application server; multithreading is therefore managed by the container. How does this happen? Well, basically the business logic is coded and then deployed onto a server within a logical container (containers contain the business logic… get it? Those crazy Java cats). Settings on the container then determine how many concurrent instances of this piece of business logic can run. The container can also determine how many connections to resources such as databases can be used at once. These container-managed properties, while at times difficult to work with, provide a means to separate multithreading from the application code. This is good because it allows the multithreading to be tuned to the hardware the application runs on without altering the application code. It is quite common for enterprise hardware to change: machines can be added to the server cluster, or processors can be added to or removed from the machines already in the cluster. Each of these alterations affects the multithreading potential of the applications running on the hardware.

OpenMP

Keeping the multithreading logic separate from the business logic should be a key objective for developers writing massively parallel applications. There are many reasons for this: ease of development, ease of debugging, and ease of altering the application. When one is developing in C/C++ or FORTRAN, a popular choice for this is OpenMP. OpenMP is an API used to produce multithreaded code effectively and efficiently.

Essentially, OpenMP is used by adding a set of instructions to the code in the form of annotations: compiler directives (#pragma lines) in C/C++ and specially formatted comments in FORTRAN. Code is written to be sequential and then annotations are added at the appropriate locations afterwards. When the code is compiled with an OpenMP-compatible compiler, the annotations are read and the code is compiled in such a way as to make use of threads as directed by the annotations.

While researching this article I came across JOMP. JOMP is an OpenMP-like technology which can be used with Java. It is not clear to me what the official relationship between JOMP and OpenMP is, if any; if someone reading this knows more about it, please send me an email. I would be quite interested to learn more. Even though Java has a built-in API for the use and control of threads, using such an implementation is still useful.

This method of parallel programming is very advantageous. Since a program is coded to run sequentially, and the annotations are directives (or, in FORTRAN, comments) that a compiler without OpenMP support simply ignores, the code can be compiled with a traditional compiler and run sequentially. The same code can then be compiled with an OpenMP compiler and run with multiple threads in parallel. This means that the developer does not have to alter the code if the program is to run on two different hardware architectures: one which encourages multithreading and one which discourages it.
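As a minimal sketch of this idea in C (the function names `scale_by_two` and `built_with_openmp` are made up for illustration), the loop below is written sequentially and carries a single OpenMP annotation. The `_OPENMP` macro is defined by OpenMP-aware compilers, so it can be used to report how the code was built.

```c
#include <assert.h>
#include <stddef.h>

/* Doubles every element of `data`. The loop is ordinary sequential C;
   the pragma asks an OpenMP compiler to divide the iterations among
   threads. A compiler without OpenMP support ignores the pragma. */
void scale_by_two(int *data, int n)
{
    assert(data != NULL || n == 0);

    #pragma omp parallel for
    for (int i = 0; i < n; i++) {
        data[i] *= 2;
    }
}

/* Returns 1 if this file was compiled with OpenMP enabled, 0 otherwise. */
int built_with_openmp(void)
{
#ifdef _OPENMP
    return 1;
#else
    return 0;
#endif
}
```

With GCC or Clang, for example, OpenMP is enabled by the `-fopenmp` flag. Without that flag the same source still compiles and `scale_by_two` produces the same result on a single thread.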

Another advantage of OpenMP is that portions of the code can be annotated incrementally with very little alteration of the code. This allows for more frequent testing to ensure that the code functions properly. This is important because multithreading a portion of the code can change how the program executes; a compiler can rarely detect whether the result is still correct, so it should always be checked by thorough testing.

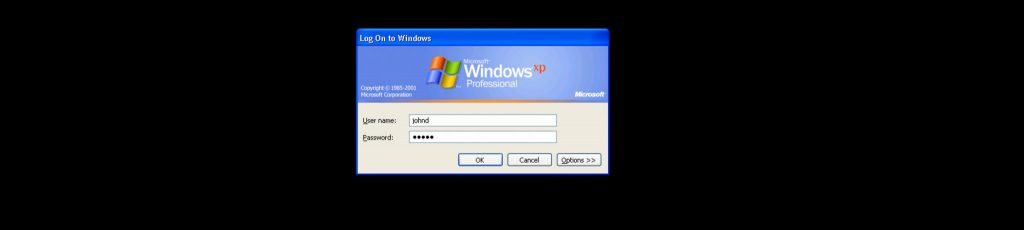

Figure 1: An example of a multithreaded application flow. (Source: www.acm.org)

Dangers of Multithreading

One common mistake made by developers when multithreading their applications is with the multithreading of loops. For example, if one wanted to iterate over an entire array of integers and simply add one to each value, then the iterations could be divided among threads and performed at the same time. OpenMP accomplishes this (in C/C++) with the annotation #pragma omp parallel for. But perhaps one wants to iterate over that same array and alter each value by adding the value of the previous element. In this case it would be a mistake to multithread this loop; if the loop's iterations occur simultaneously, then you cannot guarantee that the previous element has already been altered to contain its new value. Running such a loop in multiple threads could result in a drastically different array of integers compared to running the loop sequentially in one thread.
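The two loops described above might look like the following sketch (the function names `add_one` and `add_previous` are hypothetical). Only the first loop is annotated; the second is deliberately left sequential because each iteration depends on the one before it.

```c
#include <assert.h>
#include <stddef.h>

/* Independent iterations: element i depends only on itself, so the
   iterations may run in any order, or at the same time. */
void add_one(int *a, int n)
{
    assert(a != NULL || n == 0);

    #pragma omp parallel for
    for (int i = 0; i < n; i++) {
        a[i] += 1;
    }
}

/* Loop-carried dependency: element i needs the already-updated value
   of element i - 1. Adding "#pragma omp parallel for" here would be a
   bug, so the loop is left sequential. */
void add_previous(int *a, int n)
{
    assert(a != NULL || n == 0);

    for (int i = 1; i < n; i++) {
        a[i] += a[i - 1];
    }
}
```

Starting from the array {1, 2, 3, 4}, the sequential `add_previous` yields {1, 3, 6, 10}. If every iteration instead read the original, unmodified neighbour, the result would be {1, 3, 5, 7}, and a real multithreaded run could land anywhere between the two.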

Another mistake, commonly made by even the most experienced developers, is with variables used by multiple threads simultaneously. OpenMP specifically is used for programs running in shared memory, and therefore requires developers to ensure that threads are not altering variables used by other threads. Luckily, OpenMP supports annotations which ensure that this doesn't occur within a given block of multithreaded code (for example, by making a variable private to each thread or by protecting an update as a critical section). But developers still have to be aware of the problem so that they use the correct annotations where required. Again we're back to careful, incremental testing to ensure proper operation of the code when multithreaded.
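As one hedged illustration of such an annotation, the sketch below sums an array (the function name `sum_array` is made up for this example). The variable `total` would be shared by all threads, so unsynchronized updates to it would race; OpenMP's `reduction` clause gives each thread its own private copy and combines the copies when the loop finishes.

```c
#include <assert.h>
#include <stddef.h>

/* Returns the sum of the first n elements of `a`.
   Without the reduction clause, every thread would update the shared
   variable `total` at the same time and some additions could be lost. */
long sum_array(const int *a, int n)
{
    assert(a != NULL || n == 0);

    long total = 0;

    #pragma omp parallel for reduction(+:total)
    for (int i = 0; i < n; i++) {
        total += a[i];
    }

    return total;
}
```

The reduction clause is only one option; whether it, a private variable, or a critical section fits best depends on what the loop body does with the shared data.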

Performance

When done carefully, multithreading an application can lead to significant performance improvements. Of course, this requires that the application be run on hardware capable of supporting many threads efficiently. But even with the appropriate hardware in place, there are limits to how much improvement one can achieve via multithreading. As I mentioned previously (with my for loop example), there are blocks of code which cannot be multithreaded. Therefore, if large portions of the code cannot be multithreaded, the execution time of these portions will be a limiting factor in the overall execution time of the program. Amdahl's law is often used to estimate the maximum possible performance benefit from multithreading an application.
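For reference, Amdahl's law is commonly stated as follows, where $p$ is the fraction of the execution time that can be multithreaded and $n$ is the number of threads running truly in parallel (the symbols and the figures in the example are chosen here for illustration):

$$
S(n) = \frac{1}{(1 - p) + \dfrac{p}{n}}, \qquad \lim_{n \to \infty} S(n) = \frac{1}{1 - p}
$$

As a worked example with assumed figures, a program in which 90% of the run time can be multithreaded ($p = 0.9$) running on 8 cores gives

$$
S(8) = \frac{1}{0.1 + \dfrac{0.9}{8}} = \frac{1}{0.2125} \approx 4.7
$$

and no number of cores could push the speedup past $1 / 0.1 = 10$.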

There are some additional performance benefits, other than the obvious simultaneous execution of tasks, to running a multithreaded application on multi-core processors. One is that there is more cache memory for the application to take advantage of. For example, Tilera's Tile64 processor, discussed in my previous article, contains a significant logical L3 cache. In many cases this increase in usable cache memory can significantly reduce the time required for memory reads and can therefore significantly decrease execution time.