Back in the days of Windows Server 2012 when Hyper-V first began to fully support the NUMA architecture, most of the best practices guides that I remember reading stated that virtual machines should not span multiple NUMA nodes. The reason for this particular best practice was that stretching a VM across NUMA nodes can cause its performance to suffer. While there is definitely something to be said for squeezing the optimal performance from your virtual machines, there are certain situations in which it may be advantageous to allow a VM to span multiple NUMA nodes.

NUMA node performance issues

As previously mentioned, it is generally advisable to configure Hyper-V to disallow NUMA spanning when possible. If a Hyper-V host server contains multiple physical processors, then its memory slots are arranged in a way that mirrors the CPU architecture. A system with two physical processors, for example, would have two (or more) NUMA nodes. This matters because each processor has memory that is considered to be local to that processor. A processor can, of course, access non-local memory (memory that is local to a different processor within the system), but there is considerable latency involved because the processor has to reach that memory through the other processor rather than accessing it directly. A Hyper-V virtual machine will, therefore, experience the best performance if it is limited to using memory that is local to the CPU cores on which the VM is running.

Why span NUMA nodes?

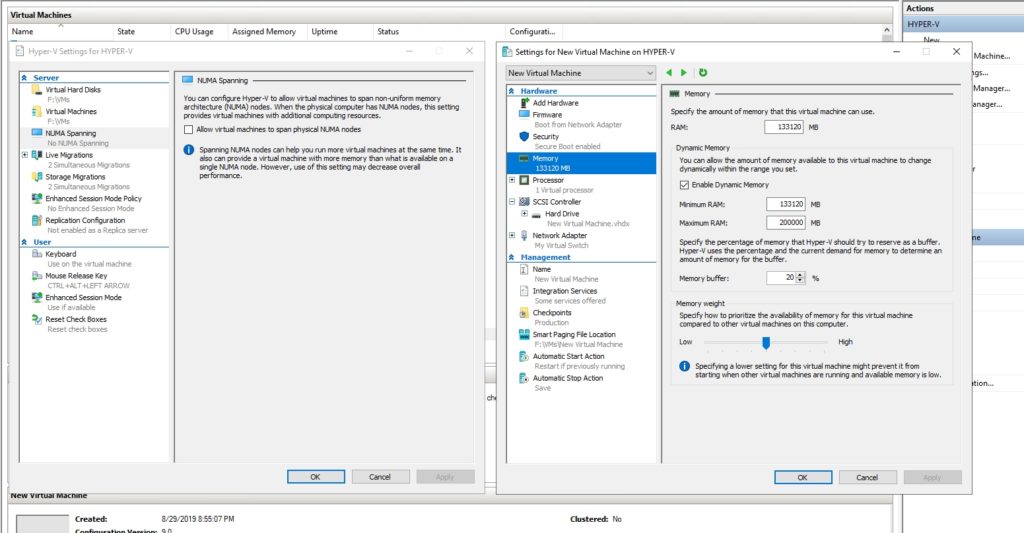

Hyper-V is configured by default to allow virtual machines to span NUMA nodes, as shown in the figure above. This, of course, raises the question of why Microsoft chose to enable NUMA spanning by default when disabling NUMA spanning generally results in better performance.

There are at least three reasons why NUMA node spanning is allowed by default. First, just because VMs are allowed to span multiple NUMA nodes does not mean that they will. Based on my own experience (I can’t find documentation to confirm or deny this), it seems that Hyper-V only stretches a VM across NUMA nodes if it has no other choice. Most of the time, Hyper-V seems to make a concerted effort to avoid having VMs span multiple NUMA nodes.

Second, disallowing NUMA spanning limits the total amount of memory that can be allocated to a virtual machine (assuming that dynamic memory is being used). Consider, for example, that my lab server contains 256 GB of RAM, divided into two NUMA nodes. If I were to disable NUMA node spanning, then Hyper-V would not allow more than 128 GB of RAM to be dynamically assigned to a VM (actually, the limit would be a little bit lower, because of host operating system overhead). Let me show you an example.

If you look at the figure below, you can see that I have disabled NUMA spanning, and I have created a new virtual machine with 130 GB of RAM. Remember, the host has 256 GB of RAM installed, so I have allocated just over half of the host’s memory. No other VMs are currently running.

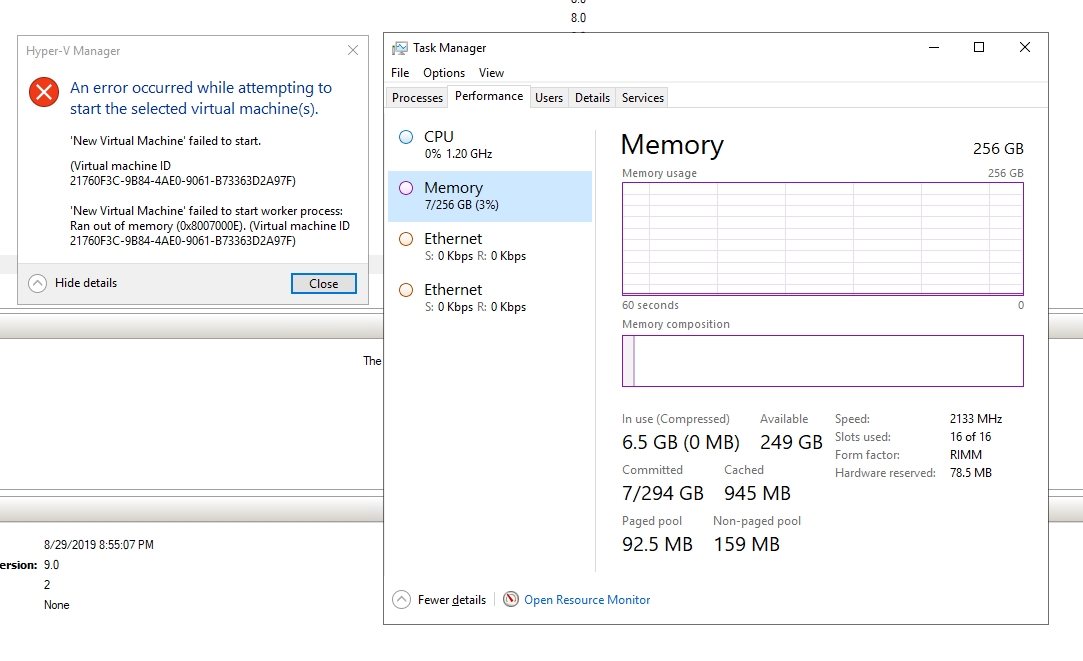

If I attempt to start the virtual machine, Hyper-V generates an error message indicating that the VM failed to start because of insufficient memory. At the same time, the Task Manager indicates that the host has plenty of memory available. The only thing preventing the VM from starting is the fact that I have forbidden Hyper-V from spanning the VM across NUMA nodes.
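The arithmetic behind this failure can be sketched in a few lines of Python. This is only a simplified model, not Hyper-V's actual admission logic: it assumes that host memory is divided evenly across NUMA nodes and it ignores host operating system overhead. The function name `max_vm_memory_gb` is made up for illustration.

```python
def max_vm_memory_gb(host_memory_gb: float, numa_nodes: int,
                     spanning_enabled: bool) -> float:
    """Upper bound on the memory one VM can use in this simplified model.

    Assumptions (not taken from Hyper-V itself): memory is split evenly
    across NUMA nodes, and host operating system overhead is ignored.
    """
    if numa_nodes < 1:
        raise ValueError("numa_nodes must be at least 1")
    if spanning_enabled:
        # The VM may draw on memory from every node.
        return host_memory_gb
    # The VM is confined to the memory of a single node.
    return host_memory_gb / numa_nodes


# The lab server from the example: 256 GB, two NUMA nodes, a 130 GB VM.
host_gb, nodes, vm_gb = 256, 2, 130
for spanning in (True, False):
    limit = max_vm_memory_gb(host_gb, nodes, spanning)
    verdict = "can start" if vm_gb <= limit else "cannot start"
    print(f"spanning={spanning}: limit {limit:.0f} GB, {vm_gb} GB VM {verdict}")
```

With spanning disabled, the model caps the VM at 128 GB, which is why the 130 GB virtual machine is refused even though the host as a whole has memory to spare.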

The third reason why you may not want to disable NUMA spanning is that doing so can lower your overall virtual machine density. As I just showed you, Hyper-V will prevent a VM from starting if its memory is being dynamically allocated and the virtual machine’s memory requirements exceed the amount of memory that is available within a single NUMA node. So with that in mind, imagine a situation in which a Hyper-V server has plenty of memory remaining and can comfortably accommodate additional virtual machines, but the available memory is scattered across NUMA nodes. Those additional virtual machines would not be able to start unless an individual NUMA node had enough memory remaining to accommodate the VM’s needs. The end result would be an underutilized server. The host has additional memory resources, but its NUMA spanning policy is preventing that memory from being used.

If you are not actively trying to achieve the highest possible virtual machine density on your hosts, then the idea of having some memory left over that your VMs can’t use might not seem like a big deal. However, it is conceivable that under the right circumstances disallowing NUMA spanning could cause live migrations to fail. For that to happen, the VM that is being live migrated would have to be configured to use dynamic memory allocation, and the destination host would have to have too little memory remaining in any one NUMA node to accommodate the VM.
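Both the density problem and the live migration problem come down to the same question: is there enough free memory in one place? Here is a minimal sketch of that check, again as a simplified model rather than Hyper-V's real placement logic. The function name `can_place_vm` and the free-memory figures are hypothetical.

```python
from typing import Sequence


def can_place_vm(vm_gb: float, free_per_node_gb: Sequence[float],
                 spanning_enabled: bool) -> bool:
    """Return True if the VM fits on the host under this simplified model."""
    if spanning_enabled:
        # Free memory from all NUMA nodes can be pooled.
        return vm_gb <= sum(free_per_node_gb)
    # Without spanning, a single node must hold the whole VM.
    return any(vm_gb <= free for free in free_per_node_gb)


# Hypothetical host: 40 GB free in total, but split evenly across two nodes.
free_gb = [20.0, 20.0]
for spanning in (True, False):
    fits = can_place_vm(32, free_gb, spanning)
    print(f"spanning={spanning}: 32 GB VM fits = {fits}")
```

In this made-up case the host has 40 GB free, yet a 32 GB VM only fits when spanning is allowed. The same check applies to a destination host that is receiving a live migration.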

Weigh the pros and cons

Although some have long claimed that you should never span a virtual machine across NUMA nodes, there are some completely legitimate use cases for doing so. As such, it is important to weigh the pros and cons of NUMA spanning rather than rigidly adhering to the idea that NUMA node spanning is a bad idea. On one hand, preventing the use of NUMA node spanning can help to ensure optimal virtual machine performance. On the other hand, allowing NUMA node spanning can make it possible for you to create larger virtual machines, while also allowing your Hyper-V host to achieve a higher overall virtual machine density.

Additionally, it is theoretically possible for NUMA spanning to actually improve VM performance. Imagine that a VM has been allocated an amount of memory that is completely inadequate. The virtual machine isn’t going to perform very well because of its lack of memory. If that same VM were allowed to span NUMA nodes, the administrator may be able to allocate more memory to it than had previously been possible, thereby overcoming the memory bottleneck.
