Managing an organization’s many distributed files and file storage systems has always been challenging, but the task has become far more complex in recent years. System admins commonly find themselves managing several different types of cloud and datacenter storage, each with its own performance characteristics and costs. Bringing all of this storage together in a cohesive way while keeping costs in check can be a monumental challenge, and data migrations tend to be disruptive when space runs short. I have seen a few different products that use an abstraction layer to create a consolidated view of an organization’s storage. While these types of products have their place, it is important to keep in mind that not all storage is created equal.

Recently I heard about a product from DataCore called vFilO, which provides a unified view of an organization’s files and their storage resources but also places a heavy emphasis on helping organizations derive the greatest possible cost-benefit by moving data to the most efficient location based on policies. In addition, vFilO provides a distributed file system with deep object capabilities spanning on-premises block, file, and object storage as well as cloud storage.

Working with vFilO

Normally, when I write a software review, I make it a point to install the software in my lab so that I can get a feel for the deployment process. In the case of vFilO, however, logistical challenges meant that I did not get the opportunity to install the software. As such, I cannot provide any insight into what is involved in getting the product up and running, a job that is usually handled by DataCore partners around the globe. My review is based entirely on remote access to a demo environment that was set up by DataCore.

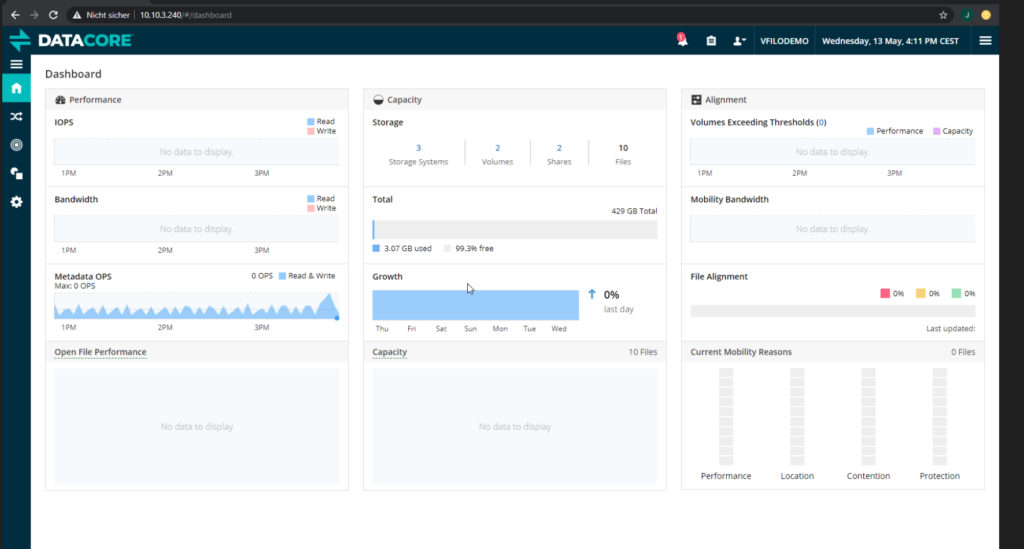

When you log into vFilO, the first thing that you see is the Dashboard screen, shown in the accompanying image.

As you can see in the image, this screen provides you with a variety of storage metrics, including those related to IOPS, bandwidth use, storage consumption, and more. The Dashboard also provides a visual indication of how your data growth is changing over time, which can be handy for storage capacity planning, and it flags any conditions needing your attention.

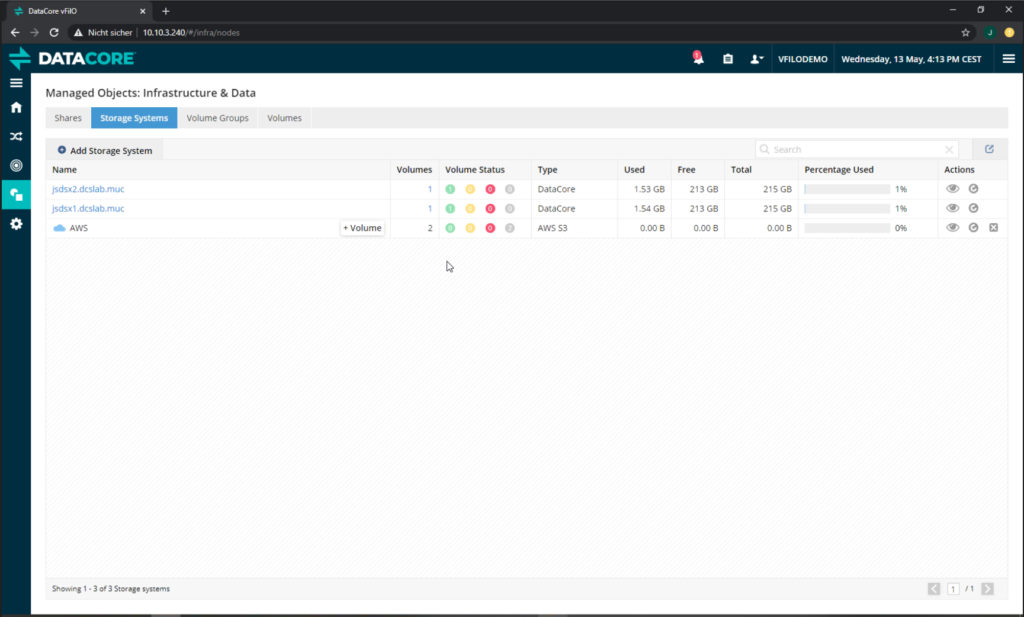

After spending a few minutes familiarizing myself with the Dashboard screen, I moved on to the Manage Objects: Infrastructure and Data screen, shown in the accompanying screenshot. This screen is divided into several tabs, and the screenshot shows the Storage Systems tab. This is where you assimilate existing file servers and NAS devices and add or decommission storage.

As you can see in the image, this particular deployment is currently managing two local storage resources, as well as AWS cloud storage resources. For each of the listed resources, you can see the number of volumes as well as a visual indicator of the volumes’ health. You can also see the volume type as well as the used and free storage capacity. If the storage already had files on it, the original mount points appear as volumes.

I found that vFilO makes it easy to add a storage system. Upon clicking the Add Storage System button, vFilO displays the dialog box shown in the next screenshot. As you can see, vFilO has native support for a variety of file servers, network-attached storage (NAS), on-prem object storage, and public cloud storage types. In case you are wondering, vFilO also fully supports common infrastructure components such as Microsoft Active Directory for access controls. This way, file ownership and permissions remain intact.

Next, I decided to take a look at the Shares tab. As you would expect, the Manage Objects: Infrastructure and Data screen’s Shares tab shows you the top-level share used for the global namespace, with directories underneath it corresponding to shares that currently exist within the storage that you have added to vFilO. This tab also gives you the ability to create new shares, as shown in the next figure, or to manage existing shares. One thing that I particularly liked about this interface is that when you create a share, you have the option of creating an alert that can notify you when the share begins to run low on available space. The Volume tab similarly lets you set an upper threshold on capacity so the software can better balance use of free space.

The thing that really sets vFilO apart from some of the other file and object storage management tools on the market is its use of objectives. Before I explain what these objectives do, take a look at the screenshot below. Multiple objectives have been applied to each of the shares.

The idea behind the objectives is that file properties, owners, access patterns, and organizational priorities dictate where the data should be stored and what protective measures need to be put into place. Objectives are a way that you can tell vFilO what your goals and intentions are for data placement. The software then transparently uses the available resources to accomplish your objectives (or alerts you if it can’t). If, for example, you indicated that you needed to achieve five nines of durability, then vFilO would likely create multiple copies of the data on different storage devices to meet that goal. You can see some of the objective options in the screenshot below.

Even though DataCore gives you plenty of predefined objectives, it is also possible to create your own custom objectives by using a simple rules editor. You can see what this looks like in the next figure. Here, I have begun creating a rule that will apply to files that have been inactive for four weeks. In a production environment, such a rule might be used to automatically move data to a less expensive storage tier or archive it in the cloud, in which case the data would be deduplicated and compressed. vFilO can help drive significant efficiency improvements and cost savings.
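To make the idea of an inactivity rule concrete, here is a minimal Python sketch of the selection logic such a rule implies. It is not vFilO's rules editor or API, neither of which is documented in this review. The function name `inactive_files`, the four-week constant, and the reliance on file system timestamps are all assumptions made purely for illustration.

```python
import time
from pathlib import Path

# Assumption: "inactive for four weeks" is read as no access and no
# modification within the last 28 days.
FOUR_WEEKS_SECONDS = 4 * 7 * 24 * 60 * 60


def inactive_files(root, now=None, max_idle_seconds=FOUR_WEEKS_SECONDS):
    """Return the files under root that have been idle for at least
    max_idle_seconds, judged by the newer of their access and
    modification timestamps. Hypothetical helper, not a vFilO call."""
    now = time.time() if now is None else now
    stale = []
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        info = path.stat()
        last_activity = max(info.st_atime, info.st_mtime)
        if now - last_activity >= max_idle_seconds:
            stale.append(path)
    return sorted(stale)
```

A script built on this would hand the returned paths to whatever does the tiering or archiving. Keep in mind that many file systems are mounted with options such as `relatime` or `noatime`, so access times can be coarse or missing, which is one reason a product that tracks access patterns itself is better placed to make this decision.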

This brings up an important point. vFilO can dynamically relocate data in a way that helps you to minimize costs while also delivering the required level of performance and protection. Better still, these moves are completely transparent to the end user. End users access their files in the same way that they always have, with the same directory/folder hierarchy that they are accustomed to, and are silently redirected to the data’s current location. This is unlike conventional file management, where the file hierarchy is tied to the storage location, making data movement quite disruptive.

vFilO combines the functions of a cloud gateway, a data optimization engine, a virtualization layer with multi-site namespace capabilities, and a distributed file system into a single product.

Pricing

The price that DataCore charges for a vFilO license varies based on the type of data that is being managed. The MSRP starts at $354 (U.S.) per TB per year for active data and $175 per TB per year for inactive or archive data. This does not include the cost of the underlying storage. It is also worth noting that the price per TB decreases materially with volume.
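As a rough worked example of how these list prices add up, the Python sketch below estimates an annual license cost from the two starting MSRP figures quoted above. The function name and the 20 TB / 80 TB split are hypothetical. Because the sketch ignores volume discounts and the cost of the underlying storage, treat the result as an approximate license-only ceiling rather than a quote.

```python
# Starting MSRP figures quoted in this review, in U.S. dollars per TB per year.
ACTIVE_USD_PER_TB_YEAR = 354
INACTIVE_USD_PER_TB_YEAR = 175


def annual_license_cost(active_tb, inactive_tb):
    """Estimate the yearly license cost at starting list price.

    Hypothetical helper: ignores volume discounts and storage costs."""
    if active_tb < 0 or inactive_tb < 0:
        raise ValueError("capacities must be non-negative")
    return (active_tb * ACTIVE_USD_PER_TB_YEAR
            + inactive_tb * INACTIVE_USD_PER_TB_YEAR)


# Example: 20 TB of active data plus 80 TB of inactive or archive data.
# 20 * 354 = 7,080 and 80 * 175 = 14,000, for a total of 21,080.
print(annual_license_cost(20, 80))
```

For comparison, licensing the same 100 TB entirely as active data would come to 35,400 at list price, which shows why classifying data as inactive matters to the bill.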

The verdict

Whenever I write a product review for TechGenix, I conclude it by giving the product a star rating. These ratings range from zero to five stars, with five stars being a perfect score. With that in mind, I decided to give DataCore’s vFilO a score of 4.7 stars, which is a gold star review.

As I worked with vFilO, I found its interface to be relatively intuitive so long as you have a basic understanding of file shares and enterprise storage, although I do think that some of the metrics on the Dashboard screen could benefit from a bit more clarity.

I particularly liked the ability to assign objectives to shares, directories, and even individual files, and I also liked the seamless blending of block, file, and object storage. It’s a new generation of storage system that is flexible and very powerful.

Rating 4.7/5