Over the last several years, server virtualization has become one of the dominant technologies in IT.

But what is server virtualization, and how does it work?

Server virtualization is really just a fancy way of referring to hardware sharing. Sharing hardware can dramatically reduce an organization's costs, while also providing numerous other benefits.

To understand why hardware sharing is such a big deal, think for a moment about the computer that you use every day. It might be a desktop, a laptop, a PC, or a Mac. The specifics don’t really matter. Regardless of what you are using, your computer has built-in hardware resources such as a CPU, memory, a network adapter, and a hard disk. The computer also has an operating system. This operating system might be Windows, Mac OS X, Linux, or perhaps even something else. There are also applications (programs) that run on top of your computer’s operating system.

Not all that long ago, network servers were very similar to your personal computer. Like the computer that is sitting on your desk, these servers had hardware resources and an operating system, and they ran one or more applications or infrastructure services. Although it is often possible to install multiple applications onto a single server operating system, it has become common practice to install one application per server. Doing so helps to prevent applications from interfering with one another.

So with this idea in mind, imagine having to take the same approach with your personal computer, purchasing a separate computer for each application that you wanted to run. How cluttered would your home or office become? What would it be like trying to take ten different laptops through the security checkpoint at the airport? How much would all of that cost?

Thankfully, desktops, laptops, tablets, phones, and other endpoint devices are really good at running multiple applications, so we don't have to purchase a separate device for each application that we want to run. Hopefully, though, this example paints a picture of some of the challenges that network administrators often faced in the datacenter. Server hardware tends to be expensive, so it costs a lot of money to use dedicated hardware for each application. Of course, network servers also incur other costs such as licensing, support, maintenance, power, and cooling.

Many organizations found that they were spending a fortune on their IT departments, and that their datacenters were bursting at the seams trying to accommodate all of the required hardware. At the same time, server hardware was becoming far more powerful. This was to be expected, since technology improves over time, but as server hardware grew more powerful, network administrators sometimes found that they were using only a tiny fraction of each server's capacity.

Server virtualization comes into play

Server virtualization is based on the idea that, because server hardware has grown so powerful, a single server can easily handle multiple workloads. Sure, there are still workloads that require dedicated hardware, but most workloads need far fewer hardware resources than a modern server is equipped with. A server's extra capacity can therefore be used to run additional workloads.

But wait a minute. What about the best practice of running workloads on dedicated hardware as a way of preventing applications from interfering with one another? Well, it is still a good idea to give each application its own server. Doing so helps to prevent conflicts and also helps to avoid a variety of security issues. Today, however, that server does not have to be a physical machine: an operating system and its applications can run inside a virtual server instead. A virtual server behaves almost identically to a physical server. In fact, a virtual server's operating system might not even know that it is running on a virtual machine.

At the beginning of this article, I described a computer as consisting of hardware, an operating system, and one or more applications. A virtual server is similar to a physical server in that it has an operating system and is able to run applications. However, a virtual server runs on virtual hardware.

Virtual hardware and hypervisors

So what is this virtual hardware, and where does it come from? Virtual hardware is really nothing more than physical hardware that has been assigned to a virtual machine. The virtual machine doesn’t see all of the hardware that is installed in the physical server. Instead, it sees only the hardware that has been allocated to it. Hence the virtual machine has a filtered view of the available hardware. By sharing hardware in this way, it becomes possible for a single physical server to host multiple virtual servers.

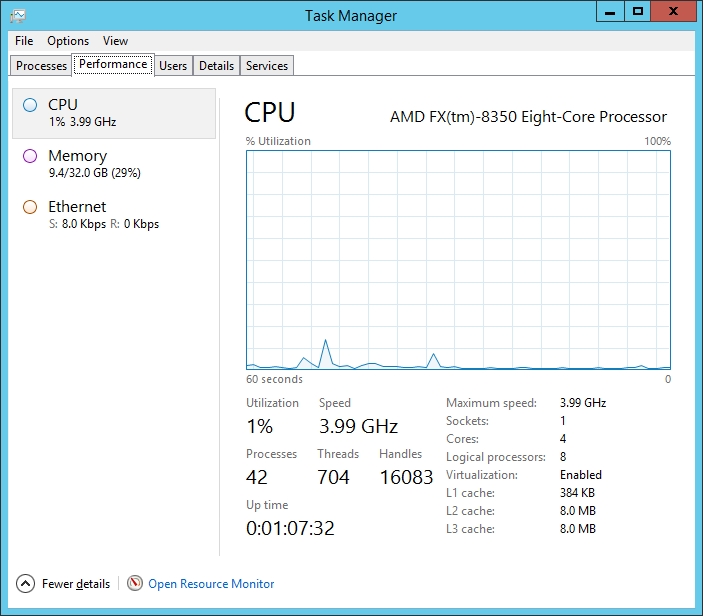

Let me give you a more concrete example. The figure above shows the hardware that exists on one of my physical servers. As you can see, this server has an eight-core CPU and 32GB of memory.

The server that is shown above is running Microsoft’s Hyper-V. Hyper-V is what is known as a hypervisor. The hypervisor is the software component that makes it possible to carve up the available system resources into virtual machines. Hyper-V isn’t the only hypervisor that is available. Other vendors such as VMware also offer hypervisors.

Hyper-V Manager, pictured above, is the management console that comes with Hyper-V. The top and middle sections of the console list the virtual machines that exist on this server. Only three of the virtual machines shown in the figure are powered on right now, but you can get a sense of the way that resources are shared. The physical server contains 32GB of memory. Two of the virtual machines have been allocated 4GB of memory each, and a third has been allocated 2GB. As such, the virtual machines are using 10GB (4GB + 4GB + 2GB) of the server's 32GB.
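The memory arithmetic above can be expressed as a short Python sketch. It is a simplified model, not anything Hyper-V exposes. It assumes fixed (static) memory assignments and ignores any memory the host reserves for itself. The VM names are hypothetical, as is the fourth, powered-off VM.

```python
from dataclasses import dataclass


@dataclass
class VirtualMachine:
    name: str        # hypothetical VM name, for illustration only
    memory_gb: int   # static memory assigned to the VM
    running: bool


def memory_in_use(vms: list[VirtualMachine]) -> int:
    """Total memory (GB) assigned to powered-on VMs in this simplified model."""
    return sum(vm.memory_gb for vm in vms if vm.running)


HOST_MEMORY_GB = 32

vms = [
    VirtualMachine("vm-a", 4, running=True),
    VirtualMachine("vm-b", 4, running=True),
    VirtualMachine("vm-c", 2, running=True),
    # Hypothetical powered-off VM: consumes no host memory in this model.
    VirtualMachine("vm-d", 4, running=False),
]

used = memory_in_use(vms)
print(f"{used}GB of {HOST_MEMORY_GB}GB assigned; {HOST_MEMORY_GB - used}GB remaining")
```

Each running VM only "sees" its own `memory_gb`. A 4GB VM in this model has no view of the rest of the host's memory.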

Although the hypervisor is the component that allows virtual machines to share hardware resources, the hypervisor cannot do this on its own. Modern hypervisors such as Hyper-V require the server's processor to support hardware-assisted virtualization (Intel VT-x or AMD-V). Modern servers usually contain a BIOS (or UEFI firmware) setting that can be used to enable or disable this support. When this setting is enabled, the hypervisor can work with the physical hardware to allocate resources to virtual machines. In doing so, the hypervisor and the server hardware work together to create isolation boundaries, so that each virtual machine has its own hardware resources and its own dedicated operating system.
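On an x86 Linux machine, one common way to see whether the processor advertises hardware virtualization extensions is to look for the `vmx` (Intel VT-x) or `svm` (AMD-V) flag in `/proc/cpuinfo`. The sketch below is an illustration under that assumption, not something Hyper-V itself does. The flag shows what the CPU supports. Whether the feature is currently enabled in the firmware may need to be confirmed separately.

```python
from pathlib import Path

# Flag names the Linux kernel reports for x86 hardware virtualization extensions.
VIRT_FLAGS = {"vmx": "Intel VT-x", "svm": "AMD-V"}


def virtualization_extensions(cpuinfo_text: str) -> set[str]:
    """Return the extension names whose flags appear in /proc/cpuinfo-style text."""
    found = set()
    for line in cpuinfo_text.splitlines():
        # On x86, each logical CPU has a line like "flags : fpu vme ... vmx ..."
        if line.startswith("flags"):
            flags = line.split(":", 1)[1].split()
            found.update(VIRT_FLAGS[f] for f in flags if f in VIRT_FLAGS)
    return found


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        extensions = virtualization_extensions(cpuinfo.read_text())
        print(extensions or "no virtualization flags reported")
    else:
        print("/proc/cpuinfo not found (probably not a Linux system)")
```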

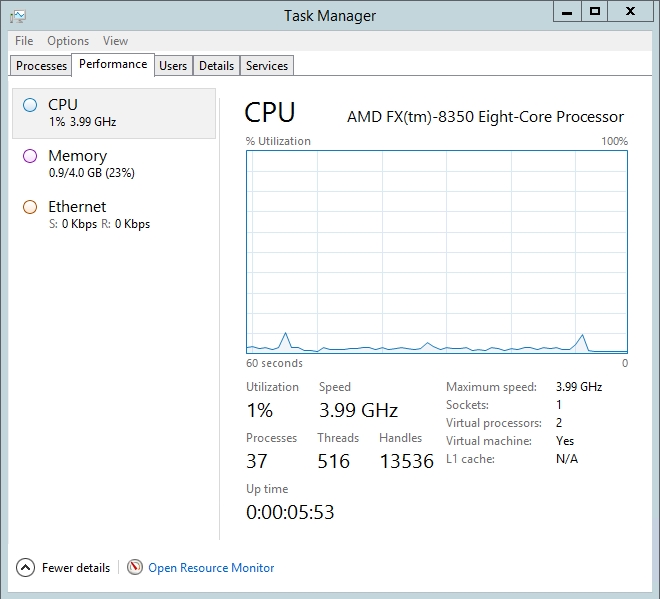

To see what this looks like, take a look at the image above, which shows one of the virtual machines on the Hyper-V server. You will notice that this virtual machine is running in a window, and that it is running a normal Windows Server operating system. You will also notice that the virtual machine sees only the 4GB of memory that has been allocated to it. It cannot see the host server's other 28GB.

Limitless possibilities of server virtualization

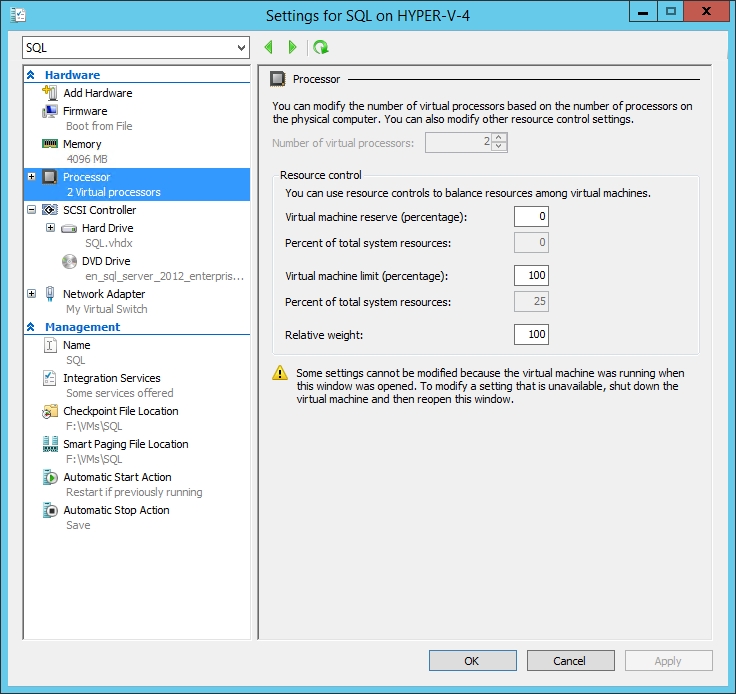

It is worth noting that although isolation boundaries do exist between virtual machines, allocated hardware is not necessarily locked away so that no other virtual machine can use it. Imagine, for example, that a server contains four CPU cores. It would be easy to think of such a system as needing one core for the hypervisor, and being able to support three single-core virtual machines with the remaining CPU cores. Such a configuration could be implemented, but it doesn’t have to be. Hyper-V allows for resource overprovisioning.

A four-core system might, for example, support the hypervisor, plus five virtual machines with two virtual CPUs each. How is this possible on a system that only has four cores? Such a configuration is sometimes possible because a workload might not need all of the resources that a CPU core can provide. If you look back at the Hyper-V Manager illustration, for example, you will notice that the virtual machines are showing 0% CPU utilization. This is because the host server's CPU delivers far more performance than any of the virtual machines needed at that moment.
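The arithmetic behind the four-core example is easy to check. Five virtual machines with two virtual processors each add up to ten virtual processors on four physical cores, an overcommit ratio of 2.5:1. The sketch below computes that ratio. It then estimates how many physical cores' worth of work the VMs would generate at an assumed average utilization. The hypervisor's own CPU use is ignored, and the utilization figures are made up purely to show one case that fits and one that does not.

```python
from fractions import Fraction

PHYSICAL_CORES = 4
VM_COUNT = 5
VCPUS_PER_VM = 2

total_vcpus = VM_COUNT * VCPUS_PER_VM                # 10 virtual processors
overcommit = Fraction(total_vcpus, PHYSICAL_CORES)   # 5/2, i.e. 2.5 vCPUs per core


def cores_demanded(avg_utilization: float) -> float:
    """Physical cores' worth of work if every vCPU is busy avg_utilization (0.0-1.0) of the time."""
    return total_vcpus * avg_utilization


print(f"{total_vcpus} vCPUs on {PHYSICAL_CORES} cores = {float(overcommit)}:1")
# Assumed utilization figures, chosen only to illustrate the two outcomes.
for util in (0.3, 0.5):
    demand = cores_demanded(util)
    status = "fits" if demand <= PHYSICAL_CORES else "exceeds host capacity"
    print(f"at {util:.0%} average utilization: {demand:.1f} cores needed, {status}")
```

At 30% average utilization, ten vCPUs need about three cores' worth of CPU time, so the four-core host keeps up. At 50%, they need five, and the host is oversubscribed.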

If a core is not being used to its full potential, then its unused CPU cycles can be allocated to another workload. When you create a virtual machine in Hyper-V, you allocate virtual processors to the virtual machine. Although virtual processors are loosely related to physical CPU cores, there is not necessarily a one-to-one relationship between the two. In the image above, for example, you can see that this particular virtual machine has been allocated two virtual processors, even though the underlying host has only a single physical processor. Task Manager displays the number of cores available to the virtual machine, but does not display individual CPUs. A quick look at Resource Monitor, however, shows that this virtual machine thinks that it has two CPUs, which are listed on the right side of the Resource Monitor window.

The hypervisor allows you to define the resources that you need for your virtual machines, and then it does its best to provide the necessary hardware resources. Of course, a host server that has been overloaded with too many virtual machines (or with virtual machines that consume too many resources) will begin to see its performance suffer.
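Here is a toy model of how spare CPU time can flow to busier workloads, and what happens when the host is overloaded. It is not Hyper-V's scheduler. As a stand-in, it uses a simple max-min fair-share allocation and measures demand in "physical cores' worth" of CPU time. It also assumes each VM's demand is already capped at its virtual processor count. The VM names and demand figures are hypothetical.

```python
def max_min_fair_share(demands: dict[str, float], capacity: float) -> dict[str, float]:
    """Split `capacity` (in cores) among VMs using max-min fairness.

    VMs that want less than an equal share get everything they ask for; the
    capacity they leave unused is split evenly among VMs that want more.
    """
    allocation: dict[str, float] = {}
    remaining = capacity
    # Serve the smallest demands first so their unused share flows to others.
    pending = sorted(demands.items(), key=lambda item: item[1])
    while pending:
        equal_share = remaining / len(pending)
        name, demand = pending[0]
        if demand <= equal_share:
            allocation[name] = demand
            remaining -= demand
            pending.pop(0)
        else:
            # Every remaining VM wants more than an equal share: split evenly.
            for vm_name, _ in pending:
                allocation[vm_name] = equal_share
            break
    return allocation


HOST_CORES = 4.0

# Lightly loaded host: total demand (3 cores) fits, so every VM gets what it asks for.
print(max_min_fair_share({"web": 0.5, "db": 1.5, "batch": 1.0}, HOST_CORES))

# Overloaded host: total demand (6.5 cores) exceeds 4, so the busy VMs are squeezed.
for vm, share in max_min_fair_share(
    {"web": 0.5, "db": 2.0, "batch": 2.0, "build": 2.0}, HOST_CORES
).items():
    print(f"{vm}: {share:.2f} cores")
```

In the overloaded case, the light `web` VM still gets its half core. Each of the three busy VMs gets about 1.17 cores instead of the 2 it wants, which is the performance drop the paragraph above describes.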

To summarize the concept in compact form:

Server virtualization allows a single physical server to run multiple virtual machines. By making more efficient use of the available hardware, virtual servers can help organizations dramatically reduce costs.