I like it when an expert who works in real-world IT shares his or her hard-earned experience so others can benefit. In the Mailbag and Ask Our Readers section of our weekly IT pro newsletter WServerNews, we’ve often included tips, recommendations, and suggestions contributed by knowledgeable readers who enjoy passing along what they’ve learned. One such contributor is Bill Bach, president of Goldstar Software, a company that specializes in support and training for the community that uses the PSQL database. PSQL is used (and often embedded) by applications in just about any vertical market imaginable, including airlines, banks, doctor’s offices, steel mills, funeral homes, insurance companies, and more. But Bill’s expertise goes far beyond PSQL, and I recently asked him for his top suggestions for implementing a good small business backup solution. In the sections below, Bill describes the approach he follows for backing up data in his own small business, and I’m sure that many of our TechGenix readers will find it useful.

Choose your backup product

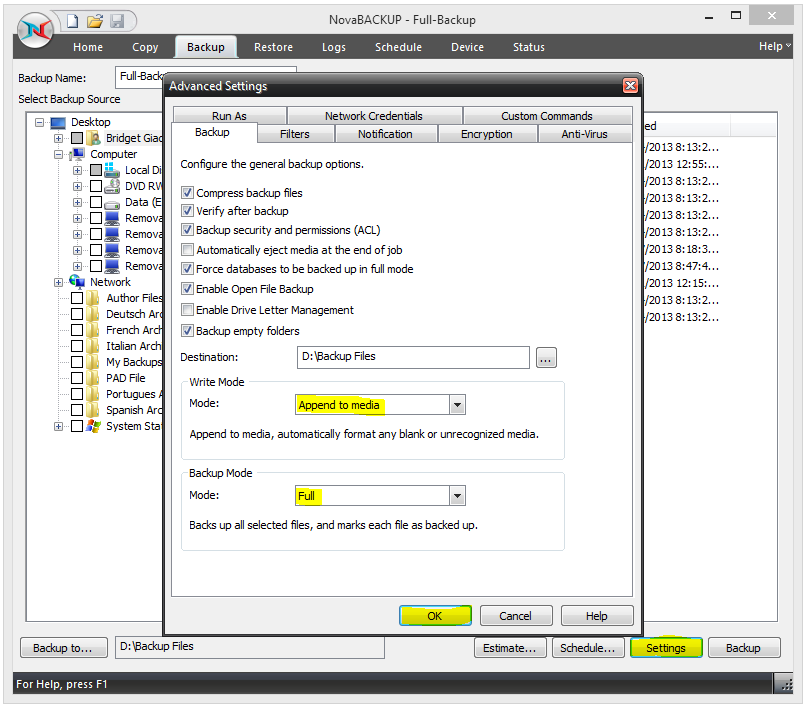

Start by picking a backup product in your price range that supports image backups. Personally, I like NovaBACKUP for this purpose because it fits all my needs and is fairly inexpensive. Be sure also to look for a solution that compresses the entire image backup into a single file, because that makes replicating the data to the external USB drives substantially simpler. I tested one backup product that stored the image backup in thousands of smaller files, so synchronizing that data with the external HDD was much more complicated! In the end I used a tool called Beyond Compare, which I’ll talk more about later, to perform the sync operation, so I probably could have ignored this problem. But with only one backup file, I can name the file with the computer name and date, which makes it very easy to tell whether a backup has run recently. I just sort by name, and if I see the files “NOSTROMO201606” and “NOSTROMO201612,” I can probably assume that it is time for another backup of Nostromo! With the other system I tested, I would have had to filter on file dates and wade through thousands of files to do the same thing. In addition, look specifically for a backup product that supports “bare metal restore.” Finally, look for one that also supports “restore anywhere” functionality, which allows you to restore to the same hardware or to dissimilar hardware. This is important if you have to replace an aging computer.
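The “sort by name” check can also be automated. The sketch below assumes backup files are named exactly as in the examples (computer name followed by YYYYMM, with any file extension already stripped, for instance via `Path.stem`) and treats a computer as due once its newest image is at least six months old. That threshold is an assumption you would tune to your own schedule, and the function names are hypothetical.

```python
import re
from datetime import date

# Assumed naming scheme: COMPUTERNAME + YYYYMM, e.g. NOSTROMO201612 (no extension).
NAME = re.compile(r"^(.+)(\d{4})(\d{2})$")


def latest_backups(names: list[str]) -> dict[str, tuple[int, int]]:
    """Map each computer name to the (year, month) of its newest backup file."""
    latest: dict[str, tuple[int, int]] = {}
    for name in names:
        m = NAME.match(name)
        if not m:
            continue  # ignore files that don't follow the naming scheme
        computer, stamp = m.group(1), (int(m.group(2)), int(m.group(3)))
        if stamp > latest.get(computer, (0, 0)):
            latest[computer] = stamp
    return latest


def computers_due(names: list[str], today: date, max_age_months: int = 6) -> list[str]:
    """List computers whose newest backup is at least max_age_months old."""
    due = []
    for computer, (year, month) in sorted(latest_backups(names).items()):
        age = (today.year - year) * 12 + (today.month - month)
        if age >= max_age_months:
            due.append(computer)
    return due
```

For example, with the files NOSTROMO201606, NOSTROMO201612, and SULACO201703 on hand in mid-June 2017, only NOSTROMO is reported as due.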

Use a NAS box

Next, follow the backup software’s instructions to create bare-metal restore startup media for each computer. This may be a CD-ROM, a DVD, a USB HDD, or even a USB flash drive, which is the cheapest option and the easiest to update. Then begin running your image backups. At this point you really have two options. First, you could get a separate USB HDD (or better yet, two HDDs) for each workstation and store them either with the workstation or in a central location, but this can become a pain to manage, especially if you need to do frequent backups. The better alternative is to set up a low-cost NAS box (think something like a Netgear ReadyNAS 312, which can give you 4 to 8TB of storage). With the NAS online, you can write your backups to a shared folder on it, eliminating the need to connect anything to the individual PCs on your network.
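If you script anything around the NAS share, it helps to build destination paths in one place so every image lands in the shared folder under the computer-name-plus-date convention. The following is a minimal sketch. The share path `\\nas\backups` and the helper name are hypothetical, and the file extension (which depends on your backup product) is deliberately left out.

```python
from datetime import date
from pathlib import PureWindowsPath


def backup_destination(share: str, computer: str, when: date) -> PureWindowsPath:
    """Build a destination on the NAS share such as \\\\nas\\backups\\NOSTROMO201612.

    The share path is a placeholder; the file name follows the
    COMPUTERNAME + YYYYMM pattern (extension omitted).
    """
    file_name = f"{computer.upper()}{when:%Y%m}"  # e.g. NOSTROMO201612
    return PureWindowsPath(share) / file_name
```

Calling `backup_destination(r"\\nas\backups", "nostromo", date(2016, 12, 1))` yields the UNC path `\\nas\backups\NOSTROMO201612`. `PureWindowsPath` is used so that the path logic behaves the same even when the script runs on a non-Windows machine.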

Add some redundancy

To increase your redundancy, which is especially important if you configure the NAS as a RAID 0 array to maximize space (RAID 0 offers no protection against a drive failure), you should also purchase a few large external USB drives (4TB external drives are coming down in price) and periodically (maybe weekly) copy the data from the NAS to the external USB drives. If you do weekly PC backups and rotate through four such USB drives, you gain a full month of offline backup images that you can store in multiple locations.
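A four-drive weekly rotation is plain round-robin arithmetic: count the weeks since the first rotation and take that count modulo the number of drives. The sketch below assumes hypothetical drive labels and a start date you choose yourself. Counting weeks from a fixed date, rather than using calendar week numbers, avoids a hiccup at year boundaries.

```python
from datetime import date


def drive_for_week(today: date, first_rotation: date, drives: list[str]) -> str:
    """Pick which external drive receives this week's copy of the NAS data.

    Weeks are counted from first_rotation, so the rotation stays regular
    across year boundaries; drive labels are placeholders.
    """
    weeks_elapsed = (today - first_rotation).days // 7
    return drives[weeks_elapsed % len(drives)]
```

With drives labeled `USB-A` through `USB-D` and a first rotation on Monday, January 2, 2017, the week of January 9 goes to `USB-B`, and `USB-A` comes around again four weeks after the start. At any point the oldest drive in the set therefore holds roughly month-old images.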

As I mentioned earlier, I use an awesome shareware app called Beyond Compare, which copies changed files from the NAS to my USB 3.0 drives at full network line speed (99 percent of my GbE network). What advantages, you may ask, does Beyond Compare provide compared to simply scripting an XCOPY or ROBOCOPY command? Well, unlike XCOPY and ROBOCOPY, Beyond Compare offers both a script interface and a GUI. Because these backup files are so large (a typical backup for a Windows 7 machine might be 60GB), you want to make sure that you copy only what you need, and only when the destination has enough free space for the data. So I often just launch the Beyond Compare GUI, select the files to sync, and let it go. This lets me handle any files that have been renamed (there’s no sense deleting the old file and copying it again under the new name if I can just rename it), and I can verify that I have enough disk space before starting.
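For readers who want to script something similar, here is a minimal sketch of the two checks described above: copy only files that are missing or changed, and refuse to start if the target drive lacks the free space. It is not how Beyond Compare works internally. The sketch compares size and modification time, an assumption that is cheap for 60GB images where hashing would be slow. It also allows a 2-second tolerance because FAT32-formatted drives store times at 2-second resolution. Rename detection is not covered.

```python
import shutil
from pathlib import Path

MTIME_TOLERANCE = 2.0  # seconds; FAT32 stores modification times at 2-second resolution


def changed_files(src: Path, dst: Path) -> list[Path]:
    """Relative paths of files under src that are missing from dst or differ in size/mtime."""
    changed = []
    for path in src.rglob("*"):
        if not path.is_file():
            continue
        rel = path.relative_to(src)
        target = dst / rel
        if not target.is_file():
            changed.append(rel)
            continue
        s, t = path.stat(), target.stat()
        if s.st_size != t.st_size or abs(s.st_mtime - t.st_mtime) > MTIME_TOLERANCE:
            changed.append(rel)
    return sorted(changed)


def copy_changed(src: Path, dst: Path) -> list[Path]:
    """Copy only changed files, refusing to start if dst lacks the free space."""
    dst.mkdir(parents=True, exist_ok=True)
    todo = changed_files(src, dst)
    # Conservative check: ignores the space that overwritten files would free up.
    needed = sum((src / rel).stat().st_size for rel in todo)
    free = shutil.disk_usage(dst).free
    if needed > free:
        raise OSError(f"need {needed} bytes, only {free} free on {dst}")
    for rel in todo:
        (dst / rel).parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src / rel, dst / rel)  # copy2 keeps mtime, so the next run skips it
    return todo
```

Running `copy_changed` a second time right after a successful run returns an empty list, because `shutil.copy2` preserves the modification time that the comparison relies on.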

My own experience with XCOPY is that it is really slow, nowhere near the line speed I get from Beyond Compare. I also tried ROBOCOPY and XXCOPY but eventually rejected them due to their overall complexity or lack of performance. I haven’t gone back and reviewed these options recently because I’m happy with what is working right now. I also use Beyond Compare every night to synchronize my NAS to an extra drive on my desktop for another layer of redundancy, in addition to pushing this data to external USB HDDs. Finally, whenever I travel, I use the Beyond Compare GUI to perform a manual, two-way sync of data to and from my laptop. That way, if I update one file while on the road and another user updates a different file in the same folder at the office, neither of us loses our changes.
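The two-way sync idea can be sketched as “for each file, the newer copy wins.” This is a simplified stand-in for what a tool like Beyond Compare does interactively, and it rests on stated assumptions. It handles the case described above, where different files in the same folder change on each side. It does not propagate deletions (a deleted file is copied back), and if both sides edit the same file, the older edit is overwritten.

```python
import shutil
from pathlib import Path

MTIME_TOLERANCE = 2.0  # seconds; treat near-identical timestamps as "same version"


def _file_times(root: Path) -> dict[Path, float]:
    """Map each file's path (relative to root) to its modification time."""
    return {p.relative_to(root): p.stat().st_mtime for p in root.rglob("*") if p.is_file()}


def two_way_sync(left: Path, right: Path) -> list[tuple[str, Path]]:
    """Newest-wins sync between two folders; returns the copies performed."""
    left_times, right_times = _file_times(left), _file_times(right)
    actions = []
    for rel in sorted(set(left_times) | set(right_times)):
        lt, rt = left_times.get(rel), right_times.get(rel)
        if rt is None or (lt is not None and lt - rt > MTIME_TOLERANCE):
            src, dst, direction = left / rel, right / rel, "left->right"
        elif lt is None or rt - lt > MTIME_TOLERANCE:
            src, dst, direction = right / rel, left / rel, "right->left"
        else:
            continue  # same version on both sides
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # preserve mtime so the next run sees both copies as equal
        actions.append((direction, rel))
    return actions
```

Because it keeps no record of the previous sync, this sketch cannot tell a conflicting edit from an ordinary update. A reviewed, manual sync like the one Bill runs in the GUI avoids that blind spot.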

One more thing: sometimes I find that a user has dumped a boatload of test data on his machine that gets caught up in the backup. When that happens, I see a spike in the backup size. To fix it, I have the user purge the test data and rerun the backup, and the size comes back down.
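Spotting that kind of spike can be automated by comparing each computer’s newest image with the previous one. In this minimal sketch, the input is a list of (file name, size) pairs that assume the computer-name-plus-YYYYMM naming. You might build that list with something like `[(p.stem, p.stat().st_size) for p in backup_dir.iterdir()]`, where `backup_dir` is a hypothetical path to the NAS folder. The 1.5x threshold is an arbitrary assumption.

```python
import re

NAME = re.compile(r"^(.+)(\d{6})$")  # assumed naming: COMPUTERNAME + YYYYMM


def size_history(entries: list[tuple[str, int]]) -> dict[str, list[int]]:
    """Group backup sizes by computer, oldest first (names sort chronologically)."""
    history: dict[str, list[int]] = {}
    for name, size in sorted(entries):
        m = NAME.match(name)
        if m:
            history.setdefault(m.group(1), []).append(size)
    return history


def find_spikes(entries: list[tuple[str, int]], ratio: float = 1.5) -> list[str]:
    """Computers whose newest backup is more than `ratio` times the previous one."""
    flagged = []
    for computer, sizes in sorted(size_history(entries).items()):
        if len(sizes) >= 2 and sizes[-1] > ratio * sizes[-2]:
            flagged.append(computer)
    return flagged
```

For example, a machine whose image jumps from 60GB to 150GB between backups would be flagged, while one that grows from 55GB to 58GB would not.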

Putting it all together

I currently use the above approach on every PC in our small organization of around 15 users. I keep two backups of each workstation on the NAS, and whenever I make a new backup, I delete the oldest one at the same time to keep the size manageable. Obviously, how this scales depends on factors such as how many PCs you have, the frequency of backups, your retention/rotation scheme, the size of the data, the size of the NAS, and so on. In my office, all user data is stored only on the servers and the NAS, so the local workstations hold just a Windows image and applications, with no critical data. As a result, we can get away with doing image backups every six months or so, and right now I’m good with that. A failure might mean restoring a workstation and then rerunning Windows Update and possibly some app updates, but this is still acceptable. We also do full backups before each critical update. More frequent backups or a longer retention time would increase the storage requirements. Right now, two compressed copies of each PC image fit well under the 4TB capacity of my external USB drives, so moving the data off to the external drives is still a plug-and-play option. If I had a hundred PCs, though, I would probably set up a 24TB ReadyNAS, have it automatically replicate to a second 24TB ReadyNAS for redundancy, and then maybe do HDD backups of the NAS less frequently.
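The keep-two retention rule and the capacity math behind it can both be expressed in a few lines. This sketch assumes the computer-name-plus-YYYYMM naming and, for the estimate, treats every image as roughly the 60GB typical Windows 7 backup size mentioned earlier. Real images will vary. The function only lists which files to delete and leaves the actual deletion to you.

```python
import re
from collections import defaultdict

NAME = re.compile(r"^(.+)(\d{6})$")  # assumed naming: COMPUTERNAME + YYYYMM


def files_to_prune(names: list[str], keep: int = 2) -> list[str]:
    """Return backup files beyond the newest `keep` per computer (oldest first to go)."""
    groups: dict[str, list[str]] = defaultdict(list)
    for name in names:
        m = NAME.match(name)
        if m:
            groups[m.group(1)].append(name)
    prune = []
    for computer in sorted(groups):
        # Same computer prefix, so sorting by name orders by YYYYMM.
        newest_first = sorted(groups[computer], reverse=True)
        prune.extend(newest_first[keep:])
    return prune


def capacity_check(pcs: int, copies: int, image_gb: float, drive_tb: float) -> tuple[float, bool]:
    """Estimate total image size and whether it fits on one external drive."""
    needed_gb = pcs * copies * image_gb
    return needed_gb, needed_gb <= drive_tb * 1000  # decimal TB, as drive vendors quote it
```

Under those assumptions, 15 PCs with two copies each at about 60GB come to 15 × 2 × 60 = 1,800GB, which fits comfortably on a 4TB drive. At a hundred PCs, the same estimate grows to 12,000GB, which is why a larger NAS with replication starts to make sense.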

Photo credit: Shutterstock