Automation Accounts are a powerful tool for operations, security, or any other team that needs to manage Azure resources and keep a Microsoft Azure environment consistent. With an Automation Account, we can define and automate many operational processes. The beauty of Azure Automation is its ability to connect with other Azure products such as Key Vault, Storage Accounts, and Azure Functions, to name a few. In this article, we use a simple scenario: an Automation Account creates a report of all virtual machines per resource group. The script generates a JSON file and saves it in a Storage Account.

The script introduced here collects information about the configuration of all VMs in Microsoft Azure. It is useful in environments where VMs change frequently throughout their lifecycle.

The idea is to take the framework that we are going to build in this article and adapt it to your environment, providing a more secure and modular setup for the operations, security, and infrastructure teams that are responsible for managing Azure resources.

Here are some key points that we are going to use:

- We are going to take advantage of Automation Account variables to retrieve the Storage Account and Key Vault being used for this solution.

- We are going to create a SAS token that is valid for one hour to be able to save the files into the Storage Account.

- The goal is to avoid any password in the code.

Creating the environment and the Automation Account

The first step is to create a resource group to hold the resources that are going to be used by our operations/security team. For this article, we will be creating a resource group called AP-Operations in the Canada Central region.

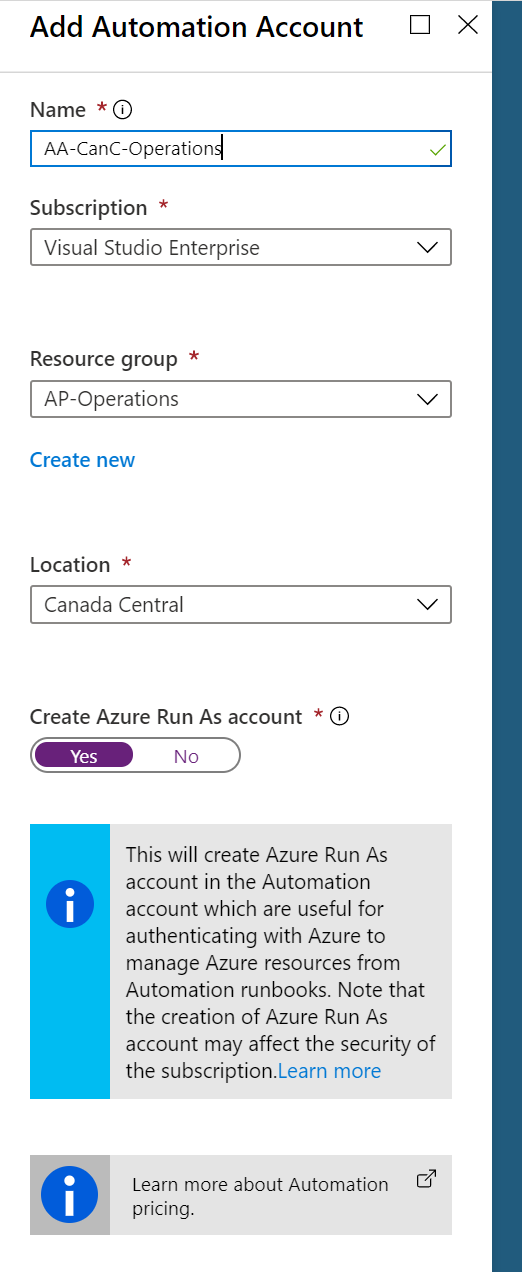
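The same resource group can also be created from PowerShell instead of the portal. The following is a minimal sketch, assuming the Az.Resources module is installed locally and that `Connect-AzAccount` has already been run in the session. The name and region are the ones used in this article.

```powershell
# Assumption: an authenticated Az session already exists (Connect-AzAccount).
$rgParams = @{
    Name     = 'AP-Operations'
    Location = 'canadacentral'   # Canada Central
}

# Creates the resource group that will hold the Automation Account and the Storage Account.
New-AzResourceGroup @rgParams
```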

The second step is to create an Azure Automation Account. Search for Automation Accounts in the Azure Portal. In the new blade, click on Add and fill out all the required information. Make sure to select Yes for the Create Azure Run As account option.

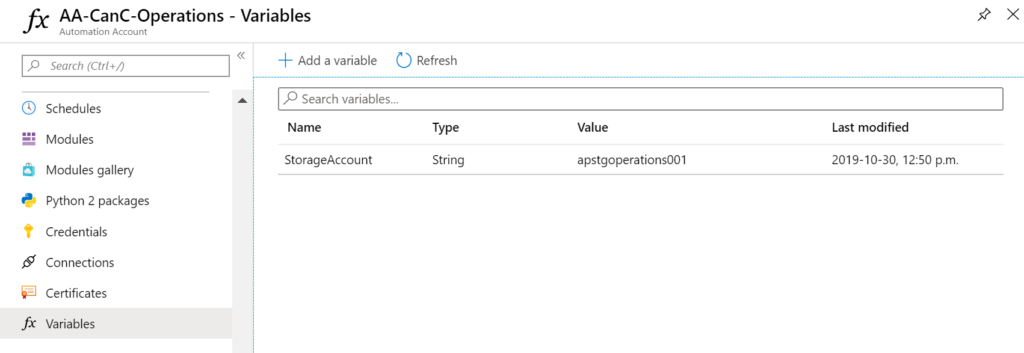
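Selecting Yes here is what later allows a Runbook to sign in without a password in the code: the Run As account is exposed to Runbooks as a connection asset, named AzureRunAsConnection by default. A sketch of the usual sign-in pattern follows. It assumes the default connection name, and it only runs inside an Automation sandbox, because `Get-AutomationConnection` is not available in a local PowerShell session.

```powershell
# Runs inside an Azure Automation Runbook only.
# Assumption: the Run As connection kept its default name.
$connection = Get-AutomationConnection -Name 'AzureRunAsConnection'

# Sign in as the Run As service principal using its certificate; no password is stored in the script.
Connect-AzAccount -ServicePrincipal `
    -Tenant $connection.TenantId `
    -ApplicationId $connection.ApplicationId `
    -CertificateThumbprint $connection.CertificateThumbprint | Out-Null

# In accounts that use a managed identity instead of a Run As account,
# the equivalent sign-in would be: Connect-AzAccount -Identity
```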

To save some time, we are going to pre-populate some of the configuration that we need in the Automation Account, namely the variables. For now, let’s create a single variable called StorageAccount. In the value, add TBD, and we will come back here to add the actual value later.
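For readers who prefer scripting this step, the variable can be created with the Az.Automation cmdlets. This is a sketch only: it assumes an authenticated local Az session and uses the Automation Account name AA-CanC-Operations, which is the name the script later in this article refers to.

```powershell
# Assumption: the Automation Account is named as in the script shown later in this article.
$account = @{
    ResourceGroupName     = 'AP-Operations'
    AutomationAccountName = 'AA-CanC-Operations'
}

# Create the placeholder variable. A Storage Account name is not a secret, so it is not encrypted.
New-AzAutomationVariable @account -Name 'StorageAccount' -Value 'TBD' -Encrypted $false

# Later, once the Storage Account exists, replace the placeholder.
# '<storage-account-name>' is a placeholder, not a real name.
# Set-AzAutomationVariable @account -Name 'StorageAccount' -Value '<storage-account-name>' -Encrypted $false
```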

We are going to use the new Az modules in our new Runbooks. We have an article describing all the ins and outs of this process, where you can find more information. For now, we are going to import the Az.Accounts, Az.Resources, Az.Automation, and Az.Storage modules into our Automation Account.
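The import can be done in the portal, or scripted roughly as shown here. This sketch makes a few assumptions: the Automation Account is named AA-CanC-Operations (the name used by the script later in this article), the latest module versions are pulled from the PowerShell Gallery package URL, and Az.Accounts goes first because the other Az modules depend on it.

```powershell
$account = @{
    ResourceGroupName     = 'AP-Operations'
    AutomationAccountName = 'AA-CanC-Operations'   # assumption: name taken from the script in this article
}

# Az.Accounts must finish importing before the modules that depend on it.
$modules    = 'Az.Accounts', 'Az.Resources', 'Az.Automation', 'Az.Storage'
$galleryUrl = 'https://www.powershellgallery.com/api/v2/package'

foreach ($module in $modules) {
    New-AzAutomationModule @account -Name $module -ContentLinkUri "$galleryUrl/$module" | Out-Null

    # The import is asynchronous, so poll until it reaches a final state.
    do {
        Start-Sleep -Seconds 15
        $state = (Get-AzAutomationModule @account -Name $module).ProvisioningState
    } while ($state -notin 'Succeeded', 'Failed')

    if ($state -eq 'Failed') { throw "Import of module $module failed." }
}
```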

Creating a Storage Account

A new Storage Account will be created to support the Automation Account. To create a new one, search for Storage Accounts in the Azure Portal, and click on Add.

The first step in the new Storage Account is to create a container for each Runbook. Since we are planning to create our first one, we will create a new container called vminventory.

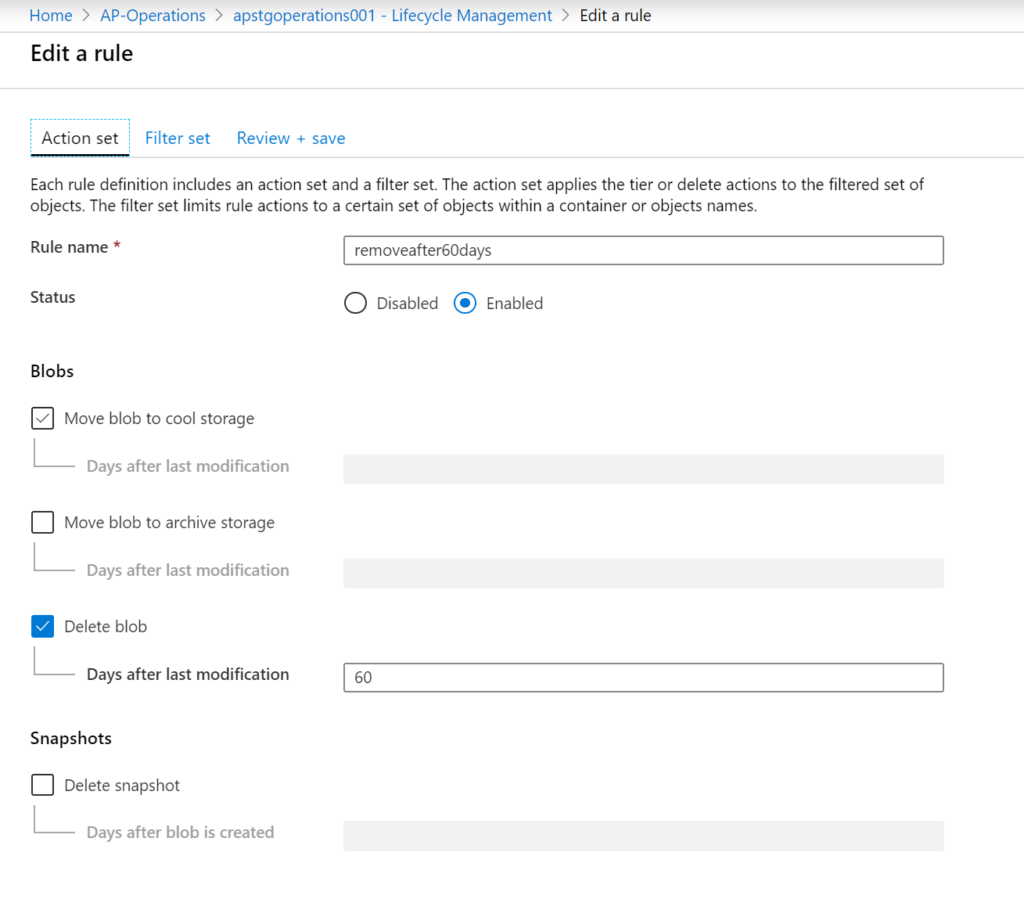
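As a scripted alternative to the portal steps, the Storage Account and its container could be created as follows. This is a sketch: the Storage Account name `stapoperations001` is hypothetical (names must be globally unique), and the SKU and kind are assumptions chosen so that lifecycle management is available.

```powershell
# Hypothetical name; Storage Account names must be globally unique, 3-24 lowercase letters and digits.
$storageAccountName = 'stapoperations001'

$storage = New-AzStorageAccount -ResourceGroupName 'AP-Operations' `
    -Name $storageAccountName `
    -Location 'canadacentral' `
    -SkuName 'Standard_LRS' `
    -Kind 'StorageV2'

# One private container per Runbook; this one is for the VM inventory report.
New-AzStorageContainer -Name 'vminventory' -Context $storage.Context -Permission Off
```

Once the account exists, its name is the value that replaces TBD in the StorageAccount variable of the Automation Account.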

This Storage Account is for our daily operations, and our goal is to keep the consumption low and keep the files in the Storage Account for 60 days. We could create a Runbook to validate the files and purge files older than 60 days. However, the Storage Account has such a feature built-in.

In the Lifecycle Management item, click on Add rule, assign a name, select the delete blob action, and define the desired number of days (in this article, 60).
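The same rule can be expressed with the Az.Storage management policy cmdlets. The sketch below assumes a hypothetical Storage Account name and rule name, and it adds a prefix filter so that only blobs in the vminventory container are affected, which is a narrower scope than a rule without filters. Note that `Set-AzStorageAccountManagementPolicy` replaces any policy that already exists on the account.

```powershell
# Delete block blobs 60 days after their last modification.
$action = Add-AzStorageAccountManagementPolicyAction -BaseBlobAction Delete -DaysAfterModificationGreaterThan 60

# Assumption: limit the rule to the vminventory container.
$filter = New-AzStorageAccountManagementPolicyFilter -PrefixMatch 'vminventory/'

# Hypothetical rule name.
$rule = New-AzStorageAccountManagementPolicyRule -Name 'PurgeAfter60Days' -Action $action -Filter $filter

# 'stapoperations001' is a hypothetical Storage Account name.
Set-AzStorageAccountManagementPolicy -ResourceGroupName 'AP-Operations' `
    -StorageAccountName 'stapoperations001' `
    -Rule $rule
```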

Running your Runbook and connecting all the dots

The complete code of the script is shared in my GitHub area. However, we are going to explore just the code that connects with the new features being introduced in this article, which are variables and Storage Accounts.

The first step is to retrieve the Storage Account name from the Automation Account variables. To retrieve any data, we must know the Automation Account name and the resource group. We are going to use variables in the script for those two pieces of information. In the third line, we retrieve the StorageAccount variable, whose value holds the Storage Account name.

    $vResourceGroupname = "AP-Operations"
    $vAutomationAccountName = "AA-CanC-Operations"
    $vaStorageAccount = Get-AzAutomationVariable -AutomationAccountName $vAutomationAccountName -Name "StorageAccount" -ResourceGroupName $vResourceGroupname

Now that we have the Storage Account name, we are going to define a validity period of one hour using $StartTime (the current time) and $EndTime (one hour from now). We retrieve the Storage Account into the $stgAccount variable and create a SAS token using all the information gathered up to this point. Finally, we use the $stgcontext variable to create the connection to the Storage Account using the SAS token.

    $StartTime = Get-Date
    $EndTime = $StartTime.AddHours(1.0)
    $stgAccount = Get-AzStorageAccount -Name $vaStorageAccount.Value -ResourceGroupName $vResourceGroupname
    $SASToken = New-AzStorageAccountSASToken -Service Blob -ResourceType Container,Object -Permission "racwdlup" -StartTime $StartTime -ExpiryTime $EndTime -Context $stgAccount.Context
    $stgcontext = New-AzStorageContext -StorageAccountName $stgAccount.StorageAccountName -SasToken $SASToken

To save a file into the Storage Account, we can run the following cmdlet, where -File takes $vFileName (the name of the file in the current file system), -Container takes the container name, and -Force overwrites any existing blob with the same name.

    $tmp = Set-AzStorageBlobContent -File $vFileName -Container vminventory -Context $stgcontext -Force
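The file behind $vFileName has to exist before it can be uploaded. The sketch below shows one way such a per-resource-group JSON report could be produced. It is not the author's script: the helper `ConvertTo-VmInventory`, the selected fields, and the sample data are all hypothetical, and in a real Runbook the input would come from `Get-AzVM`, which requires the Az.Compute module in addition to the modules imported earlier.

```powershell
# Groups VM objects by resource group and keeps a few fields per VM.
# The field selection is illustrative; a real report may record others.
function ConvertTo-VmInventory {
    param(
        [Parameter(Mandatory)]
        [object[]]$VirtualMachine
    )

    $VirtualMachine |
        Group-Object -Property ResourceGroupName |
        Sort-Object -Property Name |
        ForEach-Object {
            [pscustomobject]@{
                ResourceGroup   = $_.Name
                VirtualMachines = @(
                    $_.Group | ForEach-Object {
                        [pscustomobject]@{
                            Name     = $_.Name
                            Location = $_.Location
                            VmSize   = $_.HardwareProfile.VmSize
                        }
                    }
                )
            }
        }
}

# Hypothetical sample data standing in for the output of Get-AzVM.
# In a Runbook this would be: $vms = Get-AzVM   (requires Az.Compute)
$vms = @(
    [pscustomobject]@{ Name = 'vm-web-01'; ResourceGroupName = 'RG-WEB'; Location = 'canadacentral'; HardwareProfile = [pscustomobject]@{ VmSize = 'Standard_B2s' } }
    [pscustomobject]@{ Name = 'vm-web-02'; ResourceGroupName = 'RG-WEB'; Location = 'canadacentral'; HardwareProfile = [pscustomobject]@{ VmSize = 'Standard_B2s' } }
    [pscustomobject]@{ Name = 'vm-app-01'; ResourceGroupName = 'RG-APP'; Location = 'canadacentral'; HardwareProfile = [pscustomobject]@{ VmSize = 'Standard_D2s_v3' } }
)

$report = ConvertTo-VmInventory -VirtualMachine $vms

# Write the report to the temp folder; this path is what gets passed to -File when uploading.
$vFileName = Join-Path ([System.IO.Path]::GetTempPath()) 'vminventory-sample.json'
ConvertTo-Json -InputObject @($report) -Depth 5 | Set-Content -Path $vFileName -Encoding UTF8
```

Wrapping the report in `@(...)` keeps the JSON root an array even when only one resource group contains VMs, which makes the file easier to consume consistently.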

Validating the script in action

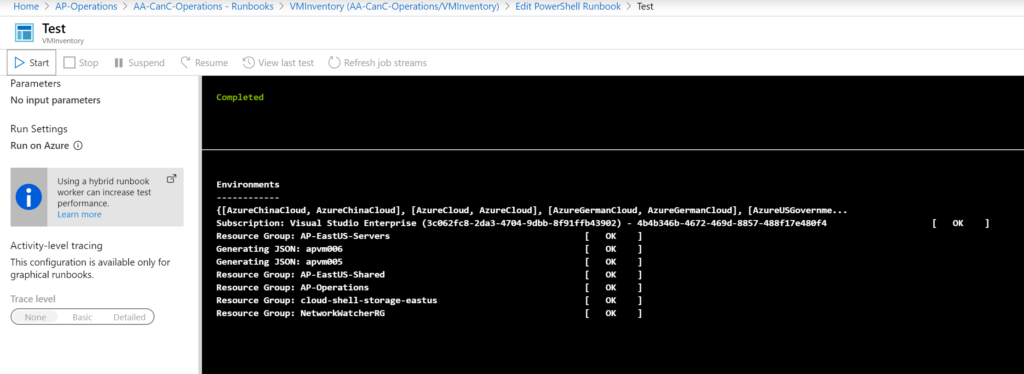

You can copy and paste the content of the script from the GitHub repository mentioned earlier. The first run should use the Test pane, and the results should be similar to the image in this section.

Since we are using the Storage Account as the repository for the output of our scripts, we should check it to validate that the files are being stored there. We can see the content of the JSON file using the Edit tab.
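The same check can be done from PowerShell. This sketch assumes that $stgcontext is still the SAS-based context created earlier in the script and that the token has not yet expired; the blob name in the commented download example is hypothetical.

```powershell
# List the five most recent blobs in the container (the SAS token includes the list permission).
Get-AzStorageBlob -Container 'vminventory' -Context $stgcontext |
    Sort-Object -Property LastModified -Descending |
    Select-Object -First 5 -Property Name, Length, LastModified

# Optionally download one report to inspect it locally.
# 'report.json' is a hypothetical blob name.
# Get-AzStorageBlobContent -Container 'vminventory' -Blob 'report.json' `
#     -Destination ([System.IO.Path]::GetTempPath()) -Context $stgcontext -Force
```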
