Mark Van Noy has had a lot of experience in the area of application virtualization. He’s an old hand with Microsoft App-V, one of the earlier solutions that made deploying applications easier, and he recently evaluated Liquidware’s FlexApp as a possible solution for the University of Colorado Boulder in the Greater Denver Area where he currently works as technical lead/architect in their central IT department. In this article, Mark describes his recent experience with trying out Turbo.net as an application virtualization solution and he walks us through his pilot deployment giving us his take on the pros and cons of using this solution. Let’s turn the floor over to Mark.

A look at Turbo application virtualization and streaming

Reviewing Turbo.net’s product has proven to be quite difficult. On the surface the product is simple to describe: It allows applications to be packaged into discrete containers that can then be streamed by a central server. However, Turbo is an application virtualization platform that does much, much more. The packaging can be performed with their GUI Studio tool or scripted with their custom Turbo Script language. In addition to being streamed from servers, applications can be run locally on Windows PCs. Turbo can also turn its containers into silent-install MSI files for deployment through tools such as MECM (the tool formerly known as SCCM, which was formerly known as SMS). There is a deep set of command-line tools that install with the client, making every setting completely scriptable, as well as other features that tie into the application virtualization role. With so many options available, there is a lot of functionality to examine before a review can have some semblance of being comprehensive.

The University of Colorado Boulder began looking at Turbo.net’s product late in the fall of 2019. After seeing what we felt was a compelling demo, we entered into a six-month pilot that was intended to place select students onto the environment for some portion of the Spring 2020 semester. About the time we were getting the pilot fully ready to launch, the COVID-19 pandemic hit, disrupting the campus in ways we could not have anticipated. This turned much of our pilot into an internal pilot, though we did get the product into some students’ hands before the end of the semester.

Turbo pros

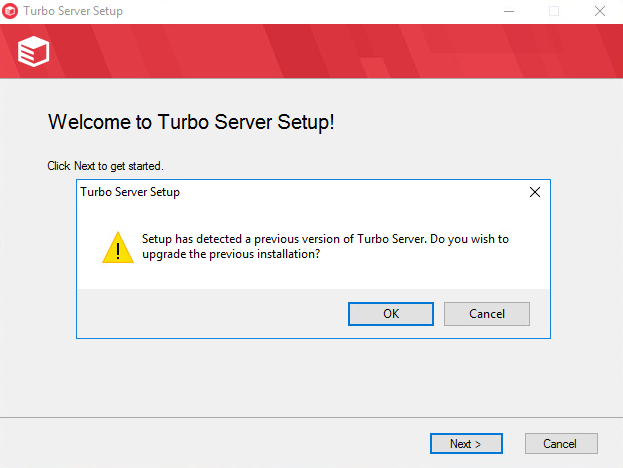

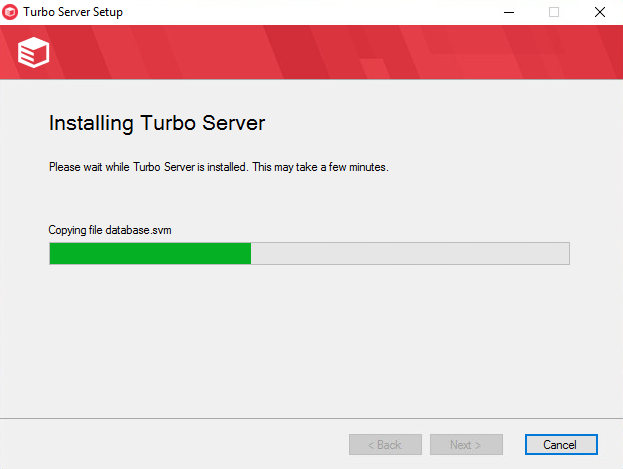

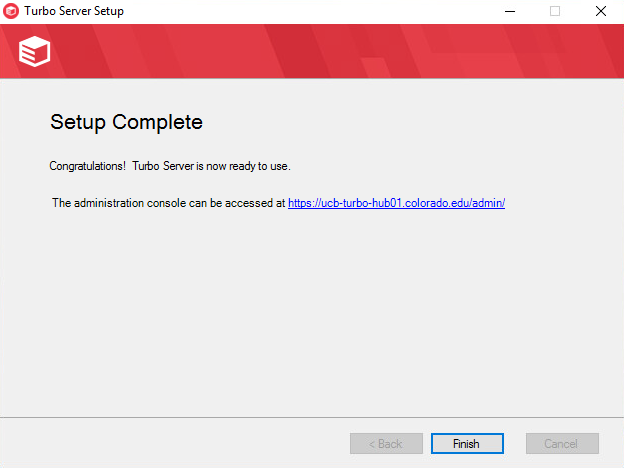

Upgrades may be an odd thing to lead with. However, for our pilot, I had the opportunity to apply a few upgrades. Without question, Turbo upgrades are the easiest I have ever performed in my entire IT career. Rather than relying on a special patching installer, every upgrade uses the same full installer as a fresh install. The installer detects the previous version and upgrades it. No settings were ever lost. The whole process was a click to accept the license agreement, a few minutes of progress bar, and a click on Finish. The servers did not even need rebooting. Done.

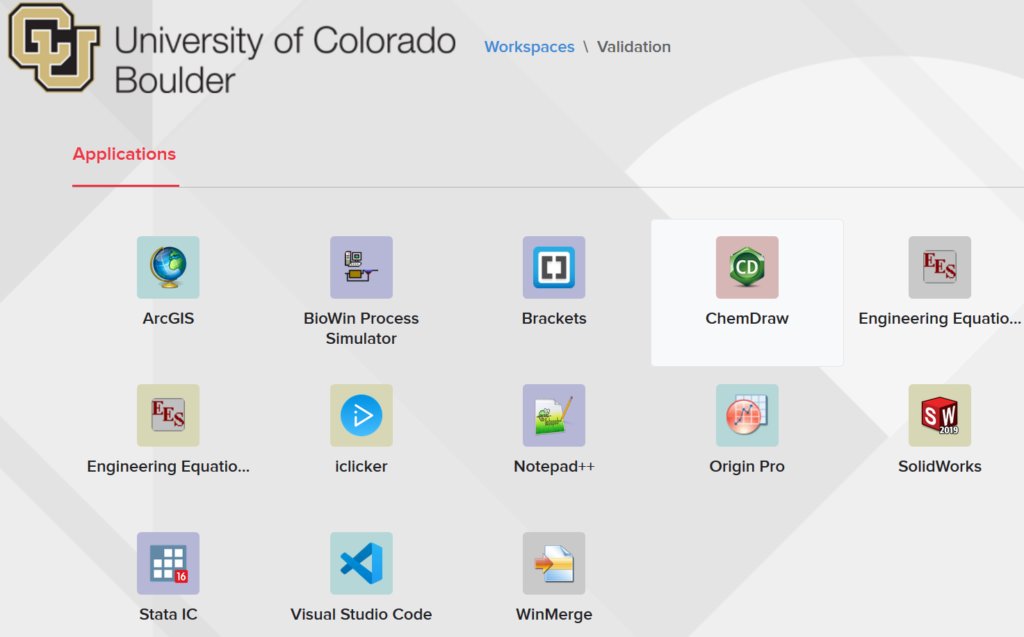

Application compatibility is very good with Turbo. The only thing holding it back from being extremely high is that it does not currently support packaging right-click context menus. I did get positive confirmation that support for right-click menus is in the works for a future release. We were able to successfully package several applications that are difficult enough to package that most MSI repackagers fail. Examples include SolidWorks, ArcGIS, AutoCAD, Adobe CC, SAS, and SPSS. Near the end of the pilot we had successfully packaged and deployed over 24 applications ourselves, plus another 8 that we pulled pre-packaged directly from the Turbo.net servers. We had some trouble with two applications due to their licensing mechanisms, and Turbo support was able to get us straightened out. Essentially, the HASP license tool installs some drivers that have to live outside of the Turbo application container because the containers run in user mode exclusively. The drivers can either be installed independently or be scripted to install from the container itself, though that will require the container to ask for elevation. Multiple applications installed services, which were captured without any additional work on our part. Some application packages were also extremely large, up to 25.5 GB, and still ran without a hitch.

There is a lot of flexibility in how Turbo applications are deployed and run. For managed computers, the Turbo client can be installed and then applications can be packaged into .msi files to be natively deployed in MECM using your existing distribution points. If Turbo is already being used for streaming, then the client could be installed and MECM could simply call turbo command-line statements to install from the Turbo hub. Alternatively, the Turbo hub, application, and portal servers can be installed so that the application servers host applications for end-users while the portal servers provide a web front end for consuming applications client-free, with a client, or running locally on Windows computers. The deployment options can be combined in whatever way is ideal for the specific customer’s site, all while maintaining a containerized application experience.

There is even more flexibility in how application containers work. When I first saw the Turbo command line, I commented that it reminded me a lot of Docker. There is even Turbo Script for automating application packaging. Applications live in containers based on images. Images can be layered together to create custom containers at runtime. This is all quite easy to do as well. Say there is a need for Firefox 50.1.0 with Silverlight 5.1 and Java JRE 8.191. The Turbo command `turbo installi Mozilla/firefox:50.1.0,Microsoft/Silverlight:5.1,oracle/jre:8.191` will install all the layers together into a container on the local computer while adding the Start Menu icons as well as any desktop icons that may have been in the package. The command could be sent out through MECM so that it targets a complete collection of computers. Various combinations can be mixed up without ever having to repackage anything. Best of all, the containers are not actually installing any software (that was done during packaging), so there are no reboots to interrupt end users.
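As a rough illustration of how such layered commands could be generated for different combinations, here is a minimal Python sketch. It only assembles the command line quoted above from a list of image names and versions; the helper names `layer_spec` and `installi_command` are hypothetical and are not part of any Turbo tooling.

```python
from typing import Iterable, List, Tuple

Layer = Tuple[str, str]  # (repository, version), e.g. ("oracle/jre", "8.191")


def layer_spec(layers: Iterable[Layer]) -> str:
    """Join (repository, version) pairs into the comma-separated layer list."""
    return ",".join(f"{repo}:{version}" for repo, version in layers)


def installi_command(layers: Iterable[Layer]) -> List[str]:
    """Build the argument list for the `turbo installi` command from the article."""
    return ["turbo", "installi", layer_spec(layers)]


# The combination used as the example in the text.
layers = [
    ("Mozilla/firefox", "50.1.0"),
    ("Microsoft/Silverlight", "5.1"),
    ("oracle/jre", "8.191"),
]

command = installi_command(layers)
print(" ".join(command))
```

The resulting string is what would be handed to a deployment tool such as MECM. Swapping a version in the list produces a different container combination without repackaging anything.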

Normally, if I have to contact tech support, I would consider that a negative mark. However, in the case of Turbo.net, I need to call out the unusually high quality of support. While we had some teething problems with our on-premises deployment, every problem we had was resolved quickly. We were also connected to the relevant experts at Turbo.net based on the problem we were experiencing rather than going through the all-too-common hurdles of technical support tiers. The behavior that made me happiest with support is that fixes were not one-off patches we needed to apply. Instead, any fixes we needed were fully integrated into their build system, so fixes for us are now fixes for all Turbo.net customers. Throughout our pilot, once a problem was fixed it stayed fixed, and because fixes are integrated into builds, I have greater confidence that those fixes will remain in place.

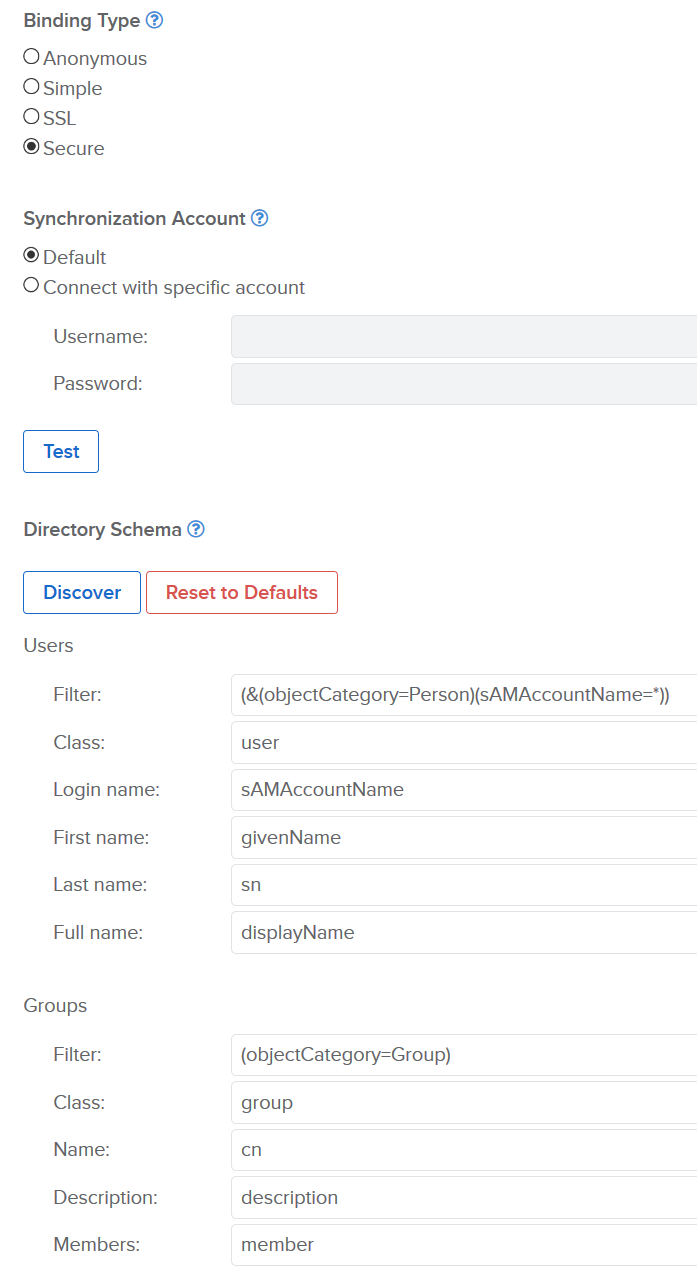

Turbo.net has a great deal of compatibility with identity systems. There is support for locally syncing accounts and groups against Microsoft’s Active Directory. Additionally, any LDAP source can be used to sync user and group memberships, with the caveat that the requisite schema for the LDAP system needs to be provided. Microsoft Azure is also a supported authentication method. Using Azure SSO is likely the most desirable method because it moves the authentication process to Microsoft rather than the Turbo.net hosted resources.

Entitling users to applications is quite straightforward. Applications are first added to Workspaces. The same application can be added to different Workspaces without any conflict or duplication of storage. A benefit of having multiple Workspaces with some set of the same applications published to them is that the same application may have different settings in each Workspace. For example, the ArcGIS package could be set to start the ArcMap application in one Workspace and ArcGlobe in another. Users, either Azure SSO-based or locally synced, are then either added directly to a Workspace or granted access through the groups that are added to it. The process is reminiscent of a simpler version of file or folder access in Windows.
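To make the entitlement model concrete, here is a small conceptual sketch in Python. It is not Turbo's API or data model; the `Workspace` class, its fields, and the sample Workspace, user, and group names are all hypothetical and only mirror the relationships described above: one application published to several Workspaces with different settings, and access granted to users directly or through groups.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set, Tuple


@dataclass
class Workspace:
    """Hypothetical model of a Workspace: applications plus who may use them."""
    name: str
    # application name -> settings that apply only inside this Workspace
    applications: Dict[str, Dict[str, str]] = field(default_factory=dict)
    users: Set[str] = field(default_factory=set)
    groups: Set[str] = field(default_factory=set)

    def is_entitled(self, user: str, user_groups: Iterable[str]) -> bool:
        # Access comes from direct membership or from any shared group.
        return user in self.users or bool(self.groups & set(user_groups))


def launchable(workspaces: List[Workspace], user: str,
               user_groups: Iterable[str]) -> List[Tuple[str, str, Dict[str, str]]]:
    """List (workspace, application, settings) entries the user may launch."""
    groups = list(user_groups)
    return [
        (ws.name, app, settings)
        for ws in workspaces
        if ws.is_entitled(user, groups)
        for app, settings in ws.applications.items()
    ]


# The same ArcGIS package published twice with different startup applications.
gis_lab = Workspace("gis-lab", {"ArcGIS": {"startup": "ArcMap"}}, users={"alice"})
geo_viz = Workspace("geo-viz", {"ArcGIS": {"startup": "ArcGlobe"}}, groups={"geography"})

print(launchable([gis_lab, geo_viz], "alice", []))
print(launchable([gis_lab, geo_viz], "bob", ["geography"]))
```

In this sketch the directly added user sees the ArcMap variant, while a member of the group sees the ArcGlobe variant of the same package.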

Turbo.net also provides a good amount of reporting by default in the management web console. For example, memory use, CPU, and session counts are provided for all the servers with the ability to export the graphs as SVG or PNG as well as look at the last hour, day, week, month, quarter, six months, or year. There are also built-in graphs showing which applications are being launched, how they are being launched, who is launching them, and when they were launched. Finally, there are built-in reports to let administrators know how much storage is being consumed by the applications provided as well as user usage and sessions.

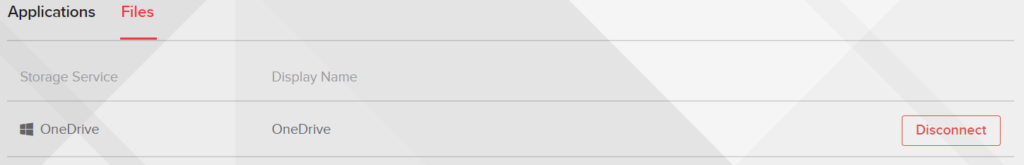

Turbo.net can leverage Microsoft’s Azure application framework to support connecting OneDrive or Dropbox to specific user logins. This allows a virtual T: drive that is directly connected to OneDrive or Dropbox to appear in applications. This functionality is opt-in, so users are required to connect their OneDrive or Dropbox account using the Turbo interface. Connecting cloud storage gives a consistent user experience for managing file access. Without cloud storage, end-users may access files on a network share through the HTML5 interface; file shares and files local to their computer through the client-based Windowed mode; or any method available to the host computer when running the application container on a Windows computer rather than streaming. Limited file browsing is a common challenge with streaming applications because they natively see the file system of the server they are hosted on. We tried using OneDrive because of our existing Office 365 subscription and found the cloud storage to work well.

Tunneling is another feature that, while still in preview, could be very useful. The tunneling feature is used to allow TCP and/or IP connections back to a host in the same environment as the Turbo.net servers. The most common use for this functionality is to allow connections back to software license servers that control concurrent user access without having to be on a VPN when an application container is running locally on a Windows computer rather than streaming from an Application server.

Turbo cons

Turbo’s flexibility, just like that of any other highly flexible application, brings complexity. Trying to jump right in and use all the various features of Turbo is both confusing and overwhelming. There is a lot to learn. Constraining the process to a single use case at a time is essential for building the required knowledge. Getting a handle on streaming, running on local PCs, command-line tools, layering, and deploying with MECM all at the same time will be frustrating at best.

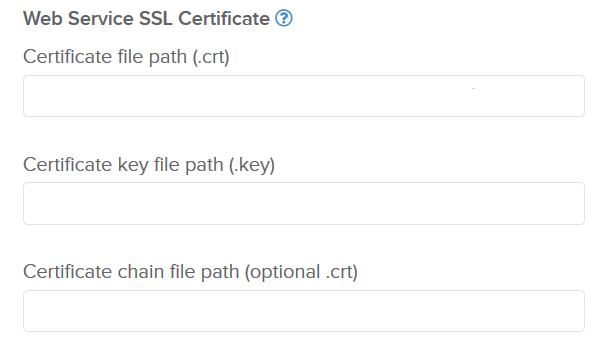

Adding SSL certificates to Turbo is a process that could use some smoothing. The certificates have to be locally available on each server despite the management of the certificates being controlled centrally by the Hub server’s web GUI. This is not hard to manage; it just took some discovery. For example, we installed OpenSSL on each Portal and Hub server to generate the private keys and the CSRs for our issuing authority. After we converted the certificates we received into proper X.509 PEM certificates and stripped the passphrase from the private key, the certificates were ready to use. Initially, I had thought to place the certificates in a secure CIFS location, but UNC paths are not supported. I then thought that placing them all in a secure folder on the Hub would work. When I discovered that only the Hub was getting its certificates, I realized I needed to place the certificates in a secure directory on each server. The only reason this is counter-intuitive is that server management is done through the central web management portal and not on the servers themselves, so I was left wondering which server the path referred to when prompted for it.
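The OpenSSL steps described above can be sketched roughly as follows. This Python snippet only builds and prints standard `openssl` command lines for each server; it does not run them. The host names, file names, and key size are hypothetical, and the conversion step assumes the issuing authority returned DER-encoded certificates, which the article does not specify.

```python
import shlex
from typing import List

# Hypothetical server names; substitute the real Hub and Portal hosts.
SERVERS = ["hub.example.edu", "portal1.example.edu"]


def key_and_csr_commands(host: str, key_bits: int = 2048) -> List[List[str]]:
    """Commands to create a passphrase-protected key and a CSR for one server."""
    key, csr = f"{host}.key", f"{host}.csr"
    return [
        ["openssl", "genrsa", "-aes256", "-out", key, str(key_bits)],
        ["openssl", "req", "-new", "-key", key, "-out", csr, "-subj", f"/CN={host}"],
    ]


def finish_commands(host: str, issued_cert: str) -> List[List[str]]:
    """Commands to convert the issued certificate to PEM and strip the key passphrase."""
    return [
        # Assumption: the issued certificate arrived DER-encoded.
        ["openssl", "x509", "-inform", "DER", "-in", issued_cert,
         "-outform", "PEM", "-out", f"{host}.pem"],
        # Writing the key back out without a cipher option removes the passphrase.
        ["openssl", "rsa", "-in", f"{host}.key", "-out", f"{host}.nopass.key"],
    ]


for host in SERVERS:
    for cmd in key_and_csr_commands(host) + finish_commands(host, f"{host}.cer"):
        print(shlex.join(cmd))
```

Whatever the exact commands, the point from the pilot still applies: the resulting PEM certificate and key need to be copied to a secure local directory on each server, since UNC paths are not supported.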

Turbo’s documentation is a weakness the company acknowledges needs work. When I was first told this, I was a bit surprised because the documentation I had been reading was quite good. The documentation is publicly available. As I moved deeper into our pilot, I found that the shortcomings are less about the quality of the existing documentation than about the documentation that still needs to be written. For example, there is good detail about how to layer application pieces from the command line, but there are no examples of how to do the same thing in the web console for streaming applications. Once layering is understood, it becomes fairly clear that the same layering syntax is simply entered into the Temporary Layers field on the Components subsection for the application in question. Some of the documentation gaps are likely due to the rapid introduction of new features, which is a welcome problem for sure. Ultimately, what the documentation gaps mean is that customers will likely need to put in some support tickets to get their questions answered until the documentation can catch up.

The Turbo Hub server is a single point of failure. As the name implies, the Hub is the root of all things Turbo. Check-ins from the Application and Portal servers are managed by the Hub server. Much of this communication is transmitted through the SQL database, so if the SQL database is running in a cluster mode of some form, such as Active/Active or Always On, then there is some additional resilience. If the Hub server is being used for authentication by syncing with an on-premises Active Directory, then a failure of the Hub server will prevent any logins from occurring. Having some form of redundancy in the Hub server component would really help in ensuring Turbo environments are always up. One way to alleviate this concern would be to build out multiple duplicate Turbo farm environments and then federate them to keep them in sync.

One criticism that was voiced to me during the pilot, and which I do not share, is that the user interface for customers is too similar to RDSH, Citrix, or VMware. All of these products are similarly simple, with what is essentially a list of applications presented to a user based on what they are entitled to run. I like the uncluttered interface. Since Turbo.net makes the API available to customers, it is possible to create a custom interface for end-users or to hook into an existing customer portal to maintain a consistent look and feel. Making a custom web-based interface using the Turbo.net API would likely take considerable time and effort, however.

Turbo: Effective and efficient app management

Turbo is a solution that IT departments should look into. "Package once, deploy anywhere" is a reality with Turbo’s containerization technology. Layering can save time by removing both duplication of effort and duplication of software across packages. Letting end-users run applications on their own Windows devices reduces the number of servers needed to stream applications. The containerization technology also allows different versions of an application to run side by side without conflict, and allows applications to appear to be installed without touching any Registry settings. Turbo allows for the effective and efficient management of applications in both IT-managed and BYOD scenarios.
