If our worst nightmare does come true and machines one day take over the world, it will be ironic that it all started with video games. The plot sounds almost too good to be true, but it is true. From the days when games were played in black and white to today’s ultra-HD titles and 8GB graphics cards, people have been working behind the scenes to make gaming what it is, and boy, did their ship come in. Imagine how a kid would feel if he woke up one day to find his dad doing tax returns on his PlayStation. That’s how gamers around the world feel right now, with data scientists running deep-learning algorithms on $3,000 gaming machines. Since deep learning boils down to simple arithmetic repeated on a massive scale, it turns out that gaming processors, which run thousands of such operations in parallel, handle it far better than a bunch of regular CPUs put together.

Humble beginnings

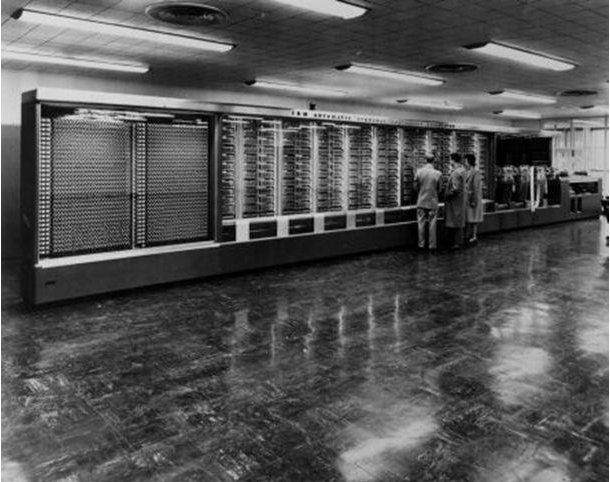

To better understand the concept of deep learning and the role that graphics processing units (GPUs) play, a short history lesson is in order, one that dates back about 60 years. Facial recognition is one benchmark that humans have set up to test the true capabilities of any AI system, since recognizing faces is a distinctly human trait. Go back to 1957, when Frank Rosenblatt invented the perceptron, an algorithm that takes multiple inputs, weighs them against a given criterion, and answers with a yes or a no, or rather an on or an off. The algorithm was implemented in one of the first image recognition machines, the “Mark I Perceptron.” The machine was connected to an actual camera that used a 20×20 grid of cadmium sulphide photocells to produce a 400-pixel image. What’s more, the machine stored its weights in physical hardware: potentiometers that electric motors adjusted as it learned.
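The weigh-and-decide step described above can be sketched in a few lines of Python. This is only an illustration, not Rosenblatt’s hardware design: the function name `perceptron` and the hand-picked weights and bias are assumptions chosen so that the unit behaves like a logical AND.

```python
def perceptron(inputs, weights, bias):
    """Return 1 ("on") if the weighted sum of the inputs is positive, else 0 ("off")."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


# Hypothetical, hand-picked values: the unit fires only when both inputs are on.
AND_WEIGHTS = [1.0, 1.0]
AND_BIAS = -1.5

# Evaluate the unit on all four combinations of two binary inputs.
and_outputs = [
    perceptron([a, b], AND_WEIGHTS, AND_BIAS)
    for a in (0, 1)
    for b in (0, 1)
]
print(and_outputs)
```

In the Mark I, the 400 photocell readings played the role of `inputs`, and learning meant nudging the weights after each mistake instead of setting them by hand.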

Rosenblatt’s approach came under heavy fire in 1969 from an influential book, “Perceptrons,” by Marvin Minsky and Seymour Papert, and he died in a boating accident two years later. The book highlighted the limitations of single-layer perceptrons: they had only a narrow range of applications, and they failed at a task as simple as the XOR function, which outputs 1 only when exactly one of its two inputs is 1.
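The XOR limitation is easy to reproduce. The sketch below trains a single two-input unit with the classic perceptron learning rule, once on AND and once on XOR. The function names, the epoch count, and the learning rate are assumptions made for illustration; they are not taken from the book.

```python
def predict(weights, bias, x):
    """Single-layer perceptron: fire (1) if the weighted sum is positive."""
    return 1 if weights[0] * x[0] + weights[1] * x[1] + bias > 0 else 0


def train_perceptron(samples, epochs=100, lr=1.0):
    """Perceptron learning rule: adjust the weights only after a wrong answer."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            weights[0] += lr * error * x[0]
            weights[1] += lr * error * x[1]
            bias += lr * error
    return weights, bias


def accuracy(samples, weights, bias):
    """Fraction of samples the trained unit classifies correctly."""
    correct = sum(predict(weights, bias, x) == target for x, target in samples)
    return correct / len(samples)


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_acc = accuracy(AND_DATA, *train_perceptron(AND_DATA))
xor_acc = accuracy(XOR_DATA, *train_perceptron(XOR_DATA))
print("AND accuracy:", and_acc)
print("XOR accuracy:", xor_acc)
```

AND is learned perfectly, while XOR never gets all four cases right however long training runs: no single straight line separates its "on" cases from its "off" cases, so a lone unit can classify at most three of the four.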

This devastating blow to connectionism, followed by Rosenblatt’s death in 1971, caused a blackout in the field for more than a decade, until the book “Parallel Distributed Processing” was published in 1986. Its ideas still sit at the core of deep learning today. The book pointed out that a multilayer, nonlinear neural network with hidden layers can represent a much wider range of functions, and this is what our modern-day neural networks are based on.
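A tiny example shows why a hidden layer matters. The sketch below wires three threshold units into a two-layer network that computes XOR, the very function a single perceptron cannot. The weights are hand-picked assumptions for illustration; the networks in “Parallel Distributed Processing” learned their weights through training with smooth activation functions, which this sketch does not attempt.

```python
def step(z):
    """Threshold activation: 1 if the input is positive, else 0."""
    return 1 if z > 0 else 0


def unit(inputs, weights, bias):
    """One neuron: weighted sum of the inputs followed by the step function."""
    return step(sum(x * w for x, w in zip(inputs, weights)) + bias)


def xor_network(a, b):
    """Two hidden units (OR and NAND) feed one output unit (AND)."""
    h_or = unit([a, b], [1, 1], -0.5)     # fires if at least one input is on
    h_nand = unit([a, b], [-1, -1], 1.5)  # fires unless both inputs are on
    return unit([h_or, h_nand], [1, 1], -1.5)  # fires only if both hidden units fire


xor_outputs = [xor_network(a, b) for a in (0, 1) for b in (0, 1)]
print(xor_outputs)
```

Each hidden unit draws its own line through the input space, and the output unit combines the two, which is something no single-layer arrangement can do.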

The winds of winter

So if the technology has been around since 1957, how come it has taken 60 years to come up with machines that can learn for themselves? The answer lies in video games, as strange as that may seem. Even after “Parallel Distributed Processing” was published, the field of AI suffered serious funding cuts, and most of the governments and organizations that had been backing it decided that AI was not the next big thing.

Though the main problem at the time was raw computational power, it was also a case of inflated expectations, with people predicting that computers would be able to carry on actual conversations by 2001. Everything seemed to be happening too quickly, and people didn’t see that the technology needed time to develop and mature. Japan’s massive fifth-generation project, a decade-long attempt to build an “epoch-making computer,” did not meet its ambitious goals, and with it died the interest of almost all other government organizations as well.

Business as usual for the gaming industry

Many people call the period from 1987 to 1993 the AI winter because of the lack of progress. The gaming industry had no such problem: by 1990 it was already worth $4.7 billion and growing. No one was paying money to build AI systems, but everyone was paying money to build gaming systems. Better and faster graphics were all you needed to blow away the competition, and in 1993 a group of electrical engineers founded Nvidia to do just that, making specialized chips for faster and more realistic video games. Then, in 1999, Nvidia released the GeForce 256, billed as the first commercially available GPU capable of 3D rendering, video acceleration, and integrated GUI acceleration.

The Big Bang

Now, though the concept of neural networks wasn’t new, the amount of data needed to train one properly just didn’t exist. Combined with the lack of processing power available at the time, this meant AI was essentially written off. It wasn’t until almost half a century later that big data came into the picture, and we now have a seemingly limitless supply of data to train neural networks like the ones first built by Rosenblatt. Though there had been behind-the-scenes breakthroughs for a number of years, it wasn’t until the 2012 ImageNet image recognition competition that the Big Bang of AI occurred. At that point in time, Nvidia CEO Jen-Hsun Huang certainly had no clue that his company would soon be more important to AI than Microsoft, Intel, or Facebook.

AI acceleration

It’s funny that the breakthrough came in image recognition, 55 years after the first attempt was made with cameras and weights. This time it came from Alex Krizhevsky, who created a neural network called AlexNet, trained it on millions of example images, and used two Nvidia GeForce GTX 580 cards to carry out the trillions upon trillions of mathematical calculations required. In doing so he not only won the competition by a considerable margin, but also outperformed Google’s 16,000-processor cluster with just one system. Krizhevsky now works for Google and is at the core of its deep learning program.

GPUs made the AI wave possible, and it’s a big wave. Riding on top of that wave is Nvidia. Though the market for PCs is declining, the gaming market continues to grow at a steady rate. Artificial intelligence has gotten to where it is mostly because its researchers realized that many of the problems they faced had already been worked out in other fields. A lot of its mathematical machinery has been borrowed from mathematics, economics, and operations research. The hardware, as it turns out, has been borrowed from the gaming industry, and GPUs are now being produced specifically for deep learning applications.

The AI race is on

With the AI market projected to hit $36 billion by 2025, things are just warming up. Nvidia initially spent $10 million building its first graphics chip, the NV1; now its stock is worth more than $60 billion. Though tech giants like Microsoft and Google are buying large quantities of Nvidia chips for their datacenters, they have also started work on building their own chips. While Nvidia is at the center of one of the biggest technology booms in history and owns a 70 percent share of the GPU market, that doesn’t mean it is invincible. With AMD’s launch of the Radeon Instinct line of GPUs designed specifically for deep learning, and Intel’s acquisition of Nervana Systems, the message is loud and clear: GPUs are the future of AI.

It’s a case of keeping your friends close and your enemies closer: while Microsoft, IBM, and almost everyone else seem to be teaming up with Nvidia, they all have side projects to develop their own chips in full swing, so it will be interesting to see how things unfold. Intel isn’t far behind, either, and has promised to increase deep learning efficiency 100 times in the next three years. What’s clear is that this has given rise to a new breed of GPUs, now called AI accelerators. Since gaming and AI seem to be symbiotic, I wonder how long it will be before someone tries to play “Call Of Duty” on Nvidia’s DGX-1, the world’s first AI supercomputer in a box.