Hollywood stars from Will Smith to Arnold Schwarzenegger have appeared in a cool AI flick. What these films have in common is the urge to show how helpless we will be if the machines one day take over. The problem with this theory, put simply, is that machines think only inside the box. Thinking outside the box and coming up with unique and ingenious solutions to problems is what makes us human beings intelligent. A good example is IBM’s Deep Blue chess-playing computer, which beat then-World Chess Champion Garry Kasparov in 1997. Chess is an “in-the-box” game. There are only so many possible moves and a limited number of pieces, each of which moves only in a certain way. Life isn’t chess, and without human beings to set it up and program it, that machine from IBM could never have gotten there in the first place.

AI’s edge over humans

Where AI really thrives, as in the Garry Kasparov example, is when it is used in conjunction with human beings. In other words, the programmers used the IBM Deep Blue computer to beat a legendary chess player. The advantage the system had over Kasparov was that it wasn’t subconsciously thinking about everything a human being thinks about, like what his wife asked him to pick up on the way home or whether he has to collect his daughter from school. The advantage machines have over us is their ability to stay focused and do a job over and over again without getting bored. They don’t get tired, sleepy, or thirsty, and these are the strengths that enterprises can put to use.

AI and the use of algorithms

An algorithm, like the one used in Deep Blue, is a set of instructions, often a complex one, expressed as code. The code is what makes these machines run: by following the instructions and evaluating data received from sensors and input devices, they come to decisions. However complex the problems they solve, though, algorithms on their own still aren’t AI. An algorithm is a fixed set of instructions like any other software program, and it cannot mimic human intelligence, which is the whole point of AI.

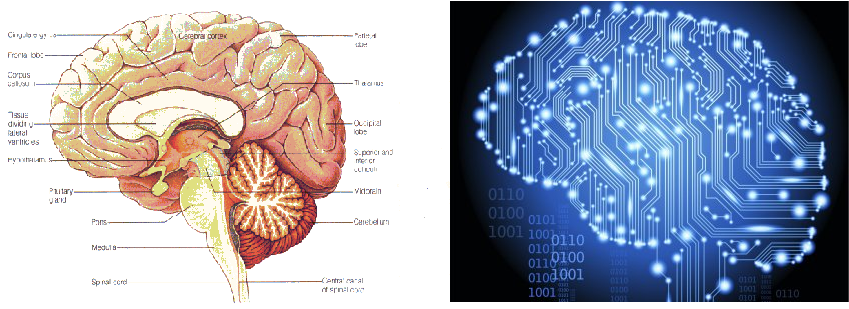

AI and the human brain

To actually mirror the human brain at ground level, you can’t rely on a piece of code that runs from beginning to end; you need to mimic millions of neurons that are processing information in parallel. There is a loose similarity here to software development styles: a linear algorithm resembles the waterfall approach of doing things one at a time, while the biological brain is closer to the DevOps approach, where many things happen at once. This is why it took 2,000 CPUs working in parallel to first figure out the difference between cats and humans in YouTube videos, which is often looked at as the first major breakthrough.
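To make the idea of neurons working in parallel a little more concrete, here is a minimal Python sketch. It is an illustration only, not how any production system is built: the function names, the tiny weights, and the use of a thread pool are all assumptions made for the example. The point it shows is that each artificial neuron in a layer needs only the shared inputs and its own weights, so the neurons can be evaluated one after another or all at once and give the same answer.

```python
from concurrent.futures import ThreadPoolExecutor


def neuron_output(weights, inputs):
    """One artificial neuron: weighted sum of inputs, clipped at zero (ReLU)."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return max(0.0, total)


def layer_sequential(layer_weights, inputs):
    """Evaluate the neurons one at a time, like a linear program."""
    return [neuron_output(w, inputs) for w in layer_weights]


def layer_parallel(layer_weights, inputs, workers=4):
    """Evaluate the neurons concurrently; no neuron depends on another."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as layer_weights.
        return list(pool.map(lambda w: neuron_output(w, inputs), layer_weights))


if __name__ == "__main__":
    # Hypothetical layer with three neurons and two inputs.
    layer = [[1.0, -1.0], [0.5, 0.5], [-2.0, 0.0]]
    signal = [2.0, 1.0]
    print(layer_sequential(layer, signal))
    print(layer_parallel(layer, signal))
```

In standard Python, threads will not make this arithmetic any faster; the sketch only demonstrates that the work splits cleanly, which is the property that large banks of processors exploit.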

From CPUs to GPUs

The concept of AI is over 50 years old, so what took us so long? For a software program to mimic a human mind requires huge processing power that just wasn’t available at the time. Even today, the most advanced processors from Intel have their limitations due to the heat and resistance capacities of the semiconductors they use. A CPU is often playing handicapped in the sense that it needs to worry about a lot of other things like the GUI, the OS, the network, security, and the like. Running a simple program that watches YouTube videos and distinguishes cats from humans would take about 2,000 CPUs running in parallel, because they’re working on 2,000 other things as well and not just watching videos. That kind of power just isn’t what your average developer has on hand; hence the long wait for a better option.

That better option came when the person working on that cat video project at Google decided, “Why not get the graphics processing unit to crunch the numbers instead?” This proved to be a stroke of genius, as a GPU is made with one thing in mind, and that’s to convert zeros and ones into a viewable display. As it turns out, these specialized processors can do simple number crunching a lot more efficiently than CPUs, and it took only 64 GPUs to do the job that would otherwise have taken 2,000 CPUs. This has led to the development of “AI accelerators” rather than graphics accelerators, although they’re basically the same thing minus the hardware that’s specifically graphical in nature, for example shape and texture analysis.

‘Deep Learning’

GPUs made it a lot easier and more cost efficient to build artificial neural networks, where nodes, or “neurodes,” replace the neurons of the human brain. Like any human brain, an artificial neural network requires constant “teaching” and instruction and is ever developing. A great example is the movie “Chappie,” in which the robot, though completely built, has the mind of a newborn baby and not only needs time to learn how to read and how his environment works, but also needs to be “taught” things. This learning process is where AI distinguishes itself from a fixed algorithm, and when it happens in many-layered neural networks it is referred to as Deep Learning. For example, a system that predicts online user behavior may be only about 10 percent accurate when it’s launched. However, with constant feedback and input, along with analysis of data from hundreds of millions of users, it could get to a point where it rarely gets it wrong.
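The idea of a predictor that starts out poor and improves with feedback can be sketched in a few lines of Python. This is a deliberately tiny example, assuming a single artificial neuron (a perceptron) rather than a deep network, and the visitor data is made up: `make_visitors`, `train_online`, and the two features (pages viewed and minutes on site) are hypothetical names chosen for illustration.

```python
import random


def make_visitors(count, seed=0):
    """Made-up data: browsers have low activity (label 0), buyers high (label 1)."""
    rng = random.Random(seed)
    visitors = []
    for i in range(count):
        label = i % 2  # alternate browsers and buyers
        low, high = (3.5, 4.5) if label == 1 else (0.5, 1.5)
        features = [rng.uniform(low, high), rng.uniform(low, high)]
        visitors.append((features, label))
    return visitors


def train_online(examples, epochs=50, learning_rate=0.1):
    """Predict, compare with the real outcome, and adjust only after a mistake."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    accuracy_history = []
    for _ in range(epochs):
        correct = 0
        for features, label in examples:
            score = sum(w * x for w, x in zip(weights, features)) + bias
            prediction = 1 if score > 0 else 0
            if prediction == label:
                correct += 1
            else:
                # Feedback step: nudge the weights toward the right answer.
                error = label - prediction
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, features)]
                bias += learning_rate * error
        accuracy_history.append(correct / len(examples))
    return weights, bias, accuracy_history


if __name__ == "__main__":
    _, _, history = train_online(make_visitors(40))
    print("first pass accuracy:", history[0])
    print("last pass accuracy:", history[-1])
```

Real systems learn from far messier data and never reach perfection, but the loop is the same in spirit: every wrong guess becomes a correction.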

Deep Learning is also behind the Differentiable Neural Computer built by DeepMind, the artificial intelligence company owned by Google’s parent company, Alphabet. What it does is pair an artificial neural network with the kind of large external memory found in conventional computers. The hybrid system can work things out on its own by analyzing data and optimizing how it uses its memory, and, at the same time, pull out the correct information. A good example where they’ve used this is to discover people’s ancestries. After being given certain information about relationships, the system was able to figure out the rest of the family tree on its own. Another example given by the researchers applies to public transport. By understanding the basics of one system, for example the London Underground, the Differentiable Neural Computer was able to apply what it had learned to other transit systems, in this instance the New York City Subway, and figure out complex relations and routes without any help.

Artificial neural networks like these have been used in business since as long ago as 1987, when the Security Pacific National Bank set up a task force to curb debit card fraud. Artificial intelligence is used frequently in the financial sector to predict market fluctuations, and apps like Kasisto and Moneystream have been using AI for a while. The Royal Bank of Scotland earlier this year launched “Luvo,” a natural language AI bot that will answer banking questions and perform simple tasks like money transfers. The Royal Free Hospital in North London has announced a partnership with Google’s DeepMind to build a mobile app that will monitor patients with acute kidney problems.

Helpful accessory

AI devices are able to make decisions based on calculations after considering all possible permutations and combinations. When a human being is given such a task, instead of analyzing every bit of data, they might take a shortcut and go with an average, or, if they’ve got a headache, just “go with their gut.” Human beings aren’t machines, and though we can come up with ridiculous solutions to problems that actually work, we are slaves to our minds and we suffer when given repetitive tasks. It is in these situations that companies can use machines not only to improve their efficiency, but also to improve the lives of their employees.

One place where AI really outshines humans is making predictions based on facts and analysis of raw data. Thanks to the computational power of modern hardware, AI algorithms can successfully predict market patterns, user behaviors, and customer interests. In an office that deals with customer service, a good AI system working in conjunction with humans could categorize queries by urgency and then assign them to the right people. Another good example would be collecting and analyzing customer feedback on products or services, where a well-programmed AI system could scour the Internet and analyze social media responses. Case in point: the popular travel portal Expedia, which uses machine learning algorithms to improve recommendations for customers. Another great example is the French multinational energy firm Engie, which uses a combination of drones and AI image processing to inspect and monitor its infrastructure.
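The customer service scenario above, sorting queries by urgency before handing them to people, can be sketched as follows. This is a toy under stated assumptions: the keyword table `URGENCY_KEYWORDS` and the functions `urgency_score` and `triage` are hypothetical, and a simple keyword count stands in for the trained model a real AI system would use.

```python
import heapq
from itertools import count

# Hypothetical keyword weights; a real system would learn these from data.
URGENCY_KEYWORDS = {"outage": 3, "fraud": 3, "refund": 2, "late": 1}


def urgency_score(text):
    """Stand-in for a learned model: add up the weights of known keywords."""
    words = (word.strip(".,!?") for word in text.lower().split())
    return sum(URGENCY_KEYWORDS.get(word, 0) for word in words)


def triage(queries):
    """Return the queries ordered from most to least urgent."""
    arrival = count()  # keeps first-come order among equally urgent queries
    heap = []
    for query in queries:
        # heapq is a min-heap, so negate the score to pop the highest first.
        heapq.heappush(heap, (-urgency_score(query), next(arrival), query))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


if __name__ == "__main__":
    inbox = [
        "Where is my invoice?",
        "Possible fraud on my card!",
        "My delivery is late",
    ]
    for query in triage(inbox):
        print(query)
```

The human agents still answer the queries; the machine only decides which one lands on a desk first.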

Winning combination

With human beings on hand to correct and update the AI algorithms, the productivity of this human-machine combination far surpasses that of a team made up of human beings alone. Any effort to completely replace humans with machines, however, seems impossible.