Cloud storage is a big deal in Microsoft Azure. We use Storage Accounts for our VMs, databases, and applications, as a general repository of data, and as a place to store logs, temporary data, and deployments, just to mention a few.

Deploying a Storage Account is extremely simple, and we can do it using any of the available interfaces that interact with the REST API, including Azure Portal, PowerShell, and Azure CLI.

The Azure Storage architecture deserves at least one article of its own just to explain the storage stamp, which is made up of three layers: Front-End, Partition Layer, and Stream Layer. Storage stamps are combined with the Location Service and DNS, and that is a simple summary of the architecture behind Storage Accounts.

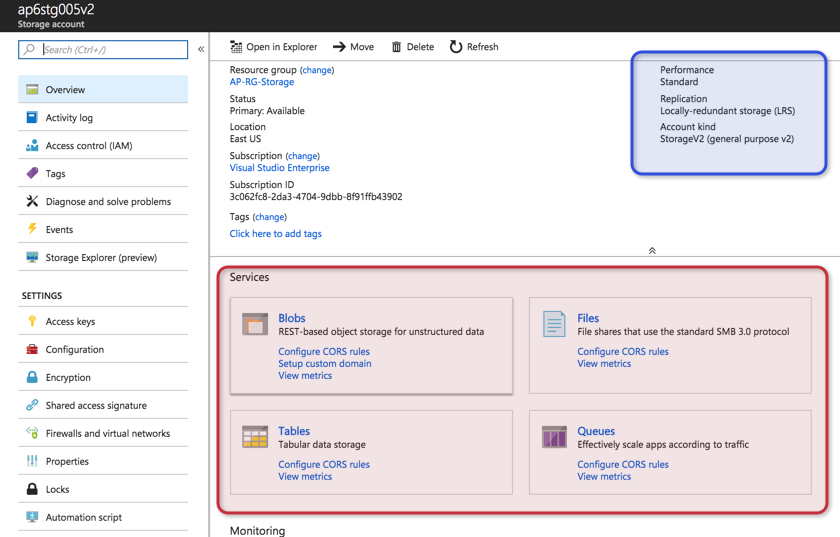

Azure Storage is easy to use and powerful, and it offers several services: Blobs, Files, Queues, and Tables. Each of those services is reachable through an endpoint address that is based on the name of the Storage Account, as follows:

- Blob: http://<Storage-Account-Name>.blob.core.windows.net

- Table: http://<Storage-Account-Name>.table.core.windows.net

- Queue: http://<Storage-Account-Name>.queue.core.windows.net

- File: http://<Storage-Account-Name>.file.core.windows.net
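The naming pattern in the list above can be sketched in a few lines of Python. This is only an illustration: the `storage_endpoints` helper and the sample account name are hypothetical, and the `scheme` parameter defaults to HTTPS as an assumption (the list above shows plain HTTP). The name check reflects the Storage Account naming rule of 3 to 24 lowercase letters and digits.

```python
import re
from typing import Dict

# The four services described in this article.
SERVICES = ("blob", "table", "queue", "file")

# Storage Account names: 3-24 characters, lowercase letters and digits only.
_ACCOUNT_NAME = re.compile(r"[a-z0-9]{3,24}")


def storage_endpoints(account_name: str, scheme: str = "https") -> Dict[str, str]:
    """Return the default endpoint URL of each service for a Storage Account.

    Hypothetical helper for illustration; it only builds strings and does
    not contact Azure.
    """
    if not _ACCOUNT_NAME.fullmatch(account_name):
        raise ValueError(
            "Storage Account names must be 3-24 lowercase letters or digits"
        )
    return {
        service: f"{scheme}://{account_name}.{service}.core.windows.net"
        for service in SERVICES
    }


if __name__ == "__main__":
    # "mystorageaccount" is a made-up name used only for the demo.
    for service, url in storage_endpoints("mystorageaccount").items():
        print(f"{service:<6}{url}")
```

Because every endpoint is derived from the account name, that name has to be unique across Azure, which is why the portal validates it during creation.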

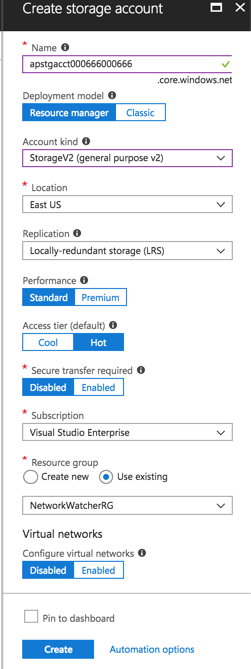

When creating a Storage Account using Azure Portal, we are faced with several questions that require a decision, such as the type of Storage Account, replication, performance, and access tier. We can also define the security by allowing access to a Storage Account only from a specific group of virtual networks in Azure. In this article, we will cover some of those options and explain them in simple terms.

Types of Storage Accounts

The first decision to be made when creating Storage Accounts is the type. We have three types available: General Purpose (v1), General Purpose (v2), and Blob Storage.

General Purpose (v1) has been available since the classic deployment model, which has been replaced by ARM (Azure Resource Manager) in recent years. It does not have all the new features, and its cost, in general, is higher than that of its successor, General Purpose (v2).

General Purpose (v2) is the new kid on the block. It has all the capabilities of General Purpose (v1), and all new features are being built for this version. With General Purpose (v2), we can define the access tier (hot or cool) at the account level.

Using either General Purpose v1 or v2, the new Storage Account will have all services available: Blobs, Files, Tables, and Queues. In the Overview page, we can also check the current performance, replication, and type of account as depicted in the image below.

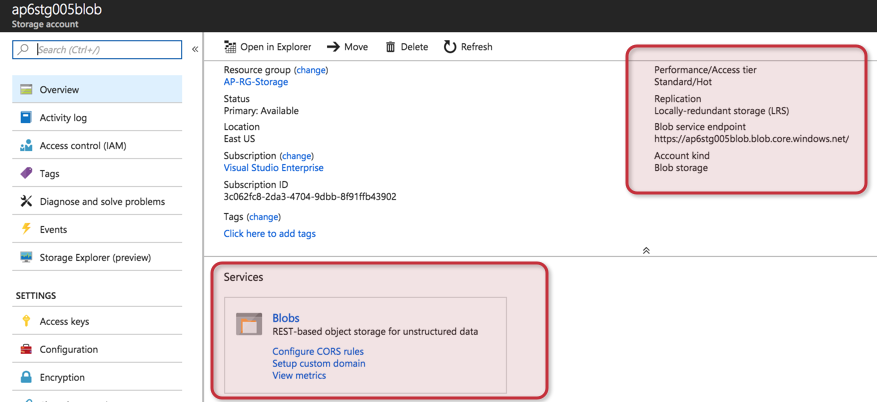

Last but not least is the Blob Storage account. It supports only the Blob service, and it has the same features as block blobs in a General Purpose (v2) Storage Account. We can see the difference in the Overview blade between a Blob Storage account and the general purpose accounts (pictured above).

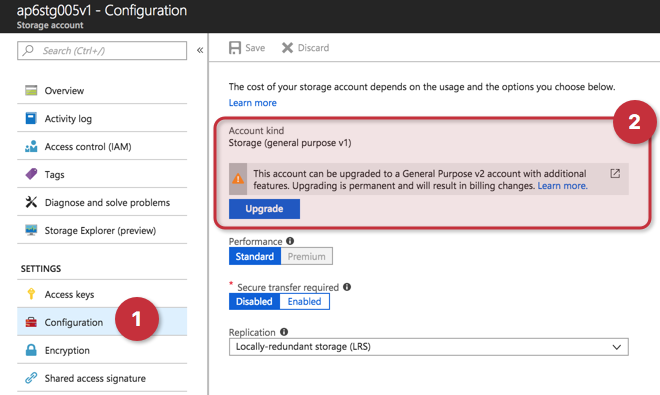

“Why use General Purpose (v1)?” I hear you asking. Well, if you want to take advantage of all the new features, General Purpose (v2) is definitely the way to go. But if you are still using the Classic Model, General Purpose (v1) supports that environment, and in some special cases, such as high transaction volumes, General Purpose (v1) can be more cost-effective.

If you are still using General Purpose (v1), there are several methods to transition to General Purpose (v2). The easiest one is using Azure Portal: Click on Configuration within the Storage Account and click on Upgrade. If you want to upgrade using PowerShell, the following cmdlet can be used:

Set-AzureRmStorageAccount -ResourceGroupName <Resource-Group-Name> -AccountName <Storage-Account-Name> -UpgradeToStorageV2

Replication

Azure Storage provides durability and high availability for all data stored in the platform. By default, data is always replicated at least three times when using Azure Storage. That is a requirement to maintain the service level agreement provided by Microsoft Azure.

- LRS (Locally Redundant Storage): Three copies of the data are kept within a single datacenter in the same region, so a failure of a rack or server will not impact the data.

- ZRS (Zone Redundant Storage): Each Azure region is comprised of more than one datacenter. Using ZRS, three copies of the data will be spread among zones that have independent power, cooling, and networking capabilities.

- GRS (Geo-Redundant Storage): Besides your three regular copies, another set of three copies is stored in a second region within the same geopolitical area. It uses asynchronous replication, and the failover between regions is performed by Microsoft. The customer doesn’t have control over it.

- RA-GRS (Read-Access Geo-Redundant Storage): This one is GRS with a twist. The cloud administrator can have read-only access to the data in the second region. To reach that data, it is just a matter of adding -secondary to the account name in the endpoint and using the same access keys.
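The -secondary convention used by RA-GRS can be sketched as a small URL rewrite. The `secondary_endpoint` helper and the sample URL are hypothetical and exist only to show where the suffix goes. The function does not check whether the account actually has RA-GRS enabled.

```python
from urllib.parse import urlsplit, urlunsplit


def secondary_endpoint(primary_url: str) -> str:
    """Rewrite a primary endpoint URL so it points at the read-only secondary.

    Hypothetical helper: it appends "-secondary" to the account name, which
    is the first label of the host name, and leaves the rest of the URL alone.
    """
    parts = urlsplit(primary_url)
    account, dot, rest = parts.netloc.partition(".")
    if not account or not dot:
        raise ValueError(f"not a Storage Account endpoint: {primary_url!r}")
    host = f"{account}-secondary.{rest}"
    return urlunsplit((parts.scheme, host, parts.path, parts.query, parts.fragment))


if __name__ == "__main__":
    # Made-up account, container, and blob names.
    print(secondary_endpoint(
        "https://mystorageaccount.blob.core.windows.net/logs/app.log"
    ))
```

Since replication to the second region is asynchronous, reads from the secondary endpoint may lag behind the most recent writes to the primary.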

When using Premium Storage, the only replication available is LRS. In terms of price, LRS is the cheapest replication option and RA-GRS is the most expensive.

Performance

When using general purpose Storage Accounts, we have the option to decide between Standard and Premium storage. The Standard is the default option and is suitable for the vast majority of workloads. It uses magnetic media to store the data.

The Premium offering provides high-performance storage backed by SSDs, which are much faster than magnetic media. It is recommended for VM workloads (disks) and high-performance applications.
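Performance and replication are chosen together, and the rule from the Replication section (Premium supports only LRS) is easy to encode. The sketch below is a minimal illustration: `sku_name` is a hypothetical helper, and it joins the two choices into the `Performance_Replication` form that Azure uses for Storage Account SKU names, such as `Standard_GRS`. The Premium restriction reflects the options described in this article and is treated here as an assumption.

```python
PERFORMANCE_TIERS = ("Standard", "Premium")
REPLICATION_OPTIONS = ("LRS", "ZRS", "GRS", "RAGRS")


def sku_name(performance: str, replication: str) -> str:
    """Combine performance and replication into a SKU-style name.

    Hypothetical helper; it validates the combination using the rule stated
    in this article (Premium is available with LRS only).
    """
    performance = performance.capitalize()              # "standard" -> "Standard"
    replication = replication.upper().replace("-", "")  # "ra-grs" -> "RAGRS"

    if performance not in PERFORMANCE_TIERS:
        raise ValueError(f"unknown performance tier: {performance}")
    if replication not in REPLICATION_OPTIONS:
        raise ValueError(f"unknown replication option: {replication}")
    if performance == "Premium" and replication != "LRS":
        raise ValueError("Premium storage supports LRS replication only")

    return f"{performance}_{replication}"


if __name__ == "__main__":
    print(sku_name("standard", "ra-grs"))
    print(sku_name("premium", "lrs"))
```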

Access tier

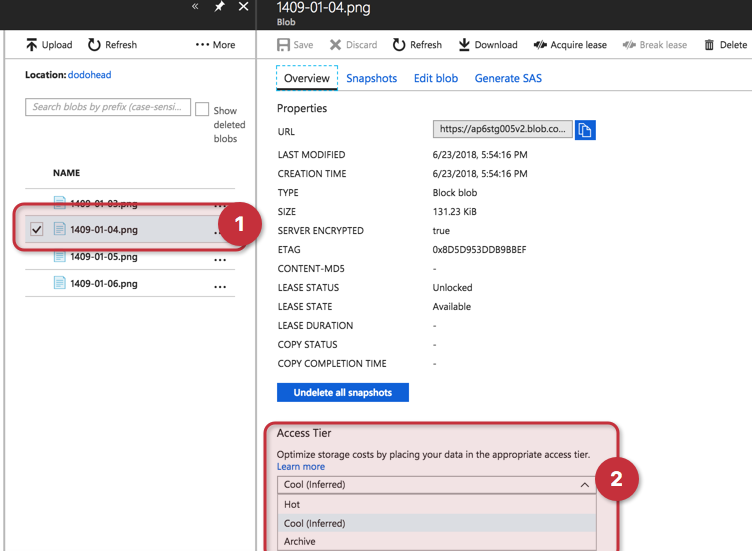

When selecting General Purpose (v2), we can select the default access tier for the data that will be stored in the blob service. The options are cool and hot. By defining the access tier during the Storage Account creation, the cloud administrator defines that any new blob added to that given Storage Account will be automatically placed in that tier.

The rule of thumb for choosing an access tier is simple: if the data is constantly being used, hot is the appropriate choice. If the data is not accessed that frequently and is stored for more than 30 days, cool becomes the best option. Archive is meant for data that is rarely accessed and is stored for more than 180 days. Archived data is offline, and only its metadata is available. To read it, a process called rehydration, which means changing the access tier back to hot or cool, is required, and it may take several hours. Keep in mind the costs around the tiers: data stored in the cool tier costs more to access, and if the data is not left there for at least 30 days, an early deletion charge applies.
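The rule of thumb above can be written down as a small decision function. This is only a sketch of the guidance in this article, not an official sizing tool: `suggest_access_tier` and the three access-pattern labels are hypothetical names, and the 30-day and 180-day thresholds are the ones mentioned in the text.

```python
ACCESS_PATTERNS = ("frequent", "infrequent", "rare")


def suggest_access_tier(access_pattern: str, retention_days: int) -> str:
    """Suggest hot, cool, or archive from how the data is used and kept.

    Hypothetical helper that encodes the rule of thumb from this article:
    - constantly used data                        -> hot
    - infrequent access, kept more than 30 days   -> cool
    - rare access, kept more than 180 days        -> archive
    """
    if access_pattern not in ACCESS_PATTERNS:
        raise ValueError(f"access_pattern must be one of {ACCESS_PATTERNS}")
    if retention_days < 0:
        raise ValueError("retention_days cannot be negative")

    if access_pattern == "rare" and retention_days > 180:
        return "archive"
    if access_pattern in ("infrequent", "rare") and retention_days > 30:
        return "cool"
    # Short-lived data stays hot so it does not leave a cooler tier too early.
    return "hot"


if __name__ == "__main__":
    for pattern, days in [("frequent", 365), ("infrequent", 90), ("rare", 365)]:
        print(pattern, days, "->", suggest_access_tier(pattern, days))
```

The fallback to hot for short-lived data is a design choice in this sketch: it avoids the extra cost of moving data out of a cooler tier before the minimum period has passed.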

At the blob level (a file within the Storage Account), we can go one step further and configure the archive tier. In the same image, we can see that the access tier shows as cool (inferred), which means it comes from the account-level setting, and any new blob will use that setting unless we change it manually on each file.

It all boils down to … cost! Right?

Well, not really. Although cost plays a big role in the decision process, I’m a strong believer that it is all about balance, and Storage Accounts are no different.

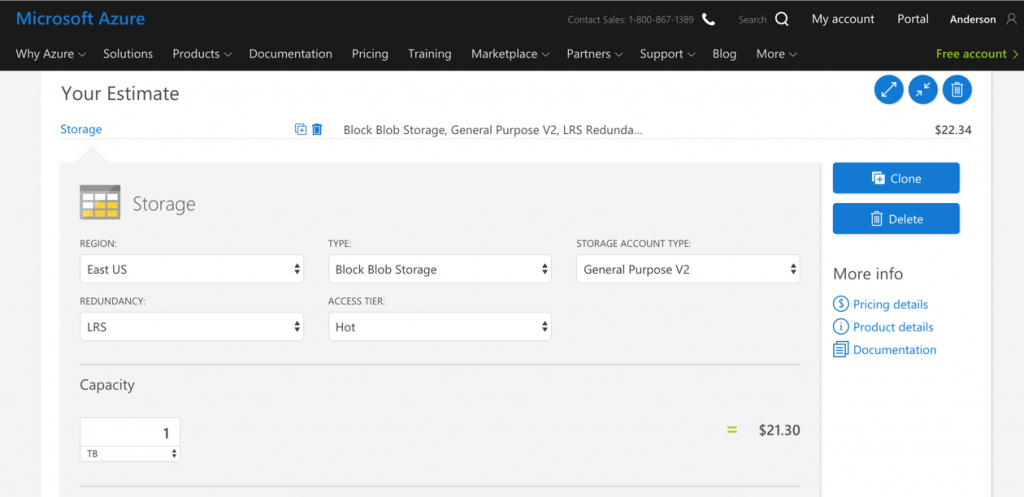

We may like the price of keeping most of our data in the archive tier, but that is not practical in several cases. The best thing is to understand the key features of Storage Accounts, and my hope is that we were able to address those initial questions in this article. Next, always check the price. The best way to get accurate information is using the Azure calculator tool, which can be found here.

Using the calculator, one can check the price and play around with replication, storage type, access tier, and region.

In this article, our focus was to understand and explain the main features of Storage Accounts, but there are many more features and functionalities to be explored, and we will tackle some of them in future articles here at TechGenix. Stay tuned!

Featured image: Shutterstock