With the exponential increase in cyber attacks of which we are all aware, combined with the shift to the Internet of Things (IoT) and the cloud, attack surfaces and vectors have expanded to the point of being nearly uncontrollable.

Fuzzers are among the most widely used tools in penetration testing. I propose to examine Fuzzers, their evolution as tools, and their applicability to today’s cloud and IoT environments, and to explore the relevance of Fuzzers and other penetration testing tools in these complex environments.

As defined by the Open Web Application Security Project (OWASP), Fuzz testing or Fuzzing is a Black Box software testing technique that basically consists of finding implementation bugs using malformed/semi-malformed data injection in an automated fashion.

For those of you who may not be familiar with Fuzzing, OWASP provides the following trivial example: Let’s consider an integer in a program, which stores the result of a user’s choice between 3 questions. When the user picks one, the choice will be 0, 1 or 2, which makes three practical cases. But what if we transmit 3, or 255? We can, because integers are stored in a static size variable. If the default switch case hasn’t been implemented securely, the program may crash and lead to “classical” security issues: (un)exploitable buffer overflows, DoS, and the like.
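To make the OWASP example concrete, here is a minimal Python sketch. The target `handle_choice`, its answer table, and the `fuzz_choices` loop are hypothetical illustrations rather than OWASP code; an unhandled Python exception stands in for a crash, and the choice is assumed to arrive in an 8-bit field, so any value from 0 to 255 can be sent.

```python
# Hypothetical target: stores a user's choice among three questions (0, 1 or 2).
ANSWERS = ["Answer to question 1", "Answer to question 2", "Answer to question 3"]


def handle_choice(choice: int) -> str:
    if choice == 0:
        return ANSWERS[0]
    if choice == 1:
        return ANSWERS[1]
    if choice == 2:
        return ANSWERS[2]
    # Insecure "default case": the value is trusted and used as a table index.
    return ANSWERS[choice]


def fuzz_choices(target, values):
    """Send each candidate value to the target and record the ones that crash it."""
    crashes = {}
    for value in values:
        try:
            target(value)
        except Exception as exc:  # any unhandled exception counts as a crash here
            crashes[value] = type(exc).__name__
    return crashes


if __name__ == "__main__":
    # An 8-bit field can hold 0..255, not just the three valid choices.
    crashes = fuzz_choices(handle_choice, range(256))
    print(f"{len(crashes)} of 256 values crashed the target")
    print("first crashing values:", sorted(crashes)[:3])
```

Running it reports that 253 of the 256 possible values crash the target, starting at 3: only the three intended choices survive, which is exactly the case a tester who only tries 0, 1 and 2 would miss.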

Therefore, simply defined, Fuzzing is the art of automatic bug finding, and its role is to find software implementation faults and identify them if possible. There are two forms of fuzzing programs, mutation-based and generation-based, which can be employed as white, grey, or black-box testing. File formats and network protocols are the most common targets of testing, but any type of program input can be fuzzed. Interesting inputs include environment variables, keyboard and mouse events, and sequences of API calls. Even items not normally considered “input” can be fuzzed, such as the contents of databases, shared memory, or the precise interleaving of threads.
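The difference between the two forms can be illustrated with a short Python sketch. The mutation-based function corrupts a valid sample input, while the generation-based function builds inputs from a model of the format. The three-field record layout (type, length, value) and the function names are assumptions made for this example, not a real protocol.

```python
import random


def mutate(seed: bytes, rng: random.Random, max_mutations: int = 4) -> bytes:
    """Mutation-based: copy a valid sample and overwrite a few random bytes.

    `seed` must be non-empty; the result always has the same length as `seed`.
    """
    data = bytearray(seed)
    for _ in range(rng.randint(1, max_mutations)):
        position = rng.randrange(len(data))
        data[position] = rng.randrange(256)
    return bytes(data)


def generate_tlv(rng: random.Random) -> bytes:
    """Generation-based: build a record from a model of a hypothetical format,

        type (1 byte) | length (1 byte) | value (length bytes),

    choosing boundary values for each field instead of copying a sample.
    """
    record_type = rng.choice([0x00, 0x01, 0x7F, 0xFF])
    payload = bytes(rng.randrange(256) for _ in range(rng.choice([0, 1, 16, 255])))
    # Sometimes declare a length that disagrees with the payload, to probe bounds checks.
    declared_length = rng.choice([len(payload), 0, 255, (len(payload) + 1) % 256])
    return bytes([record_type, declared_length]) + payload


if __name__ == "__main__":
    rng = random.Random(1234)      # fixed seed, so the run can be reproduced
    sample = b"\x01\x05hello"      # valid record: type 1, length 5, value "hello"
    for _ in range(3):
        print("mutated:  ", mutate(sample, rng))
        print("generated:", generate_tlv(rng)[:12], "...")
```

The mutation-based approach needs no knowledge of the format, only good samples; the generation-based approach needs a model of the format but can reach field combinations that no sample contains.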

A Fuzzer is a program that automatically injects semi-random data into a program or protocol stack and detects bugs.

The data-generation part is made of generators, and vulnerability identification relies on debugging tools. Generators usually use combinations of static fuzzing vectors (known-to-be-dangerous values) or totally random data. New-generation fuzzers use genetic algorithms to link injected data and observed impact. Such tools are not yet in wide commercial use, and I will discuss them later.
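A generator that combines static fuzzing vectors with random data might look like the following Python sketch. The vector list is a small illustrative selection of well-known problem inputs, not a catalogue taken from OWASP or any particular tool, and `fuzz_inputs` is a hypothetical name. The genetic, feedback-driven approach of newer fuzzers is not shown.

```python
import random
import string
from typing import Iterator

# Illustrative static fuzzing vectors: values known to break naive input handling.
# This short list is an example only, not a complete or authoritative catalogue.
STATIC_VECTORS = [
    "",                        # empty input
    "A" * 1024,                # long string (buffer overflow probe)
    "%s%s%s%n",                # format-string specifiers
    "../../../../etc/passwd",  # path traversal
    "' OR '1'='1",             # SQL injection
    "-1", "0", "2147483647", "2147483648", "4294967295",  # integer boundaries
    "\x00",                    # embedded NUL character
]


def fuzz_inputs(count_random: int, seed: int = 0) -> Iterator[str]:
    """Yield every static vector first, then `count_random` random printable strings."""
    yield from STATIC_VECTORS
    rng = random.Random(seed)  # seeded so the same inputs can be regenerated
    for _ in range(count_random):
        length = rng.randint(1, 64)
        yield "".join(rng.choice(string.printable) for _ in range(length))


if __name__ == "__main__":
    for value in fuzz_inputs(count_random=3):
        print(repr(value[:40]))  # truncate long vectors for display
```

Trying the known-dangerous values first gives quick wins on common mistakes; the random tail then explores inputs nobody thought to put on the list.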

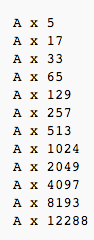

Fuzzer example

A simple example of how a Fuzzer works is testing for buffer overflows (BFO).

A buffer overflow, or memory corruption attack, is a programming condition that allows valid data to overflow beyond its pre-allocated storage limit in memory.

Note that attempting to load such a definition file within a Fuzzer application can potentially cause the application to crash.
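Python does not let a program silently write past the end of a buffer, so the following sketch simulates the idea instead. The hypothetical `vulnerable_copy` function models a C-style unchecked copy into a 64-byte buffer followed by four bytes of adjacent data; the fuzzer sends ever-longer runs of `A` and reports the first length that corrupts the adjacent bytes. In practice, a Fuzzer would target native code and rely on a debugger or crash monitor to detect the overflow.

```python
from typing import Optional

BUFFER_SIZE = 64                  # size of the hypothetical fixed-size buffer
ADJACENT = b"\xde\xad\xbe\xef"    # stands in for data stored right after the buffer


def vulnerable_copy(data: bytes) -> bytes:
    """Simulate an unchecked C-style copy (like strcpy) into a 64-byte buffer.

    Memory is modelled as the buffer followed by four adjacent bytes. Input that
    does not fit is not rejected; it spills into the adjacent bytes. Only those
    four bytes are modelled, so anything beyond them is dropped.
    Returns the simulated memory after the copy.
    """
    memory = bytearray(BUFFER_SIZE) + bytearray(ADJACENT)
    end = min(len(data), len(memory))
    memory[:end] = data[:end]     # no bounds check against BUFFER_SIZE
    return bytes(memory)


def overflowed(memory: bytes) -> bool:
    """Instrumentation: the adjacent bytes changed, so the copy overflowed."""
    return memory[BUFFER_SIZE:] != ADJACENT


def find_overflow_length(max_len: int = 4096, step: int = 1) -> Optional[int]:
    """Send ever-longer runs of b"A" and return the first length that overflows."""
    for length in range(0, max_len + 1, step):
        if overflowed(vulnerable_copy(b"A" * length)):
            return length
    return None


if __name__ == "__main__":
    print("overflow first detected at length", find_overflow_length())
```

With `step=1` the overflow is first detected at length 65, one byte past the buffer. Larger steps (for example `step=16`, which reports 80) need fewer test cases but locate the boundary less precisely, similar to the trade-off a Fuzzer makes when choosing which string lengths to try.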

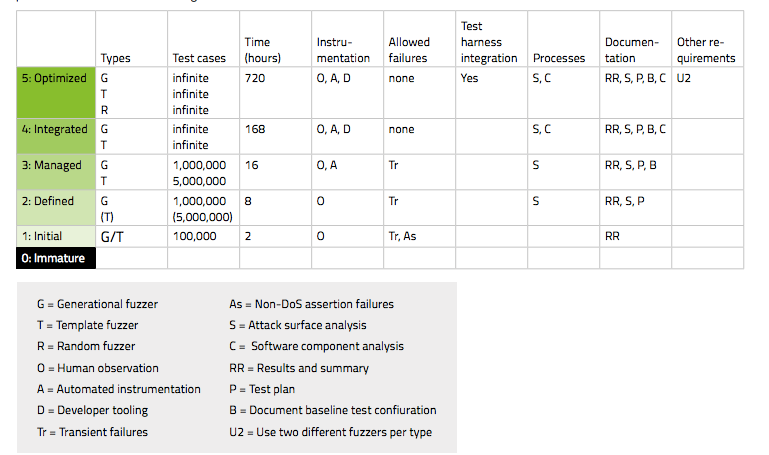

Maturity model

Fuzz testing is an industry-standard technique for locating unknown vulnerabilities in software. Fuzz testing is a mandatory part of many modern secure software development life cycles (SDLCs), such as those used at Adobe, Cisco Systems, and Microsoft. A maturity model for fuzz testing, developed by Codenomicon, exists, although it is not in widespread use. It maps metrics and procedures of effective fuzz testing to maturity levels. The maturity model provides a methodology for fuzzing, allowing different organizations to communicate effectively about fuzzing without being tied to specific tools.

The table below shows the requirements for each level of the maturity model. The columns and abbreviations are fully explained in the subsequent sections. The requirements shown here apply per attack vector on the target.

Is Fuzzing an effective tool for safeguarding and protecting data across the IoT and the cloud?

An effective Fuzzer combines several qualities:

- A savvy test case engine creates the malformed inputs, or test cases, that will be used to exercise the target. Because fuzzing is an infinite space problem, the test case engine must be smart about creating test cases that are likely to trigger failures in the target software. Experience counts: the developers who create the test case engine should, ideally, have been testing and breaking software for many years.

- Creating high-quality test cases is not enough; a Fuzzer must also include automation for delivering the test cases to the target. Depending on the complexity of the protocol or file format being tested, a generational Fuzzer can easily create hundreds of thousands, even millions of test cases.

- As the test cases are delivered to the target, the Fuzzer uses instrumentation to monitor and detect if a failure has occurred. This is one of the fundamental mechanisms of fuzzing.

- When outright failure or unusual behavior occurs in a target, understanding what happened is critical. A great Fuzzer keeps detailed records of its interactions with the target.

- Hand in hand with careful record-keeping is the idea of repeatability. If your Fuzzer delivers a test case that triggers a failure, delivering the same test case to reproduce the same failure should be straightforward. This is the key to effective remediation—when testers locate a vulnerability with a Fuzzer, developers should be able to reproduce the same vulnerability, which makes determining the root cause and fixing the bug relatively easy.

- A Fuzzer should be easy to use. If the learning curve is too steep, no one will want to use it and it will just gather dust.
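The qualities listed above (a test case engine, automated delivery, instrumentation, record-keeping and repeatability) can be sketched in a few lines of Python. Everything here is hypothetical: `parse_message` is a deliberately buggy toy parser, and the harness treats `ValueError` as a clean rejection and any other exception as a failure. Real Fuzzers usually monitor a separate process with a debugger or crash detector rather than running the target in-process.

```python
import random
from dataclasses import dataclass
from typing import Callable, List


def parse_message(data: bytes) -> bytes:
    """Hypothetical target: length byte | payload | checksum byte.

    Bug: it trusts the length byte, so a lying length causes an IndexError.
    """
    if not data:
        raise ValueError("empty message")            # clean, expected rejection
    length = data[0]
    payload = data[1:1 + length]
    checksum = data[1 + length]                      # BUG: no bounds check
    if sum(payload) % 256 != checksum:
        raise ValueError("bad checksum")             # clean, expected rejection
    return payload


@dataclass
class Result:
    index: int
    data: bytes
    outcome: str   # "ok", "rejected", or the name of an unexpected exception


def make_test_case(campaign_seed: int, index: int) -> bytes:
    """Test case engine: derive case `index` deterministically from the campaign seed."""
    rng = random.Random(f"{campaign_seed}:{index}")
    return bytes(rng.randrange(256) for _ in range(rng.randint(0, 8)))


def run_campaign(target: Callable[[bytes], object], campaign_seed: int,
                 num_cases: int) -> List[Result]:
    """Deliver each test case, watch for failures, and log every interaction."""
    log = []
    for index in range(num_cases):
        data = make_test_case(campaign_seed, index)
        try:
            target(data)
            outcome = "ok"
        except ValueError:
            outcome = "rejected"
        except Exception as exc:   # anything else is a failure worth reporting
            outcome = type(exc).__name__
        log.append(Result(index, data, outcome))
    return log


if __name__ == "__main__":
    log = run_campaign(parse_message, campaign_seed=42, num_cases=1000)
    failures = [r for r in log if r.outcome not in ("ok", "rejected")]
    print(f"{len(failures)} failures in {len(log)} test cases")
    if failures:
        first = failures[0]
        # Repeatability: the failing input is regenerated from its index alone.
        assert make_test_case(42, first.index) == first.data
        print("replayed case", first.index, "->", first.outcome)
```

Deriving each test case from the campaign seed and its index is one simple way to get repeatability: a developer handed "seed 42, case N" can regenerate the exact failing input without the tester shipping the whole log.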

But at the end of the day, will these tools, no matter how well defined, work in the cloud?

As VooDoo Security has pointed out, many organizations moving resources into the cloud require vulnerability assessments and penetration tests of critical assets to determine whether vulnerabilities are present and what risks they pose. In many cases, compliance requirements may also be driving the need for pen tests. However, performing scans and penetration tests in the cloud is quite different from, and more complicated than, running them on a typical network or application.

The type of cloud will dictate whether pen testing is even possible. For the most part, Platform as a Service (PaaS) and Infrastructure as a Service (IaaS) clouds will permit pen testing. However, Software as a Service (SaaS) providers are not likely to allow customers to pen test their applications and infrastructure, with the exception of third parties performing the cloud providers’ own pen tests for compliance or security best practices. Assuming pen testing is permitted, the next step is to coordinate with the cloud service provider (CSP) in two ways. The first is via contractual language that states pen testing is allowed, what kind of testing, and how often. If no language exists explicitly in a customer contract or per the CSP’s published policies (on its website, for example), then testing will need to be negotiated, if possible.

The bottom line is that if you cannot work with your Cloud Service Provider, there is no good answer.

As Infoblox recently stated, by carrying out ‘white hat’ attacks to identify potential entry points in the externally facing parts of an organization’s IT network, such as its firewalls, email servers, or web servers, pen testing can bring to light any existing security weaknesses. These potentially vulnerable external-facing aspects, however, are rapidly increasing in number.

Phenomena such as BYOD, the cloud, and shadow IT have led to more and more devices being added to an organization’s infrastructure, each using an increasing number of applications for both business and personal purposes. This growth has caused network boundaries to expand to such a degree that they have almost dissolved, leaving networks essentially amorphous. And the imminent explosion of devices set to be introduced by the Internet of Things will only redefine the shape of the network even further.

This new environment is likely to allow increasingly complex and sophisticated threats to flourish, as billions of connected devices continue to change and extend the network perimeter, increasing the number of potential entry points for attackers.

Put simply, the more miles of fencing there are to patrol, with more potential points of entry, the harder it will be to keep attackers out. Logically, this would suggest that pen testing is now more important than ever, but this isn’t necessarily the case.

The future of pen testing and security

According to the InfoSec Institute, cybersecurity is one of the industries that could benefit most from the introduction of artificial intelligence. Intelligent machines could implement algorithms designed to identify cyber threats in real time and provide an instantaneous response. Although the majority of security firms are already working on a new generation of automated systems, we’re still far from creating a truly self-conscious entity.

The security community is aware that many problems cannot be solved with conventional methods and is therefore turning to machine-learning algorithms. Security firms are working on this new family of algorithms, which can help the systems that implement them identify threats missed by traditional security mechanisms. Meanwhile, the security community is witnessing a rapid proliferation of intelligent objects that are threatened by a growing number of increasingly complex cyber threats.

The principal risks related to the robustness of systems implementing artificial intelligence algorithms are:

- Verification: it is difficult to prove that such solutions correspond to their formal design requirements, because they evolve dynamically. In many cases, an AI system evolves over time based on its experience, and this evolution could make the system no longer compliant with its initial requirements.

- Security: it is crucial to prevent threat actors from manipulating AI systems and the way they operate.

- Validity: ensuring that the AI system maintains normal behavior that does not contradict the requirements defined in the design phase, even if the system operates in hostile conditions (e.g., during a cyber attack or when one of its modules fails).

- Control: how to enable human control over an AI system after it begins to operate, for example to change requirements.

- Reliability: the reliability of predictions made by AI systems.

And, at the risk of stating the obvious, anything built with AI could itself be hacked, with potentially even more serious consequences.

Synchronized approach to securing the cloud kill-chain

In fact, not a lot of people are truly qualified to work as penetration testers using Fuzzers and other tools. Well, at least not the best ones. Pen testing is way more than just utilizing cool hacking tools and producing vulnerability reports. Great pen testers have deep knowledge of operating systems, networking, scripting languages, and more. They are also eager to learn new approaches and put what they learn into practice. They combine manual work with automated tools and conduct their testing in iterations, reviewing interim test results to build complicated attacks just like a cyber criminal would.

However, many single-shingle security consultants and small companies offer pen testing services. Some base their services solely on the use of one or more hacking tools and produce attractive-looking reports that detail all the issues they were able to find. As with my old neighborhood studio photographer, there is no real magic there. Instead, their results are based only on the tools they learned to operate and not on any specialized skills, which means that their customers could feasibly automate testing and save time and money by doing it themselves.

I certainly don’t think that cloud-based application security testing services will make pen testers’ work redundant, but I do think they can help clean out the weeds and establish order in the field. I also believe that organizations relying on a penetration testing-only approach to application security place themselves at a high risk of potential data breaches. Overall, the best approach is to perform periodic pen testing and combine it with routine application security testing, since application threats can be released quickly and evolve very suddenly.

Finally, and I cannot emphasize this enough, whatever type of testing your organization is performing, it must be combined with the basics, such as ensuring that information security begins with and cycles through the entire Software Development Life Cycle, educating users, and addressing the number one threat to every enterprise: social engineering.