Introduction

Customers want to run their Exchange environment on a virtualized platform. This has been the case ever since Exchange Server 2003 running on VMware, but Microsoft has only supported virtualized Exchange Server since Exchange Server 2007 (on Windows Server 2008!), running on a Hyper-V platform. Okay, Exchange Server 2003 is supported running on Virtual Server 2005 R2, but this is not a serious alternative in my humble opinion.

Not only is the Hyper-V platform supported; all virtualization platforms that comply with the Microsoft SVVP program (Server Virtualization Validation Program) are fully supported as well. For Exchange Server 2010, all Server Roles are fully supported in a virtualized environment, except for the Unified Messaging Role. The UM role works with real-time data (i.e. voice data), which can cause problems on a virtualized platform. Think about malformed voicemail messages or strange-sounding voices.

In this article I will try to explain how to run Exchange Server 2010 on a Hyper-V platform. The guidelines in this article, however, are also valid for other virtualization platforms such as VMware or XenServer.

Designing Virtual Exchange 2010 servers

When designing an Exchange 2010 environment there are certain configuration guidelines, such as the amount of memory, the processor capacity, the disk configuration (for the Mailbox Server Role) and the ratio between the number of Exchange Servers and the number of Domain Controllers/Global Catalog Servers (or to be more precise, the ratio between the number of processors in each server). These are all very important variables to be aware of!

One of the most important things is that you have to use the same calculations for a virtualized Exchange Server 2010 environment as you would use for a physical environment. So use the same amount of memory, the same amount of (virtual) processors, the same network settings and the same storage calculations. I have seen too many customers decreasing these numbers in a virtualized environment, and this will always result in performance degradation!
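The rule above can be turned into a quick sanity check on a design. The following is only a minimal sketch: the `ServerSpec` type, the `undersized_resources` helper and all the numbers are hypothetical and chosen for illustration, not taken from any Microsoft sizing tool.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ServerSpec:
    """Sizing figures for one Exchange server (hypothetical illustration type)."""
    memory_gb: int
    processors: int   # physical cores, or virtual processors for a VM
    storage_gb: int


def undersized_resources(physical: ServerSpec, virtual: ServerSpec) -> list[str]:
    """Return the resources where the virtual design is below the physical sizing."""
    shortfalls = []
    for name in ("memory_gb", "processors", "storage_gb"):
        if getattr(virtual, name) < getattr(physical, name):
            shortfalls.append(name)
    return shortfalls


# Illustrative numbers only: the sizing calculated for a physical server,
# and a virtual design where the memory has been cut in half.
physical_design = ServerSpec(memory_gb=32, processors=8, storage_gb=1500)
virtual_design = ServerSpec(memory_gb=16, processors=8, storage_gb=1500)

print(undersized_resources(physical_design, virtual_design))  # → ['memory_gb']
```

An empty list means the virtual design has not been trimmed below the physical calculation; anything else is a candidate for the performance degradation described above.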

Virtual Disk Configuration for Exchange 2010 Mailbox Servers

The official support policy for running Exchange Server in a virtualized environment can be found on the Microsoft TechNet website. Hyper-V has several types of Virtual Hard Disks (VHD):

- Dynamic Disk;
- Fixed Disk;
- Differential Disk;
- Pass-through disk.

The Dynamic Disk is a .VHD file on the Host Server that starts out small, approximately 2 MB in size, and grows on demand. This growth causes a certain amount of overhead, which is reason enough not to support it for virtualized Exchange Servers. Please note that Dynamic Disks work fine, they are just not supported!

A Fixed Disk is also a .VHD file on the Host Server, but it has a predefined size. So if you define a 250 GB Fixed Disk, a .VHD file of 250 GB is created immediately. This does not have the overhead of auto-growing and is fully supported for virtualized Exchange Servers.

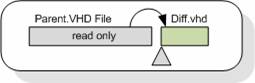

A Differential Disk basically consists of two Virtual Disks: one read-only parent disk and a small read/write child disk where all changes of the Virtual Machine are saved. You can have one parent disk combined with several child disks. Differential Disks are also not supported for running virtualized Exchange Servers.

Figure 1

Not mentioned in the list above are snapshots. Snapshots, in essence, are an implementation of Differential Disks. By default snapshots are stored on the System Disk (C:\Hyper-V) unless changed later on. If you are unaware of this there is a risk that the System Disk will fill with snapshots, causing the Host Server to stop working. Snapshots are also not supported in a virtualized Exchange Server environment.

Figure 2

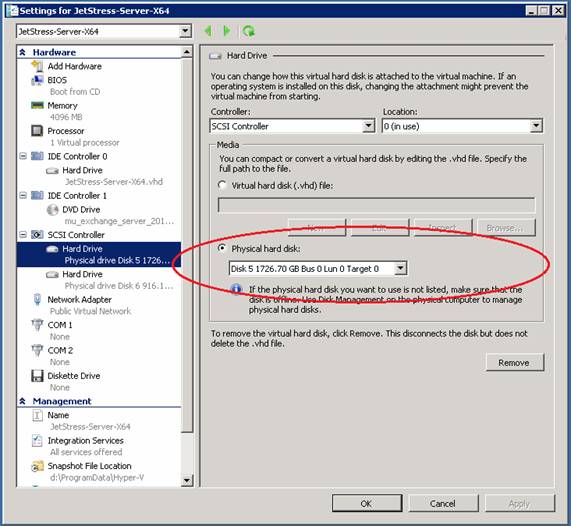

A pass-through disk is not really a virtual disk; it is a physical disk attached to the Host Computer. This can be a physical hard disk, but it can also be a LUN on a storage device. It does not matter whether this is an iSCSI storage solution or a Fibre Channel solution. The physical disk is not configured as a .VHD file on the Virtual Machine, but it is attached to the virtual SCSI controller of the Virtual Machine.

Figure 3

To attach the physical disk to the virtual SCSI controller the disk has to be offline on the Host Computer. If the disk is not offline you cannot add it to the SCSI controller and the “Physical hard disk” option on the SCSI controller will be greyed out.

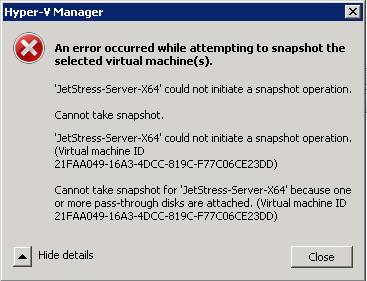

Another point to remember is that creating snapshots of a pass-through disk is not possible (it is not supported in a production environment either, as discussed earlier). If you try to create a snapshot it will result in an error:

Figure 4

In my humble opinion, pass-through disks are the best solution to run Exchange Servers on a Hyper-V platform since they do not cause any overhead on the disk.
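The support status of the disk types discussed above can be summarized in a small lookup table. This is a minimal sketch, not an official tool: the `EXCHANGE_DISK_SUPPORT` table and the `unsupported_disks` helper are hypothetical names, and pass-through is marked as supported on the assumption that the recommendation above reflects the support policy.

```python
# Support status per disk type, as described in this article.
# Assumption: pass-through disks are supported, in line with the
# recommendation above; check the official policy for your version.
EXCHANGE_DISK_SUPPORT = {
    "dynamic": False,       # grows on demand, overhead, not supported
    "fixed": True,          # predefined size, fully supported
    "differential": False,  # parent/child disks, not supported
    "snapshot": False,      # an implementation of differential disks
    "pass-through": True,   # physical disk or LUN on the virtual SCSI controller
}


def unsupported_disks(vm_disks: dict) -> list:
    """Return the names of disks whose type is not supported for Exchange.

    vm_disks maps a disk name to its type. Unknown types are treated
    as unsupported so that typos do not slip through unnoticed.
    """
    return sorted(
        name
        for name, disk_type in vm_disks.items()
        if not EXCHANGE_DISK_SUPPORT.get(disk_type, False)
    )


# Hypothetical Mailbox Server VM with one unsupported disk.
mailbox_vm = {"system": "fixed", "database": "pass-through", "logs": "dynamic"}
print(unsupported_disks(mailbox_vm))  # → ['logs']
```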

But how does it perform? Of course it depends on the storage solution itself, but in testing with an iSCSI solution from Hitachi Data Systems (HDS 2100) with dedicated spindles for the Exchange 2010 Server, attached as pass-through disks to the virtual SCSI controller, it runs like a physical machine. Awesome…

Note:

There is a good article about running Jetstress on the MSExchange.org website written by the MSExchange.org author Rui Silva. Check it out here.

For an overview of sizing the Exchange 2010 Hub Transport Server please read the following article: Understanding Server Role Ratios and Exchange Performance. For an overview of sizing the Exchange 2010 Client Access Server please read the following article: Sizing Client Access Servers.

Network Configuration

Special care needs to be taken with the network configuration of your Host Server. If you use an iSCSI solution, make sure that the network is redundant and that you can use multipath I/O to the storage array. Whenever possible use a 10GbE solution to get the best performance.

For the public network, i.e. the network that is used by clients to connect to the Virtual Machines, use as many network interfaces as possible as well, to prevent the network from becoming a bottleneck in your configuration. Do not forget a dedicated network interface in your Host Server for management purposes either.

Clustering and Exchange Replication

To create a highly available solution with Hyper-V there are two options:

- Host Clustering – Two or more Hyper-V Host Servers are configured in a failover cluster configuration, while the Virtual Machine is configured as a Cluster Resource. When a Cluster Node fails or needs to be brought offline for maintenance, the Cluster Resource is moved to another Cluster Node. But when the Exchange Server running in the Virtual Machine fails, nothing will happen. Failing over a stalled Exchange Server will still result in a stalled Exchange Server, but now on a different Cluster Node.
- Guest Clustering – Two Virtual Machines running Exchange Server are configured as a Cluster, whether it be a Cluster in Exchange Server 2007 or a Database Availability Group (DAG) solution in Exchange Server 2010. When something happens to a Virtual Machine running on a particular Host Server, another Virtual Machine will take over.

In Hyper-V R1 failover clustering is available in the traditional manner: when a failover takes place the Cluster Resource (the Exchange Server!) is brought offline, moved to another Cluster Node and brought online again. This is called a “Quick Migration” by Microsoft. Depending on the amount of memory this can take up to a couple of minutes, which might be unacceptable in a production environment.

In Hyper-V R2 clustering has been improved with the implementation of Cluster Shared Volumes (CSV), where both Cluster Nodes can access the shared storage at the same time. A CSV Cluster offers “Live Migration”. Using Live Migration the running Virtual Machine is copied over from one Cluster Node to another Cluster Node without any downtime of the Virtual Machine. Please note that a Live Migration itself can also take several minutes, depending on the configuration of the Virtual Machine.

When it comes to running Exchange Servers in a clustered environment, especially when using the Live Migration solution, the important point is that you cannot combine Exchange Server replication (CCR or DAG) with CSV Clusters. You have to use either CCR or DAG running outside the Hyper-V Cluster, or use a single Exchange Server within the Hyper-V Cluster. Running CCR or DAG on a Live Migration cluster is not supported!

This is not only true for Hyper-V, but also for VMware's vMotion.
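The rule boils down to a single condition: Exchange replication and a migration-capable host cluster must not be combined for the same Virtual Machine. A minimal sketch of that check follows; the function and parameter names are hypothetical and only encode the policy as stated in this article.

```python
def ha_design_supported(uses_exchange_replication: bool,
                        vm_on_migration_cluster: bool) -> bool:
    """Check a high-availability design against the rule described above.

    uses_exchange_replication: the Exchange Server is a CCR or DAG member.
    vm_on_migration_cluster:   the VM runs on a host cluster that can move it
                               (a CSV / Live Migration cluster, or vMotion).
    Only the combination of both is unsupported.
    """
    return not (uses_exchange_replication and vm_on_migration_cluster)


# DAG member running on a stand-alone Hyper-V host: fine.
print(ha_design_supported(True, False))   # → True
# Single Exchange Server inside the Hyper-V cluster: fine.
print(ha_design_supported(False, True))   # → True
# DAG member inside a Live Migration cluster: not supported.
print(ha_design_supported(True, True))    # → False
```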

But how does it work!? Well, recently we have installed an Exchange 2010 Mailbox Server for a customer running on a CSV cluster. 750 Mailboxes, heavy usage profile, 3 Mailbox Databases of approximately 150GB each, stored on a NetApp iSCSI solution and running without any issues. Stunning…