Already tired of the ever-intensifying hype around artificial intelligence? Self-driving cars, household appliances that send notifications to your mobile devices, computer programs that can beat humans at computer games – you hear about such stuff in every artificial intelligence report and article you read. We know.

And until some machine can make you pancakes in the morning and wash the dishes, there is no reason to get that excited yet, is there? Can that machine clean your kitchen and make you spaghetti without burning the meatballs? Now that will be the day!

Artificial intelligence has tremendous potential, but it’s still on the road, far from its destination: value-adding, easily achievable automation of sophisticated computer-based processes. Today, every bit of automation in computer processes is brought about by pure human intelligence, manifested in the form of smart algorithms, increasingly complicated flowcharts, and untiring lab experiments.

This also means that the next mega wave of automation will need more than human effort; it will need “machine effort” as well. No one said we can’t use some help! This is where machine learning makes its case as the transformative force that will fuel artificial intelligence’s growth as an enabler of massive automation.

Before we go deep into the discussion, let’s help you tell apart a few terms that sound similar and are often used interchangeably.

Artificial intelligence

Any technique, methodology, or process that enables computers to mimic human intelligence and use it to drive useful digital responses is artificial intelligence. Its components include decision trees, if-then rules, multistep logic, and machine learning (which in turn includes deep learning). All of this is far beyond the grasp of the likes of Michael Kelso from TV’s “That ’70s Show”!

Machine learning

It’s a subset of AI, and comprises all the techniques that make computer programs more efficient with experience. These techniques include feedback loops that record information on actions (the current process) and reactions (the outcomes), plus logic that fine-tunes reactions based on this information.
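To make the feedback-loop idea concrete, here is a minimal Python sketch of one simple technique of this kind, an epsilon-greedy multi-armed bandit. It is an illustration, not how any particular product works: the class `FeedbackLoop`, the actions `"cache"` and `"no_cache"`, and the simulated outcome function are all hypothetical. The program records each action along with the outcome it produced, then increasingly favors whichever action has worked best on average.

```python
import random


class FeedbackLoop:
    """Hypothetical sketch of a learning feedback loop (epsilon-greedy bandit):
    it records each action and its outcome, then favors the best average."""

    def __init__(self, actions, explore_rate=0.1, seed=0):
        self.actions = list(actions)
        self.explore_rate = explore_rate      # share of turns spent trying alternatives
        self.rng = random.Random(seed)        # seeded so runs are repeatable
        self.totals = {a: 0.0 for a in self.actions}
        self.counts = {a: 0 for a in self.actions}

    def average(self, action):
        # Untried actions score infinity so each one is tried at least once.
        if self.counts[action] == 0:
            return float("inf")
        return self.totals[action] / self.counts[action]

    def choose(self):
        # Mostly exploit what experience says works; occasionally explore.
        if self.rng.random() < self.explore_rate:
            return self.rng.choice(self.actions)
        return max(self.actions, key=self.average)

    def record(self, action, outcome):
        # The "experience": log the reaction (outcome) to the action taken.
        self.totals[action] += outcome
        self.counts[action] += 1

    def best(self):
        # The action with the highest observed average outcome so far.
        tried = [a for a in self.actions if self.counts[a] > 0]
        return max(tried, key=self.average)


def simulated_outcome(action):
    # Hypothetical environment: "cache" helps, "no_cache" does not.
    return 1.0 if action == "cache" else 0.0


loop = FeedbackLoop(["no_cache", "cache"])
for _ in range(100):
    action = loop.choose()
    loop.record(action, simulated_outcome(action))

print(loop.best())  # cache
```

After 100 simulated rounds the loop settles on `"cache"`, the only action that ever paid off; the small `explore_rate` keeps it occasionally retrying the alternative in case conditions change.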

Deep learning

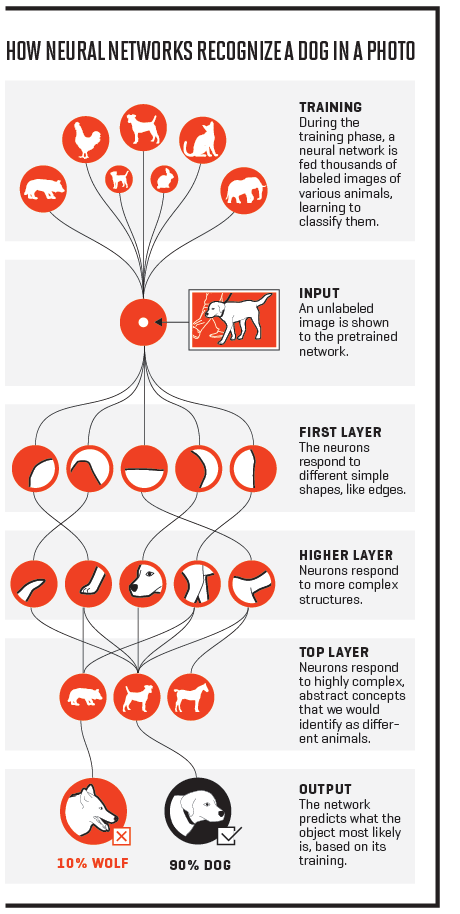

It’s a subset of machine learning, and comprises techniques that improve software by exposing it to massive streams of data and processing that data with multilayered neural networks. A neural network stacks layers of code, each building more complex patterns on top of the output of the layer before it. This layering is what lets software “learn” from its exposure to hundreds, thousands, and even millions of data-driven simulations.
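The layering idea can be illustrated with a tiny, hypothetical Python sketch. The weights below are hand-picked for illustration rather than learned; in real deep learning, training on data is what sets them. The sketch shows a two-layer network computing XOR, a function no single linear layer can represent, because the hidden layer builds intermediate features that the output layer then combines.

```python
def relu(x):
    # Rectified linear activation: pass positives through, clip negatives to 0.
    return max(0.0, x)


def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: each output is a weighted sum of all inputs plus a bias."""
    outputs = []
    for row, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(row, inputs)) + bias
        outputs.append(activation(total) if activation else total)
    return outputs


# Hypothetical, hand-picked weights (training would normally learn these).
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]
OUTPUT_BIASES = [0.0]


def network(x1, x2):
    # Layer 1: one unit counts how many inputs are on; the other fires only when both are on.
    hidden = dense([x1, x2], HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    # Layer 2: combine the hidden features into a single output.
    return dense(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, network(a, b))
```

Running it prints the XOR truth table, with 0.0, 1.0, 1.0, 0.0 in the last column.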

Let’s get back to our question now: How can deep learning catalyze automation for enterprises?

Automation of IT

Wait, isn’t it the IT team that does the automation? Can IT itself be automated? Well, with deep learning, artificial intelligence is slowly making this once-obscure concept clearer and more structured.

Consider the case of Apache web servers. In the 1990s, server crashes were handled entirely by humans. Then came Nagios and other monitoring systems that reported crashes and even restarted the servers on their own.

Then came the cloud and DevOps waves, and with them configuration management tools such as Chef and Puppet, which could manage server settings in addition to making event-based decisions about starting and stopping servers. The point is: systems are evolving, and their dependence on humans is diminishing.

Admittedly, even today, if an enterprise needs to add applications to its IT infrastructure and ecosystem, it has to follow a top-down approach. A central file or cluster of files holds information about the architecture, and it must be edited before the new application can be deployed.

Thankfully, because all these scale-ups and scale-downs are logged in one place, neural networks can be trained on those logs to recognize patterns and to predict and recommend actions. This will be the first real step towards putting IT management on autopilot. Is this already happening? No! Is it doable? Yes!

Automation of software development

There is a broad movement centered on making computer programs understand human languages to drive applications of artificial intelligence. What has been left behind is the idea of making computers understand their own language better!

Put more clearly, the idea is to enable programs to understand code, understand the developer’s approach, predict the intended outcome of the code, and “magically” fix bugs, make the code more secure, suggest best practices, and even complete the code themselves. Sounds impractical? Well, consider these facts:

- GitHub has more than 66 million pull requests; each pull request, deep down, means that some bad code is changing to good code.

- Google is building a bug prediction system that monitors code repositories, project management tools, bug reports, and similar sources. It uses this information to predict likely bugs in code under development.

- Siri’s rival, the Samsung-owned Viv, is essentially a highly sophisticated compiler: it compiles human language into different algorithms and uses them to drive many more actions than Siri can perform today.

All these are steps in the direction of making computer software leverage the power of massive datasets and neural networks (essentially, deep learning) to make code smarter on its own.

Automation of evolution

Here’s a rather circuitous statement – the best application of deep learning is toward automation, because that makes artificial intelligence better, cheaper, easier, and quicker. Consider a computer program that can beat any human at chess. On its own, this program will never achieve anything more than that.

Add the power of a neural network to the mix, and this program will progressively learn to beat humans in fewer moves. Similarly, every deep learning success will drive further successes. Using artificial intelligence to run and manage computers will invariably bring improvements to other kinds of computation as well.

Soon enough, we could have smarter self-driving cars (hopefully they won’t be carjacked remotely, as in “Fast 8”, where about 100 cars are taken over to wreak havoc on the streets), more autonomous robots, and more accurate predictors of sports match outcomes. Well, we already know the New England Patriots cheat, but that’s another topic!

Progress is inevitable

The truth is, however, that we are still driving in first gear on this journey; more time is spent on doing things “of” deep learning than “by” deep learning. However, progress is inevitable, and within a couple of years, we will have startups as well as enterprises releasing commercial solutions leveraging the power of deep learning and driving automation.

Open source platforms such as TensorFlow will act as a nitro boost of sorts for these initial applications, and will drive the creation of more complicated and value-adding deep learning systems. Deep learning is all set to drive the power of big data, analytics, and automation together, and deliver unimaginable results to enterprises and society.

Who says you cannot have your cake and eat it, too? Only nonvisionaries.

Ok… Let’s get something straight. Computers are not smart. They are extremely fast at adding in binary. Ones and zeros. They are so locked into this that we have to convert our language into machine code before they can act. The only real improvement over the last 40 years is speed. Please stop calling them smart or intelligent. They only appear to be so, and that gives people a false sense of what these boxes really are. Without instructions telling the box everything it has to do, it is really little more than a boat anchor. I write code. I know how a simple mistake in that code can shut down the box. It simply cannot think in an abstract world. In fact, it cannot really think at all. It can only respond to the instructions given to it. Because it has no creativity, it cannot create. Intelligence simply has a far deeper meaning than what can be applied here.

But it can store more information than a human, and it can calculate much faster than us. So we should give computers some respect. We would not be here without them, and, if you saw the movie Life, you know we need more robots like Chappie on Mars and beyond, since we do not know what is out there.

I agree, we do not have Bumblebee-like robots or computers on Earth, but I cannot work out 36×569=20,484 in my head in 1 second or less, and they can do much more than that, all day and all night.

OK, have a fine day.

Before we make assumptions, let’s talk about what we don’t know. No one knows how much information the human brain can store. No one knows how much information is currently in our brains. What we do know is how fast we can retrieve this data compared to a computer. Speed is not an indicator of intelligence. If the only way you can relate a computer to a human is through the movies you have seen, then I leave you with this thought: I learned everything I know about ghosts by watching TV. Therefore they must exist. In regard to respect, I respect people and those who built and wrote code for computers. I do not respect objects. We were here before computers and we would still be here without them. We do not need computers to live. We need them to make our lives better. So, with that being said, I leave you with this thought: computers are not intelligent. They are simply fast at what they do.