The idea of dueling AI isn’t exactly new. Countless YouTube videos have depicted conversations between Alexa and Siri, usually for comedic effect. But is there any practical use for dueling AI? The answer might surprise you.

Before I can delve into a discussion of dueling AI, I need to take a step back and talk for a moment about AI in general. I think that perhaps the best definition of AI came from Schwarzenegger in one of the Terminator movies when he said: “My CPU is a neural-net processor; a learning computer.” That statement essentially sums up what AI is. AI is an application that is designed to improve its decision-making skills over time as it ingests more and more data. Let me give you a few examples.

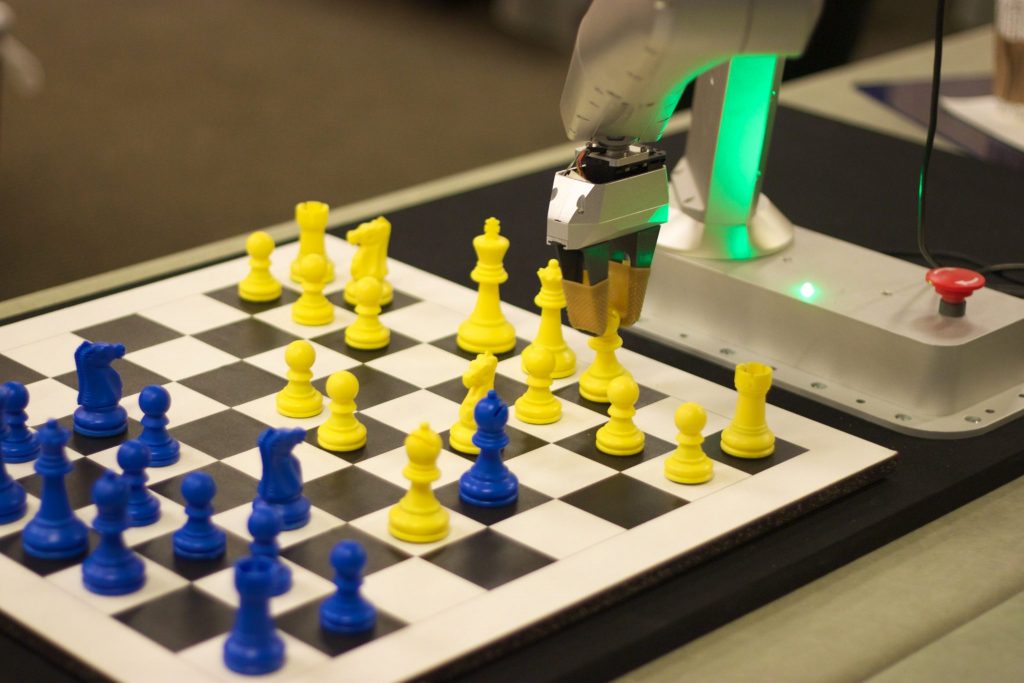

We have all probably seen chess-playing robots at one time or another. Those robots are not programmed with every conceivable combination of pieces on the chess board. According to Bern Medical, “Chess is infinite: There are 400 different positions after each player makes one move apiece. There are 72,084 positions after two moves apiece. There are more than 9 million positions after three moves apiece. There are 288+ billion different possible positions after four moves apiece.” In other words, it would be impossible to program every conceivable move. Instead, the computer plays simulated games of chess and learns based on the outcomes of those games.

The dictation software that I am currently using to write this article is another example of AI software. When I first installed the software, I had to train it to recognize my own unique speech “nuances.” Over time, the speech recognition has become increasingly accurate because it uses past dictations to improve future results. In other words, the software learns over time. The key thing to understand about artificial intelligence is that an AI engine might not be all that smart in the beginning. Just like humans, an AI engine has to be educated.

Dueling AI: It’s not Siri and Alexa arguing

So with that said, let’s talk about the concept of dueling AI. Dueling AI does not refer to Alexa and Siri getting into an argument with one another (although technically speaking, that might be a form of dueling AI). Instead, dueling AI refers to the idea that one AI engine can be used to train another. Keep in mind that the AI engines do not have to speak to one another out loud. They could conceivably train one another through a direct data interchange.

So how might such a system work? Let me give you a plausible but made-up example. Many website owners use captchas as a tool for blocking bots. Often, these captchas display a few random characters in a funky, possibly even distorted font that theoretically should not be machine readable. The user is asked to type the characters that they see as a way of proving that they are not a bot.

Captchas have not always worked this way, though. At one time, it was very common for captchas to display two words, in two different fonts, and require the user to type both. Simple enough.

Most people probably just assumed that the two different fonts were used solely as a way to thwart bots. However, there was something far greater going on behind the scenes.

Although the captcha would display two words and require the user to type both of them, it did not actually know as much as it led people to believe. The captcha knew only the first word that it was displaying. That word was chosen at random. Surprisingly, the entire captcha test was based on the user retyping the first word in the sequence.

The second word in the sequence was completely unknown to the captcha. If the user entered the first word correctly, then the algorithm assumed that the user had also entered the second word correctly, but it had no way of verifying whether or not the second entry was indeed correct.

So what was the point of having the captcha ask users a question that it did not know the answer to? Well, as it turns out, the second word in each captcha was scanned from a book (classic literature). When users completed the captcha, the application would use the user’s entries to create electronic versions of classic books. All the application had to do was keep track of which words it was showing to each user so that it could reassemble the text in the correct order.
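The two-word scheme described above can be sketched in a few lines of Python. This is an illustration only, not the code of any real captcha service: the function names `check_captcha` and `best_guess`, the numeric word IDs, and the idea of tallying answers so that the most common one wins are all assumptions made for the example.

```python
from collections import Counter, defaultdict

# Tally of what users typed for each scanned word, keyed by a hypothetical word ID.
transcriptions = defaultdict(Counter)


def check_captcha(known_word, typed_known, unknown_word_id, typed_unknown):
    """Pass or fail the user based only on the word the system already knows."""
    if typed_known.strip().lower() != known_word.lower():
        return False  # the known word is wrong, so both answers are discarded
    # The known word matched, so the second answer is assumed to be right too
    # and is recorded as a candidate transcription of the scanned word.
    transcriptions[unknown_word_id][typed_unknown.strip().lower()] += 1
    return True


def best_guess(unknown_word_id):
    """Return the most frequently typed answer for a scanned word, if any."""
    votes = transcriptions.get(unknown_word_id)
    if not votes:
        return None
    return votes.most_common(1)[0][0]
```

Counting answers across many users is one possible way to cope with the fact that the system cannot verify any single answer for the second word on its own.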

Given the way that this particular system worked, it would be relatively easy to create an AI engine that could be used to perform highly accurate optical character recognition. All of the key elements were in place. The system had at its disposal a relational database containing thousands (or possibly millions) of scanned words and a typed version of each word. The system could then use this information to learn how to read text on its own, without human intervention. From there, it might even be able to teach other AI systems how to read.

One of the things that has long differentiated humans from computers is our ability to pass on knowledge to one another. Sure, a computer can be programmed to send a file to another computer, but does the recipient system really understand the contents of the file (in its human context)?

Creating a meaningful system

The first step in establishing dueling AI is to get one system to convey knowledge to another system in a meaningful way. Think about that in the context of the chess-playing robot. A robot would presumably have to analyze millions of chess games before it has enough knowledge to become a chess master. If that robot could transmit its knowledge to another chess-playing robot, then the gaming knowledge could be conveyed in a fraction of the time that it would take the second robot to learn the game from scratch by analyzing raw simulation data.
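To make the idea of transferring knowledge a little more concrete, here is a minimal Python sketch. It assumes, purely for illustration, that an agent's knowledge is nothing more than a table of scores for positions it has seen. The `ToyAgent` class and its methods are hypothetical, and a real chess engine would store far richer information.

```python
import json


class ToyAgent:
    """Hypothetical agent whose 'knowledge' is a table of position scores."""

    def __init__(self):
        self.scores = {}  # position (str) -> [sum of outcomes, games seen]

    def learn(self, position, outcome):
        """Record the outcome (+1 win, -1 loss) of one game through a position."""
        total, seen = self.scores.get(position, [0.0, 0])
        self.scores[position] = [total + outcome, seen + 1]

    def value(self, position):
        """Average outcome seen for a position, or 0.0 if it is unknown."""
        total, seen = self.scores.get(position, [0.0, 0])
        return total / seen if seen else 0.0

    def export_knowledge(self):
        return json.dumps(self.scores)

    def import_knowledge(self, blob):
        self.scores = json.loads(blob)


teacher = ToyAgent()
for outcome in (1, 1, -1, 1):  # stand-in for millions of simulated games
    teacher.learn("e4 e5", outcome)

# The student receives the teacher's table in one step instead of replaying games.
student = ToyAgent()
student.import_knowledge(teacher.export_knowledge())
```

The transfer works here only because both agents agree on what the table means, which is the "meaningful way" part of the problem.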

Now here is where things get interesting. So far I have been discussing a one-way conveyance of knowledge. The more experienced AI is imparting its wisdom to the less experienced AI. But what happens when that knowledge transfer is complete? What is to stop the two AIs from learning from one another?

Let’s suppose for a moment that a chess-playing robot has accumulated so much knowledge of the game that it is able to beat the world’s reigning chess champion. Let’s also assume that the system transfers that knowledge to an identical robot, resulting in two robots that are better at chess than any human could ever hope to become. Because the robots’ algorithms are designed to learn by playing the game, the two robots could compete against one another, each learning from the other in the process. The robots, which are already the world’s greatest chess players, become even more skilled by facing off against one another. That is the true power of dueling AI.
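Chess is far too large for a short example, so the sketch below swaps in a much simpler game: a single pile of stones where each player takes one, two, or three, and whoever takes the last stone wins. Two agents play each other repeatedly and each updates its own table from the results. The `NimAgent` class, the exploration rate, and the number of games are assumptions chosen for illustration, not a description of how any real system is built.

```python
import random

PILE = 10     # starting stones; a player may take 1, 2, or 3 per turn
GAMES = 5000  # number of practice games (arbitrary choice)
rng = random.Random(0)


class NimAgent:
    def __init__(self, explore=0.1):
        self.stats = {}  # (pile, take) -> (sum of results, times tried)
        self.explore = explore

    def value(self, pile, take):
        total, tried = self.stats.get((pile, take), (0.0, 0))
        return total / tried if tried else 0.0

    def choose(self, pile, greedy=False):
        moves = [t for t in (1, 2, 3) if t <= pile]
        if not greedy and rng.random() < self.explore:
            return rng.choice(moves)  # occasionally try something new
        return max(moves, key=lambda t: self.value(pile, t))

    def learn(self, history, result):
        """Credit every move made in the game with the final result."""
        for pile, take in history:
            total, tried = self.stats.get((pile, take), (0.0, 0))
            self.stats[(pile, take)] = (total + result, tried + 1)


def play(agents, first):
    pile, turn, winner = PILE, first, first
    histories = ([], [])
    while pile > 0:
        take = agents[turn].choose(pile)
        histories[turn].append((pile, take))
        pile -= take
        winner = turn  # most recent mover; after the loop, took the last stone
        turn = 1 - turn
    agents[winner].learn(histories[winner], 1)
    agents[1 - winner].learn(histories[1 - winner], -1)
    return winner


agents = (NimAgent(), NimAgent())
wins = [0, 0]
for game in range(GAMES):
    wins[play(agents, first=game % 2)] += 1  # alternate who moves first
```

Neither agent is ever told the rules of good play. Whatever each one knows comes from the games it played against the other.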

Will it become too smart?

It’s fascinating to think of dueling AI technology in a business context, with regard to what might eventually be achieved. But if you are worried about an AI becoming so smart that it is able to achieve world domination, then you will be happy to know that the idea that an AI will always become smarter over time is a fallacy.

For starters, AI is nothing but computer code, and like any other code base, an AI algorithm has its limitations. Perhaps more interestingly, an AI engine can only improve its decision-making abilities if it is fed good data. You can actually “poison” an AI by purposely feeding it bad or nonsensical data.
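Poisoning is easy to demonstrate on a toy scale. The sketch below is a deliberately simple stand-in for a learning system: it averages the numbers it has seen for each label and classifies a new number by the closest average. The data, the labels, and the `train` and `predict` functions are all made up for the example.

```python
def train(samples):
    """Learn one average value per label from (value, label) pairs."""
    sums = {}
    for value, label in samples:
        total, count = sums.get(label, (0.0, 0))
        sums[label] = (total + value, count + 1)
    return {label: total / count for label, (total, count) in sums.items()}


def predict(model, value):
    """Pick the label whose learned average is closest to the value."""
    return min(model, key=lambda label: abs(model[label] - value))


clean = [(1, "low"), (2, "low"), (3, "low"),
         (8, "high"), (9, "high"), (10, "high")]
# Deliberately mislabeled samples: big numbers called "low", zero called "high".
poison = [(20, "low")] * 6 + [(0, "high")] * 6

good_model = train(clean)
bad_model = train(clean + poison)

print(predict(good_model, 2), predict(good_model, 9))  # low high
print(predict(bad_model, 2), predict(bad_model, 9))    # high low
```

The code is identical in both cases. Only the training data changed, and that was enough to flip every answer.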

Featured image: Shutterstock