On more than one occasion in recent weeks, I have heard someone say that the social networks need to do a better job of policing content. But is it the social networks’ job to police content, and is content policing actually a good thing? As I pondered these questions, I quickly realized that the issue is far more complicated than it might at first seem.

The comments that I have recently heard regarding policing content on social networks were not made by anyone that I know. I overheard these comments in various public places, and from what I could gather, the comments were made in regard to unfavorable political content appearing on a social networking site. Although this might be the first thing to come to mind when the subject of content policing is brought up, there are actually several different types of content filtering.

Advertiser content

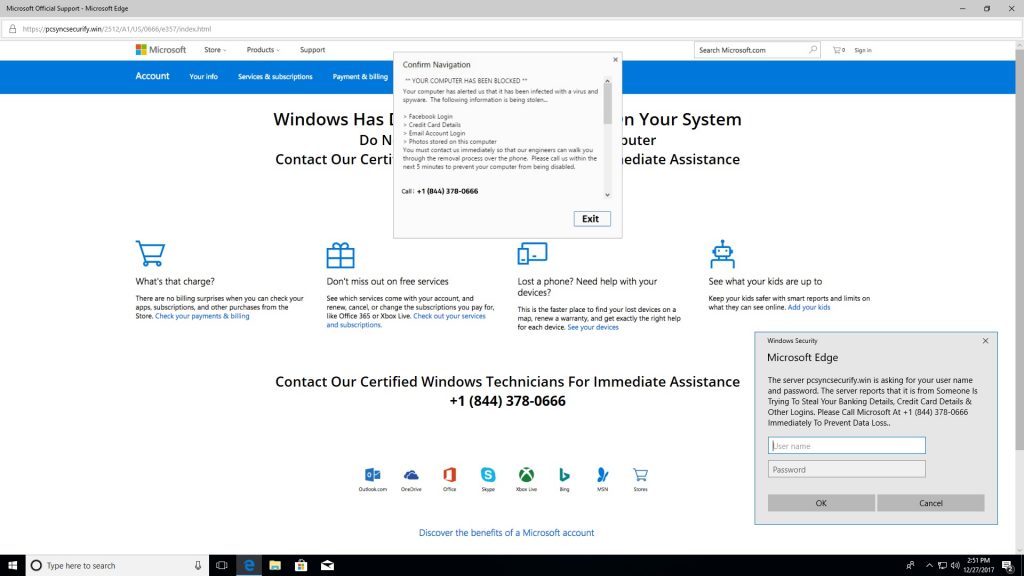

If a social network sells ad space (and really, who doesn’t?), then it must consider whether to police its advertisers. At first, this idea probably sounds ridiculous, but in some cases, advertisers can become a huge problem. There are unscrupulous individuals who, for example, buy up ad space on websites and then post ads containing malicious code or ads that attempt to trick users of the site into believing that there is something wrong with their computer. In fact, by pure coincidence, a normally legitimate site that I often visit displayed the fraudulent tech support message shown above while I was working on this article.

And speaking of ads, one has to consider whether the owner of the site has any sort of obligation to keep their site free of fraudulent advertisements. Several years ago, I was at a trade show and one of the vendors who had a booth at the show was showing me something on the company’s website. As we browsed the site, I made the comment that some of the advertisements appearing on the site seemed kind of dubious. The guy I was talking to sighed in exasperation and said that he was pretty sure that the only ad that the company had ever turned down was one from Ashley Madison.

Keeping a social network free of fraudulent ads seems like such a simple thing, and yet advertisers are often kept on the honor system (trusted not to post malicious or fraudulent ads). Of course, this concept is not exactly unprecedented. It has always been the norm, even in the world of printed publications. For instance, I once sold an old Pontiac through a newspaper advertisement. The newspaper did not send someone out to make sure that the car really was in the condition stated in my ad (nor would I have expected them to). They simply assumed that my ad was honest (which it was).

Comments sections

Another area in which a social network could conceivably police content is in the comments section. Anyone who has ever posted a YouTube video has probably gotten some nasty comments from Internet trolls, but believe it or not, I would not want a social network to take it upon itself to automatically remove such comments. The reason for this is that once a site starts removing comments from trolls, it becomes way too easy for that to morph into the removal of comments made by anyone who has a dissenting opinion. As an author, I will be the first to admit that receiving a rebuttal is no fun, but if people who have differing opinions can debate the issue in a polite and civil way, then the discussion can actually make the post more engaging.

What should be (and usually are) automatically removed from social network posts are comments that are nothing more than spam or malicious code. It’s absolutely amazing how pervasive these types of comments really are. My own website receives a few dozen comments containing spam or malicious code every single day. Like social networks, I use a moderation tool to prevent these comments from ever making it onto the site.

User content

When most people talk about policing content on social networks, they are usually referring to the filtering of user content. Before I talk about the pros and cons of user content policing, let me first clarify that I am only talking about content that is legal but considered objectionable for one reason or another. I think that we can all agree that illegal content such as kiddie porn or gruesome animal cruelty videos has no place on social media (or anywhere else for that matter). I also want to clarify that I am in no way taking any sort of political stance. The truth is that politics really aren’t my thing. I am merely engaging in a thought exercise, and attempting to write a balanced piece of content that does not favor any one side.

So with that said, when it comes to controlling content, most sites have well-established terms of use. These terms stipulate what is and is not considered acceptable on the site. Such terms might include anything from avoiding the use of swear words to staying on topic. (You wouldn’t, for example, expect to find a scientific paper on physics posted on a social network for people who love roller coasters.)

Social media sites have a right to set acceptable-use standards. They own the digital real estate and can, therefore, set their own rules. But what about content that may be deemed objectionable to some but that doesn’t violate the site’s acceptable use standards? I’m talking about things like links to external sites, dissenting opinions, blatant misinformation (fake news), and things like that.

In a perfect world, a social network would at least verify the accuracy of user content. For now, though, this would likely prove to be impossible because of the sheer volume of content being added to social media sites each day. According to Zephoria Digital Marketing, “Every 60 seconds on Facebook: 510,000 comments are posted, 293,000 statuses are updated, and 136,000 photos are uploaded.” Sure, there are computer algorithms that can validate certain types of content, but AI has not yet evolved to the point that it can perform reliable fact checking at that scale. So for now, at least, there isn’t really a good way to ensure content accuracy.
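
To put those per-minute figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes that the quoted rates hold steady around the clock, which is a simplification, and the variable and function names are purely illustrative.

```python
# Per-minute Facebook figures quoted above (attributed to Zephoria Digital Marketing).
PER_MINUTE = {
    "comments": 510_000,
    "status updates": 293_000,
    "photos": 136_000,
}

MINUTES_PER_DAY = 60 * 24  # 1,440 minutes in a day


def per_day(per_minute: dict[str, int]) -> dict[str, int]:
    """Scale each per-minute count to a full day, assuming a constant rate."""
    return {kind: count * MINUTES_PER_DAY for kind, count in per_minute.items()}


daily = per_day(PER_MINUTE)
total_daily = sum(daily.values())

for kind, count in daily.items():
    print(f"{kind}: {count:,} per day")
print(f"total: {total_daily:,} items per day")
```

Under that assumption, the three categories alone add up to more than 1.3 billion items a day, which gives a sense of why manual fact checking is not a realistic option.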

But what about policing content on social network sites for things like dissenting opinions or unpopular political viewpoints? Again, a privately owned social network is free to manage user content as it sees fit, but I personally think that it is a mistake for a site owner to censor unpopular content.

Now before you send me any angry letters (or comments), let’s put emotion aside and look at the issue objectively, solely from a business standpoint. The act of moderating and censoring content incurs additional labor costs, and could potentially subject the site to costly legal challenges (although in certain cases, the opposite could also be true).

If someone posts something egregious, then it may be more effective to allow the online community to address it themselves (through comments, thumbs down, etc.) than for the site operators to censor the content. Again, I am not talking about death threats, hate speech, or killing puppies. I am talking about content that is legal, but objectionable for one reason or another.

Why policing content is such a complex issue

Policing content on the web is a surprisingly complex issue. On one hand, I think that at the very least, site owners have a responsibility to protect their users from content that is illegal, malicious, or potentially harmful in some other way. On the other hand, heavy-handed content moderation may sometimes stifle free speech or have other unintended consequences. Ultimately, there are no universally correct answers. Regardless of whether a site polices some, all, or none of its content, there will be those who are unhappy with the policy. Hence, each social network should make a good-faith effort to manage and police content in a way that it believes will best serve its users.

Photo credit: Shutterstock