Regression testing checks whether a release or update to an application has introduced new errors that didn't exist before. This is critical to delivering an outstanding customer experience. As DevOps teams increase the frequency of releases, there are more chances for new issues to creep into a system. The solution isn't to release less often but to improve the quality of regression testing as release frequency grows.

The world's leading web companies are aware of this and invest heavily in improving their regression testing processes. In this post, we look at two such examples: Facebook and Netflix. They take completely different approaches to regression testing, but both approaches are effective at reducing errors, and both companies have been generous enough to share their approach and learnings with the wider developer community.

Facebook: Regression testing before the release

Facebook is known as a pioneer of continuous deployment and, back in 2017, had already reached a cadence of 60,000 releases per day for its Android app alone. That number has likely grown since. The challenge with releasing continuously at such a frantic pace is making sure releases don't break things. To this end, Facebook gives careful thought to regression testing, equipping developers with tools to better gauge the impact of the code they write before it reaches production.

Two such tools are Health Compass and Incident Tracker. Health Compass lets developers specify the configuration for their deployments and have that configuration duplicated across all the required tools. This improves the consistency of test data and simulates real-world usage scenarios more accurately.

The big benefit of this is that all of the internal testing tools speak the same language. They all use the same metadata to describe errors. This brings clarity to regression testing. While Health Compass ensures data consistency, Incident Tracker enables developers to analyze the data and spot issues.
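To make the "same language" idea concrete, here is a minimal Python sketch of a shared error schema. Health Compass's actual format isn't described in this post, so the `ErrorEvent` fields, the two adapter functions (`from_crash_reporter`, `from_ui_test_runner`), and their input keys are all hypothetical. The point is only that once every tool maps its raw output onto one schema, their reports can be compared directly.

```python
from dataclasses import dataclass, asdict


# Hypothetical shared schema: every tool describes an error with the same fields.
@dataclass(frozen=True)
class ErrorEvent:
    tool: str          # which testing or monitoring tool reported it
    error_type: str    # e.g. "crash" or "exception"
    signature: str     # stable identifier, such as a stack-trace hash
    app_version: str
    platform: str
    timestamp: int     # Unix seconds


def from_crash_reporter(raw: dict) -> ErrorEvent:
    """Normalize a record from a hypothetical crash reporter."""
    return ErrorEvent(
        tool="crash_reporter",
        error_type="crash",
        signature=raw["stack_hash"],
        app_version=raw["version"],
        platform=raw["os"],
        timestamp=raw["ts"],
    )


def from_ui_test_runner(raw: dict) -> ErrorEvent:
    """Normalize a record from a hypothetical UI test runner."""
    return ErrorEvent(
        tool="ui_tests",
        error_type=raw["kind"],
        signature=raw["failure_id"],
        app_version=raw["build"],
        platform=raw["device_os"],
        timestamp=raw["time"],
    )
```

Because both adapters emit the same fields, downstream analysis never needs to know which tool produced a record.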

Incident Tracker provides a time-series view of all errors and even de-duplicates errors across tools. This leaves developers with a clear view of which change resulted in which errors. This is key to improving code quality before a release.
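Cross-tool de-duplication plus a time-series view can be sketched roughly as follows. This is not Incident Tracker's implementation; it assumes each error report is a dict with hypothetical `tool`, `signature` (for example a stack-trace hash), `app_version`, and `timestamp` keys, and treats reports with the same signature and version as one error.

```python
from collections import defaultdict


def dedupe(events):
    """Collapse reports of the same error coming from different tools.

    Reports sharing (signature, app_version) are merged; the earliest
    report is kept and the set of tools that saw the error is recorded.
    """
    merged = {}
    for e in sorted(events, key=lambda e: e["timestamp"]):
        key = (e["signature"], e["app_version"])
        if key not in merged:
            merged[key] = {**e, "tools": {e["tool"]}}
        else:
            merged[key]["tools"].add(e["tool"])
    return list(merged.values())


def time_series(events, bucket_seconds=3600):
    """Count events per time bucket (hourly by default).

    Fed the output of dedupe(), this counts first sightings of
    distinct errors per bucket.
    """
    counts = defaultdict(int)
    for e in events:
        bucket = e["timestamp"] - e["timestamp"] % bucket_seconds
        counts[bucket] += 1
    return dict(sorted(counts.items()))
```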

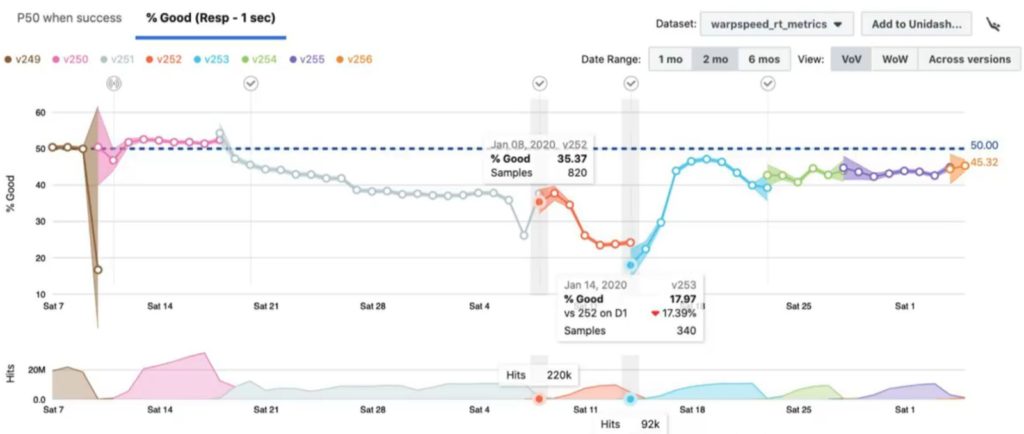

Incident Tracker shows two especially useful views of this data: version over version (VoV) and week over week (WoW). As the image above shows, visualization plays an important role in gauging performance impact. Incident Tracker makes good use of a time-series chart to plot performance data over time, complete with color-coding and informational tooltips. This helps speed up the analysis of regressions and lets developers get to the root of an issue faster.
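Both views boil down to comparing a metric against a baseline: the previous version for VoV, the previous week for WoW. A minimal sketch, assuming you already have error counts (or rates) keyed by version and a list of daily counts; the function names and the 10% regression threshold are illustrative assumptions, not Facebook's values.

```python
def percent_change(current, baseline):
    """Relative change from baseline to current, in percent."""
    if baseline == 0:
        return float("inf") if current > 0 else 0.0
    return (current - baseline) / baseline * 100


def version_over_version(errors_by_version, versions):
    """Compare each release with the one before it.

    errors_by_version: {version: error count or rate}
    versions: versions in release order, oldest first.
    """
    return {
        new: percent_change(errors_by_version[new], errors_by_version[old])
        for old, new in zip(versions, versions[1:])
    }


def week_over_week(daily_counts):
    """Compare the last 7 days with the 7 days before them.

    daily_counts: daily error counts, oldest first (at least 14 entries).
    """
    if len(daily_counts) < 14:
        raise ValueError("need at least 14 days of data")
    this_week = sum(daily_counts[-7:])
    last_week = sum(daily_counts[-14:-7])
    return percent_change(this_week, last_week)


def flag_regressions(changes, threshold_pct=10.0):
    """Return the keys whose increase exceeds the (hypothetical) threshold."""
    return [k for k, v in changes.items() if v > threshold_pct]
```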

These tools can operate in near-real-time by ingesting live data and making it available to developers for analysis. All this helps Facebook developers catch and fix errors early in the development process. This is in line with the concept of shift-left that is central to DevOps.

Facebook doesn’t want to stop here, however. Rather, they’re looking for ways to take testing further left and catch errors right within the IDE itself. This kind of “dry run” testing is also characteristic of GitOps. Testing tools like Sauce Headless are making these kinds of tests possible.

Netflix: Regression testing after the release

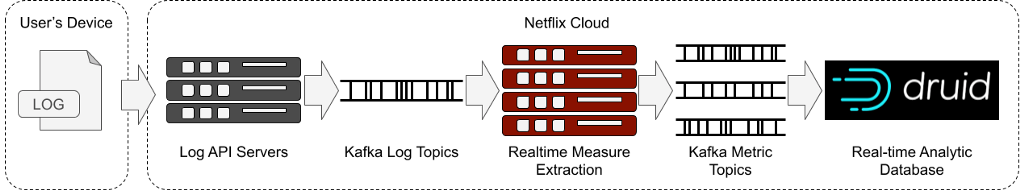

Netflix uses real-time logs from devices to assess whether a new deployment has broken anything or degraded the user experience. These logs are made available for analysis as soon as they're collected from user devices.

Netflix employs the canary deployment approach to release a new version to just a subset of users. If there are errors, the release may be aborted or rolled back. If the release is error-free, it is then opened up to all users.
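A canary decision compares the canary cohort against the baseline cohort that is still on the old version. The sketch below uses a single error-rate ratio with made-up thresholds (`max_ratio`, `min_requests`); real canary analysis typically compares many metrics with statistical tests, so treat this as an illustration of the promote/rollback control flow only.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_verdict(baseline: Cohort, canary: Cohort,
                   max_ratio: float = 1.2, min_requests: int = 1000) -> str:
    """Return "wait", "rollback", or "promote".

    Thresholds are illustrative assumptions, not Netflix's values.
    """
    if canary.requests < min_requests:
        return "wait"  # not enough canary traffic to judge yet
    if baseline.error_rate == 0:
        return "promote" if canary.errors == 0 else "rollback"
    if canary.error_rate > baseline.error_rate * max_ratio:
        return "rollback"  # canary is meaningfully worse than baseline
    return "promote"
```

Waiting for a minimum amount of traffic avoids rolling back (or promoting) on a handful of noisy requests.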

The data streams into Netflix’s systems at 2 million events per second. Netflix uses metadata in the form of tags to categorize the logs. This helps them analyze data from a certain type of device or a certain region. They check for anomalies in the data and look to spot trends.
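Tag-based slicing and a simple anomaly check might look like this. It assumes each event is a dict carrying a hypothetical `tags` dict (for example `device` and `region`) and an `is_error` flag, and it uses a plain z-score rule as a stand-in for whatever anomaly detection Netflix actually runs.

```python
from collections import defaultdict
from statistics import mean, pstdev


def error_rate_by_tag(events, tag):
    """Group events by one tag (e.g. "device" or "region") and compute error rates."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for e in events:
        key = e["tags"].get(tag, "unknown")
        totals[key] += 1
        errors[key] += int(e["is_error"])
    return {k: errors[k] / totals[k] for k in totals}


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag `latest` if it sits more than z_threshold std devs above the history mean."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu, sigma = mean(history), pstdev(history)
    if sigma == 0:
        return latest > mu
    return (latest - mu) / sigma > z_threshold
```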

To analyze the data, Netflix uses Apache Druid, a real-time analytics engine built for speed and scale. Part of how it achieves this is by organizing the data to be analyzed into time chunks. The log data is streamed into Druid in real time via Kafka.
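The idea of time chunks can be illustrated without Druid itself: bucket events by a fixed time granularity, then let a time-range query skip every chunk that falls outside the interval. This is a simplified, in-memory sketch of the concept (Druid's real storage unit, the segment, has far more structure), and the `ts` key and one-hour granularity are assumptions.

```python
from collections import defaultdict


def chunk_start(ts: float, granularity_s: int) -> int:
    """Start (Unix seconds) of the time chunk that contains ts."""
    return int(ts // granularity_s) * granularity_s


def partition_into_chunks(events, granularity_s=3600):
    """Group events into fixed time chunks, keyed by chunk start time."""
    chunks = defaultdict(list)
    for e in events:
        chunks[chunk_start(e["ts"], granularity_s)].append(e)
    return chunks


def query_range(chunks, start, end, granularity_s=3600):
    """Return events with start <= ts < end, scanning only overlapping chunks."""
    result = []
    for chunk in sorted(chunks):
        if chunk + granularity_s <= start or chunk >= end:
            continue  # chunk lies entirely outside the query interval
        result.extend(e for e in chunks[chunk] if start <= e["ts"] < end)
    return result
```

Because most queries ask about a recent window, pruning by chunk means the engine touches a small fraction of the stored data.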

Netflix takes data management for this process very seriously. For example, they set a threshold beyond which excess data gets archived, intentionally filtering out old data so that they're always working with only the freshest real-time data. They also aggregate data to make querying smoother. All this lets developers analyze data and get results in tens of milliseconds.
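Retention and aggregation can be sketched in a few lines. The retention window, the one-minute rollup granularity, and the `device`/`region` dimensions are assumptions chosen for illustration; the post doesn't give Netflix's actual thresholds.

```python
from collections import defaultdict


def apply_retention(events, now, retention_s):
    """Split events into (fresh, archived) around a retention cutoff."""
    cutoff = now - retention_s
    fresh = [e for e in events if e["ts"] >= cutoff]
    archived = [e for e in events if e["ts"] < cutoff]
    return fresh, archived


def rollup(events, granularity_s=60, dims=("device", "region")):
    """Pre-aggregate raw events into per-bucket counts per dimension combination.

    Queries then scan a few aggregated rows instead of every raw event.
    """
    rows = defaultdict(lambda: {"count": 0, "errors": 0})
    for e in events:
        bucket = int(e["ts"] // granularity_s) * granularity_s
        key = (bucket,) + tuple(e[d] for d in dims)
        rows[key]["count"] += 1
        rows[key]["errors"] += int(e["is_error"])
    return dict(rows)
```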

Netflix also thought about how to drive adoption of Druid within the organization. Most developers were unfamiliar with Druid but familiar with Atlas, a time-series database created by Netflix, so the team built a tool that translated Atlas queries into Druid queries. This meant all Netflix developers could use Druid right away, without going through a learning curve. A solution is only as successful as its adoption rate, and smart organizations like Netflix do all they can to make sure everyone is on board.
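To give a feel for what such a translation layer involves, here is a toy translator for a tiny subset of Atlas's stack language (`:eq`, `:and`, `:sum`) into a Druid native timeseries query. It is not Netflix's tool: the datasource name, the `value` metric column, and the minute granularity are assumptions, and real Atlas expressions support many more operators.

```python
def atlas_to_druid(expr, datasource, interval, value_column="value"):
    """Translate a tiny Atlas stack-language subset into a Druid timeseries query.

    Supported forms: key,value,:eq   q1,q2,:and   q,:sum
    Any other operator raises ValueError.
    """
    stack = []

    def pop():
        if not stack:
            raise ValueError("malformed expression: missing operand")
        return stack.pop()

    for token in expr.split(","):
        if token == ":eq":
            value, key = pop(), pop()
            stack.append({"type": "selector", "dimension": key, "value": value})
        elif token == ":and":
            right, left = pop(), pop()
            stack.append({"type": "and", "fields": [left, right]})
        elif token == ":sum":
            stack.append(("sum", pop()))  # mark the filter as aggregated
        elif token.startswith(":"):
            raise ValueError(f"unsupported operator {token}")
        else:
            stack.append(token)  # plain string operand (tag key or value)

    if len(stack) != 1 or not isinstance(stack[0], tuple) or not isinstance(stack[0][1], dict):
        raise ValueError("expression must reduce to a single :sum over a filter")
    _, druid_filter = stack[0]
    return {
        "queryType": "timeseries",
        "dataSource": datasource,
        "granularity": "minute",
        "filter": druid_filter,
        "aggregations": [{"type": "doubleSum", "name": "sum", "fieldName": value_column}],
        "intervals": [interval],
    }
```

For example, `atlas_to_druid("name,errors,:eq,device,tv,:eq,:and,:sum", "client_logs", "2024-01-01/2024-01-02")` yields a query whose filter ANDs two selector filters; `client_logs` is a made-up datasource name.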

Different approaches, same goal

While Facebook and Netflix have taken different approaches to regression testing, both have the same goal in mind: better-quality releases. Both care about the customer experience they provide. Both are data-driven and give careful thought to how they capture, analyze, and use the data available to them. They go out of their way to capture data, no matter how complex the process gets. They carefully clean and organize the data to make it usable. They also work to drive adoption of these solutions within the organization, using data visualization and small workarounds that make the tools behave in ways developers already know. All this is driven by a relentless pursuit of tracking and measuring every step of the development process.

Though this post splits the approaches of these organizations into a before-and-after-the-release dichotomy, in reality both organizations care about the before and the after. Likewise, every organization that cares about customer experience must obsess over the entire pipeline, from IDE to production. To go all in on regression testing, organizations need to both shift left and shift right. Testing needs to happen at every step, and monitoring and feedback should be built into the development process. This shouldn't be left to manual QA testers or Ops folks; it should be automated and systematized with tools. These organizations either used readily available open-source tools or built their own proprietary tools in-house. You could also use a vendor tool and get the job done; the underlying ideas that power the process remain the same.

Any organization can see improvements by combining the best of both approaches shown here and applying the lessons across its own development pipeline. Introduce regression testing across your development process and watch the customer experience reach new highs.

Featured image: Pixabay