If you’re experiencing reaallly ssllooooooowwwwwww download speeds on what is supposed to be a high-speed link, one of the most likely causes has to do with something called TCP Window Scaling not being supported or implemented correctly. Let’s take a closer look at scaling and how it can greatly help improve download speeds, as well as where things can go wrong that crush performance.

TCP basics

When two systems wish to exchange data reliably over an IP network, they usually use TCP as the transport. TCP establishes a session with a number of mutually agreed-upon parameters, transports the data, and guarantees delivery. As specified in RFC 793, TCP provides a reliable transport over networks that may not be reliable.

Acknowledgements

In a TCP session, the sending system and receiving system negotiate parameters to help track the data exchange, and use a series of sends followed by acknowledgements to confirm delivery. A sending system sends a certain amount of data, and then waits for an acknowledgement before sending any more. If the acknowledgement is never received, it’s assumed the data wasn’t either, so the sender resends and the process repeats until all data is sent. While this guarantees delivery, it’s expensive in terms of time and bandwidth, as the acknowledgements require bandwidth to exchange and the time TCP waits for those acknowledgements adds up over a larger data transfer.

Latency

Latency refers to the time it takes for a packet to travel from sender to receiver. It's (usually) measured in milliseconds, and every intermediate device between sender and receiver (routers, proxies, load balancers, et al.) that must process the data adds to it. Busy networks also add latency, and of course the further apart the sender and receiver are, the higher the latency will be. Because the sender has to wait for acknowledgements to make the round trip before it can send more, the higher the latency, the worse the overall throughput, and the larger the data transfer, the more apparent that becomes. All those milliseconds waiting for an ACK add up. So, if there were a way to reduce the number of ACKs required by increasing the amount of data that can be sent before waiting for one, the transfer would be faster, because more bandwidth would be used to transfer actual data and less time would be wasted waiting for ACKs.
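To put rough numbers on that, here is a deliberately simplified Python model. It assumes the sender can have at most one window of unacknowledged data outstanding and must then wait a full round-trip time (RTT) for the ACK; it ignores serialization time, loss, and slow start. The 10 MB transfer size and the function names are hypothetical.

```python
def round_trips_needed(transfer_bytes: int, window_bytes: int) -> int:
    """Rounds of 'send a full window, then wait for the ACK' needed for a transfer."""
    # Integer ceiling division: a partial final window still costs a round trip.
    return (transfer_bytes + window_bytes - 1) // window_bytes


def waiting_time_s(transfer_bytes: int, window_bytes: int, rtt_ms: float) -> float:
    """Seconds spent on round trips in this simplified model."""
    return round_trips_needed(transfer_bytes, window_bytes) * rtt_ms / 1000


if __name__ == "__main__":
    size = 10_000_000  # hypothetical 10 MB download
    for window in (8_192, 65_535, 2_097_152):
        rounds = round_trips_needed(size, window)
        secs = waiting_time_s(size, window, rtt_ms=100)
        print(f"window {window:>9,} bytes: {rounds:>5} round trips, ~{secs:.1f} s at 100 ms RTT")
```

With a 100 ms round trip, the 8K window spends about two minutes just waiting (1,221 round trips), while a 2MB window needs only five.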

Buffers

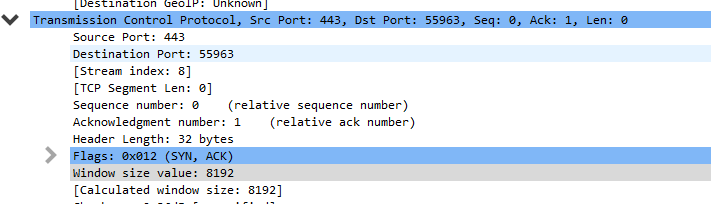

When compared to systems in use when TCP was developed, today's hosts have significantly more memory to store data. Buffers are used to store the segments of data in a transmission so that they can be reassembled to complete the data transfer. The more memory allocated to the buffer, the more data can be transmitted before an acknowledgement is required. The receiver advertises how much data it can currently accept, the Window size value, in every segment it sends, starting with the three-way handshake that establishes the TCP session. The larger that window, the better the overall performance, since that means less overhead. Here, we can see a window of 8K (8192 bytes) advertised in the SYN ACK from the destination system.
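On the host itself, the receive buffer a TCP socket gets is something you can inspect and request. This minimal Python sketch uses the standard `socket` module's `SO_RCVBUF` option; the 4 MiB figure and the helper name are hypothetical. The kernel may adjust what you ask for (Linux, for example, clamps the request to `net.core.rmem_max` and then doubles it), and on Linux pinning the size this way also turns off receive-buffer autotuning for that socket, so treat this as an illustration rather than a tuning recommendation.

```python
import socket
from typing import Optional

# Hypothetical size to request for the receive buffer (4 MiB).
REQUESTED_RCVBUF = 4 * 1024 * 1024


def receive_buffer_size(requested: Optional[int] = None) -> int:
    """Return the receive buffer size the OS reports for a new TCP socket.

    If `requested` is given, ask for it first. This happens before any
    connect(), which is when a buffer setting can still influence what the
    host offers during the handshake.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        if requested is not None:
            s.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, requested)
        return s.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF)


if __name__ == "__main__":
    print("default receive buffer:", receive_buffer_size())
    print("after requesting 4 MiB:", receive_buffer_size(REQUESTED_RCVBUF))
```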


Notice the Window size value of 8192. Scaling is not determined here.

The Window size value is represented by a 16-bit number, so with just the base TCP header the largest it can be is 65,535 bytes, or 64K. But there are options.
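The 16-bit limit comes straight from the TCP header layout. This Python sketch uses the standard `struct` module to pack and unpack the fixed 20-byte header; the ports and sequence numbers in the sample are made up, and only the Window field matters here.

```python
import struct

# Fixed 20-byte TCP header in network byte order: source port, destination port,
# sequence number, acknowledgement number, data offset, flags, window,
# checksum, urgent pointer.
TCP_HEADER = struct.Struct("!HHIIBBHHH")


def window_field(header: bytes) -> int:
    """Return the raw 16-bit Window size value from a TCP header."""
    (_src, _dst, _seq, _ack, _offset, _flags,
     window, _checksum, _urgent) = TCP_HEADER.unpack_from(header)
    return window


# Hypothetical SYN ACK header (data offset 5 words, SYN+ACK flags) advertising 8192 bytes.
sample = TCP_HEADER.pack(443, 51000, 1000, 2001, 5 << 4, 0x12, 8192, 0, 0)
print(window_field(sample))  # 8192
print(2**16 - 1)             # 65535, the largest value the field can hold
```

Trying to pack a window of 65,536 into that field raises `struct.error`, which is the same ceiling TCP itself runs into.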

TCP Window Scaling

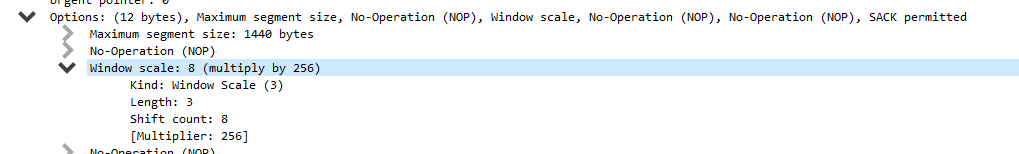

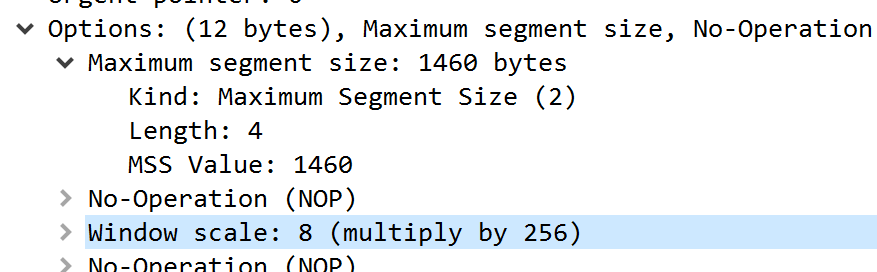

As networks became more reliable and systems' resources increased, RFC 1323, "TCP Extensions for High Performance" (since obsoleted by RFC 7323), introduced TCP Window Scaling, which raises the maximum window from 64K to a whopping 1GB, although it's very rare for two systems to have that much memory they can allocate to buffering data transfers. How does this work? The TCP Window Scale option carries a shift count (at most 14) that is exchanged in the SYN and SYN ACK. Once scaling is in effect, the 16-bit window value is multiplied by 2 raised to that shift count, effectively stretching the window to 30 bits. While the screenshot shows a TCP Window Size of 8K, look further down, where the scale factor is set.
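The scaling arithmetic itself is a left shift. This minimal sketch (the function name is hypothetical) applies a shift count to a raw window value, including RFC 7323's rule that a shift count above 14 is treated as 14. Note that RFC 7323 also specifies that the window field in the SYN and SYN ACK segments themselves is never scaled; the shift applies to segments sent after the handshake.

```python
MAX_SHIFT = 14  # RFC 7323: larger shift counts must be treated as 14


def effective_window(window_value: int, shift_count: int) -> int:
    """Window in bytes once a Window Scale shift count is applied."""
    if not 0 <= window_value <= 0xFFFF:
        raise ValueError("the Window field is only 16 bits")
    if shift_count < 0:
        raise ValueError("the shift count is an unsigned byte")
    # Shifting left by n multiplies the window by 2**n.
    return window_value << min(shift_count, MAX_SHIFT)


print(effective_window(8192, 8))    # 2097152 bytes, the 2048K case
print(effective_window(65535, 14))  # 1073725440 bytes, just under 1 GiB
```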


Further down is where the Window Scale factor is set. In this case, scaling is set to 8.

Window Scale is set to 8, which translates to 2^8, or 256. Multiply an 8K window value by 256 to get a total of 2048K (2MB) that can be in flight before the sender must wait for an ACK. Over a high-latency link, that can really add up. Without window scaling enabled and supported on both ends, the higher the latency, the worse the impact. Take a look at these tables from Microsoft, which show the theoretical maximum throughput over a 1Gbps link across various latency values.

| RTT (ms) | Maximum Throughput (Mbit/sec) |
| --- | --- |
| 300 | 1.71 |
| 200 | 2.56 |
| 100 | 5.12 |
| 50 | 10.24 |
| 25 | 20.48 |
| 10 | 51.20 |
| 5 | 102.40 |
| 1 | 512.00 |
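The numbers in the table follow from a simple rule: a window-limited sender can deliver at most one window of data per round trip, so the ceiling is the window divided by the RTT. The sketch below assumes, as the table's figures suggest, a 64K window counted as 512 Kbit; with that assumption it reproduces each row above. The names are illustrative.

```python
# RTT values (ms) from the table above.
RTTS_MS = (300, 200, 100, 50, 25, 10, 5, 1)

# Assumption: the unscaled 64K window counted as 64 x 8 = 512 Kbit.
WINDOW_KBIT = 64 * 8


def max_throughput_mbps(window_kbit: float, rtt_ms: float) -> float:
    """Window-limited ceiling: at most one full window per round trip.

    Kbit per millisecond is the same as Mbit per second, so no unit
    conversion is needed.
    """
    return window_kbit / rtt_ms


for rtt in RTTS_MS:
    print(f"{rtt:>4} ms  {max_throughput_mbps(WINDOW_KBIT, rtt):8.2f} Mbit/s")
```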

And here's the same 1Gbps link with scaling enabled. Values above 1,000 Mbit/sec are window-limited ceilings; the link itself still tops out at 1Gbps.

| RTT (ms) | Maximum Throughput (Mbit/sec) |
| --- | --- |
| 300 | 447.36 |
| 200 | 655.32 |
| 100 | 1310.64 |
| 50 | 2684.16 |
| 25 | 5368.32 |
| 10 | 13420.80 |
| 5 | 26841.60 |
| 1 | 134208.00 |

As you can see, even on a very low-latency link, the difference in throughput is huge! But when downloading from a web server on the other side of your continent, scaling gives you roughly 256 times (more than two orders of magnitude) better potential throughput. Of course, it won't actually be that fast, for a number of reasons, but this does illustrate the importance of the scale factor.
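Turning that around, you can estimate how large a window, and therefore how large a shift count, is needed to keep a link full: the bandwidth-delay product (link speed × RTT). This Python sketch assumes the 1Gbps link from the tables and a handful of example RTTs; the helper names are hypothetical.

```python
MAX_WINDOW_FIELD = 0xFFFF  # largest unscaled window, 65,535 bytes
MAX_SHIFT = 14             # RFC 7323 upper limit on the shift count


def bdp_bytes(link_bps: int, rtt_ms: int) -> int:
    """Bandwidth-delay product: bytes that must be in flight to fill the link."""
    return link_bps * rtt_ms // (8 * 1000)


def min_shift_for(link_bps: int, rtt_ms: int) -> int:
    """Smallest shift count whose maximum window covers the BDP.

    Returns MAX_SHIFT if even the largest scaled window falls short.
    """
    needed = bdp_bytes(link_bps, rtt_ms)
    for shift in range(MAX_SHIFT + 1):
        if MAX_WINDOW_FIELD << shift >= needed:
            return shift
    return MAX_SHIFT


for rtt in (1, 25, 100, 300):
    print(f"{rtt:>3} ms: BDP {bdp_bytes(1_000_000_000, rtt):>11,} bytes, "
          f"shift >= {min_shift_for(1_000_000_000, rtt)}")
```

At a 100 ms RTT the 1Gbps link needs about 12.5 MB in flight, which works out to a shift count of 8, the same scale factor seen in the capture earlier.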

Routers and VPNs and proxies, oh my!

Now that you understand what the TCP scale factor does, it should be clear why it's one of the first (and easiest) things to check when troubleshooting slow throughput. All modern operating systems support it: the RFC defining it was first published in 1992, and Windows, Linux, and Mac operating systems all implement it. But a number of network devices do not, either because of age or intentional configuration. Proxies in particular may reduce or eliminate the TCP scale factor altogether in order to handle the load of multiple concurrent users. It's common to see proxies from many vendors set a lower scale factor out of the box, and to further reduce or eliminate it in response to high demand. Sure, that proxy is protecting you from bad things, but at what cost?
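Before chasing proxies, it can be worth confirming the host itself hasn't had scaling turned off. On Linux, the `net.ipv4.tcp_window_scaling` sysctl controls this and is readable at `/proc/sys/net/ipv4/tcp_window_scaling`. The minimal Python sketch below (function name hypothetical) reads it and returns `None` on systems without that file, such as Windows and macOS, which use different tooling.

```python
from pathlib import Path
from typing import Optional

# Linux sysctl file; a value of 1 means TCP window scaling is enabled.
LINUX_SYSCTL = Path("/proc/sys/net/ipv4/tcp_window_scaling")


def window_scaling_enabled(path: Path = LINUX_SYSCTL) -> Optional[bool]:
    """Return True/False from the sysctl file, or None if it can't be read."""
    try:
        return path.read_text().strip() == "1"
    except OSError:
        # File missing (non-Linux) or unreadable.
        return None


print(window_scaling_enabled())
```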

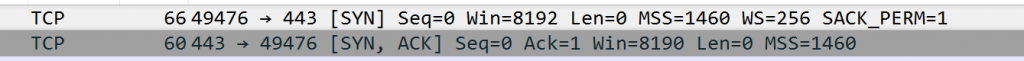

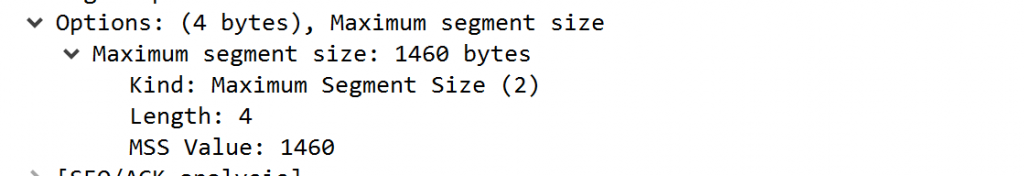

To confirm this is happening, take a network trace from your client and from the server or, if that is not possible, from at least the other side of the proxy or other suspect device. When you open a session from your client, you will see the TCP Scale factor in the SYN, like this.

Note that in the TCP SYN, in the TCP section after the flags, the Options field shows 12 bytes, and the Window scale option is among them. You should see that option make it all the way to the server, and the server's response should carry a Window scale at or close to the client's. Busy servers may reduce the scale to 4, or even 2 if they are extremely busy.
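To see what those 12 bytes can contain, here is a minimal Python sketch of a TCP options walker. The sample bytes are a hypothetical SYN options field laid out as MSS, NOP, Window Scale, NOP, NOP, SACK permitted (a common 12-byte arrangement, though stacks vary); the function names are illustrative, not taken from any capture tool.

```python
from typing import Dict, Optional

# TCP option kinds used below.
EOL, NOP, MSS, WSCALE, SACK_PERMITTED = 0, 1, 2, 3, 4


def parse_tcp_options(data: bytes) -> Dict[int, bytes]:
    """Map option kind -> option payload for a raw TCP options field."""
    options: Dict[int, bytes] = {}
    i = 0
    while i < len(data):
        kind = data[i]
        if kind == EOL:    # end of option list
            break
        if kind == NOP:    # single-byte padding
            i += 1
            continue
        if i + 1 >= len(data):
            raise ValueError("truncated option")
        length = data[i + 1]  # includes the kind and length bytes
        if length < 2 or i + length > len(data):
            raise ValueError(f"bad length {length} for option kind {kind}")
        options[kind] = data[i + 2 : i + length]
        i += length
    return options


def window_scale_shift(data: bytes) -> Optional[int]:
    """Shift count from the Window Scale option, or None if it is absent."""
    payload = parse_tcp_options(data).get(WSCALE)
    return payload[0] if payload else None


# Hypothetical 12-byte SYN options: MSS 1460, NOP, WS 8, NOP, NOP, SACK permitted.
syn_options = bytes.fromhex("020405b4" "01" "030308" "0101" "0402")
print(len(syn_options), window_scale_shift(syn_options))  # 12 8
```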

Here's an even more obvious indicator that something is wrong, and you don't even have to expand packets. Look at the SYN, which has WS=256, meaning a Window Scale shift count of 8 (2^8). The SYN ACK comes back without WS even showing in the response!


You can see the WS=256 in the SYN, and nothing about WS in the SYN ACK. That means no scaling!

Expanding that, we see the SYN offers a Window Scale factor.

And the SYN ACK ignores it completely!
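The rule behind "no WS in the SYN ACK means no scaling" can be sketched as follows. Per RFC 7323, scaling is used only if both the SYN and the SYN ACK carry the Window Scale option; otherwise both directions fall back to a shift of 0. This minimal Python sketch assumes the shift counts have already been read out of the capture (`None` when the option is missing); the function names are hypothetical.

```python
from typing import Optional, Tuple

MAX_SHIFT = 14  # RFC 7323 upper limit on the shift count


def negotiated_shifts(syn_ws: Optional[int],
                      synack_ws: Optional[int]) -> Tuple[int, int]:
    """Return (client_shift, server_shift) in effect after the handshake."""
    if syn_ws is None or synack_ws is None:
        # One side didn't send the option: no scaling in either direction.
        return (0, 0)
    return (min(syn_ws, MAX_SHIFT), min(synack_ws, MAX_SHIFT))


def max_window_bytes(shift: int) -> int:
    """Largest window a side can advertise with the given shift."""
    return 0xFFFF << shift


# The case described above: SYN offers WS=256 (shift 8), SYN ACK has no WS.
client, server = negotiated_shifts(8, None)
# For a download, the client's shift is what matters, since it scales the
# receive window the client advertises to the server.
print(client, server, max_window_bytes(client))  # 0 0 65535
```

Had the SYN ACK carried its own Window Scale option, the client's shift of 8 would have let it advertise up to 16,776,960 bytes instead of 65,535.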

If you see this, then you know something is killing the TCP scale factor off, which in turn is killing your performance. It may take you some time to determine which device is doing this, but start at your proxy, and nine times out of ten you will have your culprit. For example, certain models of Bluecoat do not support TCP Window Scale by default, but it can be enabled with a simple set of commands and a reboot.

Catch a wave

If your downloads or speed tests don't live up to the purported bandwidth of your link, don't blame your ISP right off the bat. Take a trace, look at the TCP Window Scale factor, and if it's not being used, start looking at your network infrastructure. That one option can make a huge difference in overall network performance.