Time is precious for the IT professional. Workloads can be overwhelming as organizations trim their budgets to keep shareholders happy or battle competition in the marketplace. Automation is clearly a key to working efficiently in IT environments, but achieving this can mean a steep learning curve for IT pros who are already short of time to learn new skills. Windows PowerShell is one powerful avenue for implementing automation in Windows-centric environments (and increasingly in others), but if you want to make good use of PowerShell you need to be able to work fast and smart with it. That’s where the advice and experience of an expert can come in handy, and I asked one such PowerShell expert to share some thoughts with me on this subject so I can pass them on to our TechGenix readers.

Desmond Lee is principal cloud solution architect at Swiss IT Fabrik GmbH and specializes in end-to-end enterprise infrastructure solutions built around proven business processes and people integration across various industries. Desmond brings with him over a decade of global field experience in the project management, security, virtualization, systems management and automation, educational, oil/gas, travel, healthcare, telecommunications and unified communications space from KMU/SME to Fortune 500 conglomerates. Desmond is a past Microsoft Most Valuable Professional, an SVEB Certified Trainer, and founder of the Swiss IT Pro User Group, an independent, nonprofit organization for IT Pros by IT Pros championing Microsoft technologies. Desmond also blogs regularly about various PowerShell-related topics and can be found on Twitter as well.

Let’s now learn how to work fast and smart using PowerShell so we don’t get buried under the unending strain of our professional workload.

Some background info

“Working Faster & Smarter with PowerShell” is a breakout session I presented at the recent PowerShell Conference 2017 Asia in Singapore. To the seasoned PowerShell DevOps, certain topics may seem obvious while others are new or not always evident. In this article, I shall run through and share some of the highlights discussed at the conference. Although PowerShell v2.0 is deprecated in the latest Windows 10 Fall Creators Update release, most code samples provided should continue to work unless otherwise stated.

Warm-up

First off is the classic approach to check for invalid items or null objects. Take the example of a typical code fragment as follows:

$s = ""

Get-Process | Select-Object -Property Company | Where-Object company -ne $s

This attempt to filter out objects with empty (null) Company entries fails, because an empty string is not equal to $null:

"" -eq $null

#False

A simple fix is to edit $s = $null and re-run the statement. Nevertheless, you can improve this further by dropping the logical comparison:

gps | select Company | ? company

This trick works because Where-Object, given only a property name, passes along just those objects whose property value evaluates to true. Objects whose Company is null or an empty string are dropped before they reach the end of the pipeline.
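To see the difference between the two filters without depending on whatever processes happen to be running, here is a minimal sketch that uses three made-up objects (the names and companies are hypothetical). It assumes PowerShell 3.0 or later for [pscustomobject] and the simplified Where-Object syntax.

```powershell
#requires -version 3.0
# Hypothetical sample data: one real value, one $null, one empty string.
$items = @(
    [pscustomobject]@{ Name = 'alpha'; Company = 'Contoso' }
    [pscustomobject]@{ Name = 'beta';  Company = $null }
    [pscustomobject]@{ Name = 'gamma'; Company = '' }
)

# Comparing against an empty string lets the $null entry slip through.
$notEmptyString = @($items | Where-Object Company -ne '')

# Testing the property on its own drops both $null and empty strings.
$truthy = @($items | Where-Object Company)

$notEmptyString.Name   # alpha, beta
$truthy.Name           # alpha
```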

Built-in PowerShell cmdlets

Turning our attention to built-in cmdlets, Get-ChildItem (aliases: dir, ls) is a frequently used cmdlet in a manner similar to:

dir *.cpl -Recurse -ErrorAction:SilentlyContinue

You probably noticed that it can take some time before any hits are returned. This is because *.cpl is automatically mapped to the -Path parameter and may not correspond to your intention to filter on filenames with the corresponding extension. How this really works under the hood can be uncovered with Trace-Command.
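One possible way to watch the parameter binding is sketched below. It assumes an interactive console, since -PSHost sends the trace output to the host, and the exact wording of the trace lines can differ between PowerShell versions.

```powershell
# Confirm that the ParameterBinding trace source is available in this session.
$traceSource = Get-TraceSource -Name ParameterBinding

# Trace how the positional argument *.cpl is bound when Get-ChildItem runs.
# Look for the BIND lines in the output to see which parameter receives it.
Trace-Command -Name ParameterBinding -PSHost -Expression {
    Get-ChildItem *.cpl -ErrorAction SilentlyContinue
}
```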

To optimize the search process, the statement should instead read as:

#requires -version 5.0

#-Depth limits search to one level of sub-folder

dir -Path $env:windir -Filter *.cpl -Recurse -ea:SilentlyContinue -Depth 1

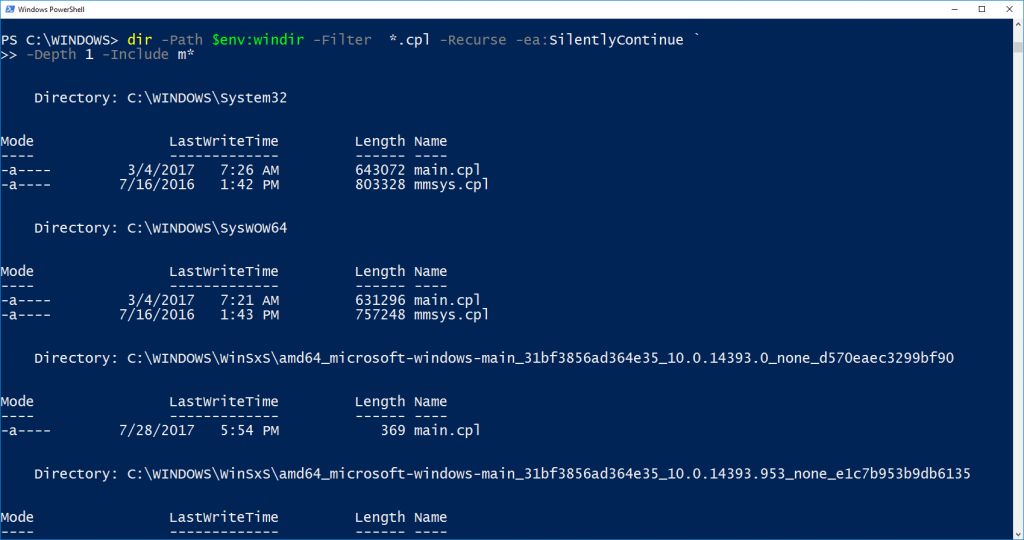

Moving forward, retrieving a list of files that start with a specific character can simply be realized with a minor modification:

dir -Path $env:windir -Filter m*.cpl -Recurse -ea:SilentlyContinue -Depth 1

The explicit use of the -Filter parameter guarantees that the filter is carried out at the “server backend” before a matching result set is returned to the client or caller. Based on this understanding, you may be tempted to use the -Include parameter as an alternative:

dir -Path $env:windir -Filter *.cpl -Recurse -ea:SilentlyContinue -Depth 1 -Include m*

Not only does the execution time appear to be slower, the -Depth parameter is no longer respected, i.e. the search traverses beyond one level of subfolder.

Whether this is by design or a bug remains to be clarified. Note that improvements in Get-ChildItem were introduced since PowerShell Version 3.0 although this continues to be debatable. The moral of this story is to know your cmdlets well and test until you are satisfied that they perform as expected.
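In the spirit of testing until you are satisfied, the following sketch builds a small scratch folder and checks that -Filter returns the same files as filtering afterwards with Where-Object. The folder and file names are made up for illustration, and timings are left out on purpose because they vary from machine to machine.

```powershell
# Create a throwaway folder tree in the temp directory (hypothetical names).
$root = Join-Path ([System.IO.Path]::GetTempPath()) ("gci-demo-" + [guid]::NewGuid())
$sub  = Join-Path $root 'sub'
$null = New-Item -Path $sub -ItemType Directory -Force
'main.cpl', 'mouse.cpl', 'notes.txt' | ForEach-Object {
    $null = New-Item -Path (Join-Path $root $_) -ItemType File
}
$null = New-Item -Path (Join-Path $sub 'modem.cpl') -ItemType File

# Filter applied by the provider while the files are enumerated.
$byFilter = @(Get-ChildItem -Path $root -Filter 'm*.cpl' -Recurse)

# Filter applied afterwards, once every item has been sent down the pipeline.
$byWhere = @(Get-ChildItem -Path $root -Recurse | Where-Object { $_.Name -like 'm*.cpl' })

"Filter: $($byFilter.Count) file(s); Where-Object: $($byWhere.Count) file(s)"

# Clean up the scratch folder.
Remove-Item -Path $root -Recurse -Force
```

To compare speed on a real folder such as $env:windir, each Get-ChildItem statement can be wrapped in Measure-Command.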

Working with arrays

The array type is a perfect candidate to store multiple items or objects of the same or different types. Trying to check if an item $myCity exists in the $cities array:

$cities = "London","Paris","Berlin","Bern"

$myCity = "Zürich"

is usually realized using Example 1. This can easily become unmanageable and cumbersome with a large array. Rather, consider using the -in or -contains operator (-cin and -ccontains are the case-sensitive equivalents) to do the same job (Example 2).

Example 1

$myCity -eq "London" -or $myCity -eq "Paris" -or $myCity -eq "Berlin" -or $myCity -eq "Bern"

Example 2

#requires -version 3.0

$myCity -in $cities

$cities -contains $myCity

It is likely that you want to know the precise location (index) of an item in the array and the code sample in Example 3 is one common style many DevOps adopt. A better way is to utilize the static IndexOf method of the [array] .NET class as given in Example 4.

Example 3

for ($i = 0; $i -lt $cities.Count; $i++) {

if ($cities[$i] -eq $myCity) {

"found '$($cities[$i])'"

break

}

}

Example 4

#if ($cities -contains $myCity)

if ($myCity -in $cities) {

$k = [array]::IndexOf($cities, $myCity)

"found '$($cities[$k])'"

}

Note that IndexOf is case-sensitive and returns the index position only for an exact match. Any variation in case, length, or characters causes the lookup to fail, which is indicated by a return value of -1. As a result, you can skip the -in or -contains logical test entirely, check the return value instead, and have your cake and eat it too!
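A short sketch of the IndexOf behavior follows. The lowercase lookup at the end is only one possible workaround for a case-insensitive search; it is not something the IndexOf method provides by itself.

```powershell
$cities = 'London', 'Paris', 'Berlin', 'Bern'

# Exact match: returns the zero-based position.
$exact = [array]::IndexOf($cities, 'Bern')        # 3

# Different case: IndexOf compares case-sensitively and returns -1.
$wrongCase = [array]::IndexOf($cities, 'bern')    # -1

# -in ignores case, so it can report a hit that IndexOf cannot locate.
$isMember = 'bern' -in $cities                    # True

# Workaround (an assumption, not part of IndexOf): normalize both sides first.
$lowered = @($cities | ForEach-Object { $_.ToLowerInvariant() })
$ignoreCase = [array]::IndexOf($lowered, 'BERN'.ToLowerInvariant())   # 3

# Testing the return value replaces the separate -in or -contains check.
if ($exact -ge 0) { "found '$($cities[$exact])' at index $exact" }
```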

Still on the same topic, a fixed-sized array can be created using a variety of mechanisms like $arr = @( ). To “grow” an array, a completely new one with the same name is always created behind the scenes in order to accommodate incoming elements. This is at the expense of extra time and CPU cycles, and can seriously affect performance involving arrays with a sizeable number of elements that change dimensions often. Moreover, existing elements cannot be removed from a regular array.

The limitations of a conventional PowerShell array can be overcome by turning to System.Collections.ArrayList for help. How to deploy this is illustrated in the self-explanatory code sample:

$arr1 = New-Object System.Collections.ArrayList

#adds 1000 elements to the ArrayList

1..1000 | % { [void]$arr1.Add($_) }

#preferred – adds another 1000 elements in one go

$arr1.AddRange(1001..2000)

#take away the item having the value 3

$arr1.Remove(3)

#remove item at array index 3

$arr1.RemoveAt(3)

#starting from index 2 remove a count of 5 items

$arr1.RemoveRange(2,5)

[void] is only required if you want to suppress echo of the array index for the newly added single element. You can attain the same effect by piping the statement to Out-Null.
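To get a feel for the cost of growing a regular array, the sketch below fills a plain array with += and an ArrayList with Add, timing each with a stopwatch. The element count of 5000 is an arbitrary choice, and the measured times depend on the machine, so none are quoted here.

```powershell
$count = 5000   # arbitrary size chosen for illustration

# Regular array: every += allocates a new array and copies the old elements.
$sw = [System.Diagnostics.Stopwatch]::StartNew()
$plain = @()
foreach ($n in 1..$count) { $plain += $n }
$sw.Stop()
$plainMs = $sw.Elapsed.TotalMilliseconds

# ArrayList: Add appends in place; [void] suppresses the returned index.
$sw = [System.Diagnostics.Stopwatch]::StartNew()
$list = New-Object System.Collections.ArrayList
foreach ($n in 1..$count) { [void]$list.Add($n) }
$sw.Stop()
$listMs = $sw.Elapsed.TotalMilliseconds

"Array +=  : {0:N1} ms for {1} items" -f $plainMs, $plain.Count
"ArrayList : {0:N1} ms for {1} items" -f $listMs, $list.Count
```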

It may come as a surprise when you find out that none of the preceding methods shows up upon running $arr1 | gm. Depending on the data type(s) inserted, the resulting output will show the corresponding object methods and properties in its place. To get to the associated properties for ArrayList, the trick is to pass $arr1 as an argument to the InputObject parameter of Get-Member.

Note that a call to $arr1.ToArray() copies the elements into a regular PowerShell array (System.Object[], derived from the System.Array base type). Better still, assigning the result to a distinct variable creates an independent copy of the array that holds no reference to the original ArrayList. That is to say, replacing an array element in either copy does not affect the contents stored in the other. (The copy is shallow, so elements that are themselves reference-type objects are still shared between the two.) This technique addresses the common need to duplicate a list (array) of items while maintaining clear separation.
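The two points above, member discovery with -InputObject and the independence of the ToArray() copy, can be checked with a small sketch like this one. The variable names are chosen for illustration only.

```powershell
$list = New-Object System.Collections.ArrayList
$list.AddRange(1..5)

# Piping unrolls the list, so Get-Member would describe the Int32 elements.
# Passing the list via -InputObject describes the ArrayList itself.
$listMethods = Get-Member -InputObject $list -MemberType Method |
    Select-Object -ExpandProperty Name
$listMethods -contains 'RemoveRange'   # True

# ToArray() returns a separate array holding the same values.
$copy = $list.ToArray()
$copy.GetType().Name                   # Object[]

# Changing one side leaves the other untouched.
$copy[0] = 99
$list[1] = 42
"list: $($list -join ',')"             # list: 1,42,3,4,5
"copy: $($copy -join ',')"             # copy: 99,2,3,4,5
```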

Regular expressions

Many DevOps practitioners shy away from regular expressions (regex) because the topic has a reputation for being difficult to learn, let alone use. The good news is that basic administrative tasks can be accomplished easily with a rudimentary understanding of regex.

Let us explain with an example where the goal is to extract the username from the Distinguished Name field in Active Directory. A straightforward way is listed here:

$dn = "CN=dlee,OU=IT,OU=Corp,DC=leedesmond,DC=com"

$idx = $($dn).IndexOf(",")

$dn.SubString(0,$idx).SubString(3)

#dlee

Using regex, the solution can be stripped down to become:

$m = [regex]"CN=(.+)"

$dn -match $m

#True

$matches

#Name Value

#---- -----

#1 dlee,OU=IT,OU=Corp,DC=leedesmond,DC=com

#0 CN=dlee,OU=IT,OU=Corp,DC=leedesmond,DC=com

When the pattern matches, -match returns $true and fills the automatic $matches hashtable. The entry named “0” holds the entire matched text, while the entry named “1” holds the text captured by the first parenthesized group. A period or dot matches any character, whereas a trailing plus sign means one or more occurrences of the preceding element.

Consequently, an unexpected effect is the inclusion of the comma (“,”) and the remaining text as part of the first matching group. A simple fix is to exclude this punctuation mark as depicted:

$m = [regex]"CN=([^,]+)"

$dn -match $m

$matches[1]

#dlee

As you can see, working with regular expressions is neither that complicated nor daunting after all.
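As a small extension of the same idea, here is a sketch that uses a named capture group and the -replace operator. The group name user is an arbitrary label chosen for this example.

```powershell
$dn = 'CN=dlee,OU=IT,OU=Corp,DC=leedesmond,DC=com'

# Named group: the captured text is stored under the key 'user' in $matches.
if ($dn -match '^CN=(?<user>[^,]+)') {
    $user = $matches['user']
}
$user          # dlee

# -replace: keep only the first captured group and discard the rest.
$viaReplace = $dn -replace '^CN=([^,]+),.*$', '$1'
$viaReplace    # dlee
```

Anchoring the pattern with ^ makes sure that only a CN at the very start of the string is considered.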

Advanced functions

Introduced in Version 2.0, an Advanced Function typically starts with the [CmdletBinding()] directive or tag to emulate native cmdlets behavior in a regular PowerShell function. One of the least used yet time-saving features is the automatic validation of function parameters without having to write your own test logic. A simple comparison between the classic and Advanced Function methods is presented in Examples 5 and 6.

Example 5

$min = 1; $max = 5

function fb($i) {

#check if $i lies within the given range

if ($i -ge $min -and $i -le $max) {

"'$i' is within range"

}

else {

"'$i' is out of range"

}

}

Example 6

function fb {

[CmdletBinding()]

param(

[ValidateRange(1,5)]

$i

)

"'$i' is within range"

}

You will soon find out that with this power and convenience comes new responsibility. Executing an advanced function with an invalid or out-of-range parameter will cause an error to be thrown. To address this, you must wrap the actual function call in a try … catch error handling block:

#error handling

try { fb -i 0 } catch { "Out of range!" }
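ValidateRange is only one of several validation attributes. The sketch below combines a few others in a single function; the function name Test-ServerInput, its parameters, and the allowed values are all hypothetical and serve only to illustrate the attributes.

```powershell
function Test-ServerInput {
    [CmdletBinding()]
    param(
        # Rejects $null and empty strings.
        [ValidateNotNullOrEmpty()]
        [string]$Name,

        # Accepts only one of the listed values.
        [ValidateSet('dev', 'test', 'prod')]
        [string]$Stage = 'dev',

        # Accepts only values that match the regular expression (1 to 3 digits).
        [ValidatePattern('^\d{1,3}$')]
        [string]$Port = '80'
    )

    "$Name / $Stage / $Port"
}

# A valid call runs the function body.
$ok = Test-ServerInput -Name 'web01' -Stage 'prod' -Port '443'
$ok    # web01 / prod / 443

# An invalid value is rejected during parameter binding, before the body runs.
$rejected = try {
    Test-ServerInput -Name 'web01' -Stage 'qa'
}
catch {
    "Rejected: $($_.Exception.Message)"
}
$rejected
```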

Instead of validating against a fixed parameter set in a PowerShell advanced function, you can employ the validation statement in a script block. The logic for error handling is transparent to the user and packed inside the function block itself. A code sample for this is shown below:

function foobar($mfl, $sbb)

{

$mfl | % {

try {

if (&$sbb $PSItem) { "'$_' is my colour.`n" }

}

catch {

"Not my colour!`n($($_.Exception.Message))`n"

}

}

}

#

$sb = { param([ValidateSet("red","green","blue")]$flist) $flist }

$myflist = "pink","blue","green"

foobar $myflist $sb

The advantage with this tactic is that the function remains largely unchanged and is usable for different data sets based on the validation rule passed as a function parameter via the script block. To explore further, consider calling up Get-Help about_Functions_Advanced* to learn more about this powerful feature.

How fast is my code?

Before we wrap up, one question remains: how long does a block of code take to execute? One simple way to measure this is to wrap the code between a pair of Get-Date calls and take the time difference once the code completes.
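A minimal sketch of the Get-Date approach is shown here. The loop is just a placeholder workload, and the resolution of Get-Date is coarser than that of a dedicated timer, so this is best suited to code that runs for more than a few milliseconds.

```powershell
# Record the start time.
$start = Get-Date

# Placeholder workload: sum the numbers 1 to 1000.
$total = 0
foreach ($n in 1..1000) { $total += $n }

# Subtracting two DateTime values yields a TimeSpan.
$elapsed = (Get-Date) - $start

"Sum = $total, elapsed = {0:N1} ms" -f $elapsed.TotalMilliseconds
```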

An alternative solution is to deploy the Measure-Command cmdlet where code statements are placed within a script block used as an argument to the Expression parameter as given here:

Measure-Command -Expression { gps | ? company | select name, company }

A third option is to literally use a stopwatch from the .NET framework’s diagnostics class. Putting this in practice is as simple as calling the StartNew() static method which immediately starts the clock ticking. Once the last statement of code terminates, it is necessary to execute the Stop() method. By inspecting the Elapsed property, a measure of the actual duration can be taken thereafter. You can check the IsRunning property and Start() or Stop() the stopwatch anytime at will.

$stopwatch = [system.diagnostics.stopwatch]::StartNew()

foreach ($num in 1..1000) { $num }

$stopwatch.Stop()

$stopwatch.Elapsed

Whether you are a beginner or experienced DevOps in the world of automation with PowerShell, I certainly hope that this article has served both as a timely refresher and provided an insight to help you explore brave new grounds going forward. A final food for thought — how do you swap values stored in two distinct variables without involving a temporary variable? Have fun!